How to Evaluate If Your Product Is Ready for AI (Before You Invest in Development)

Key Takeaways

Before you dive in, here’s what this blog will help you walk away with:

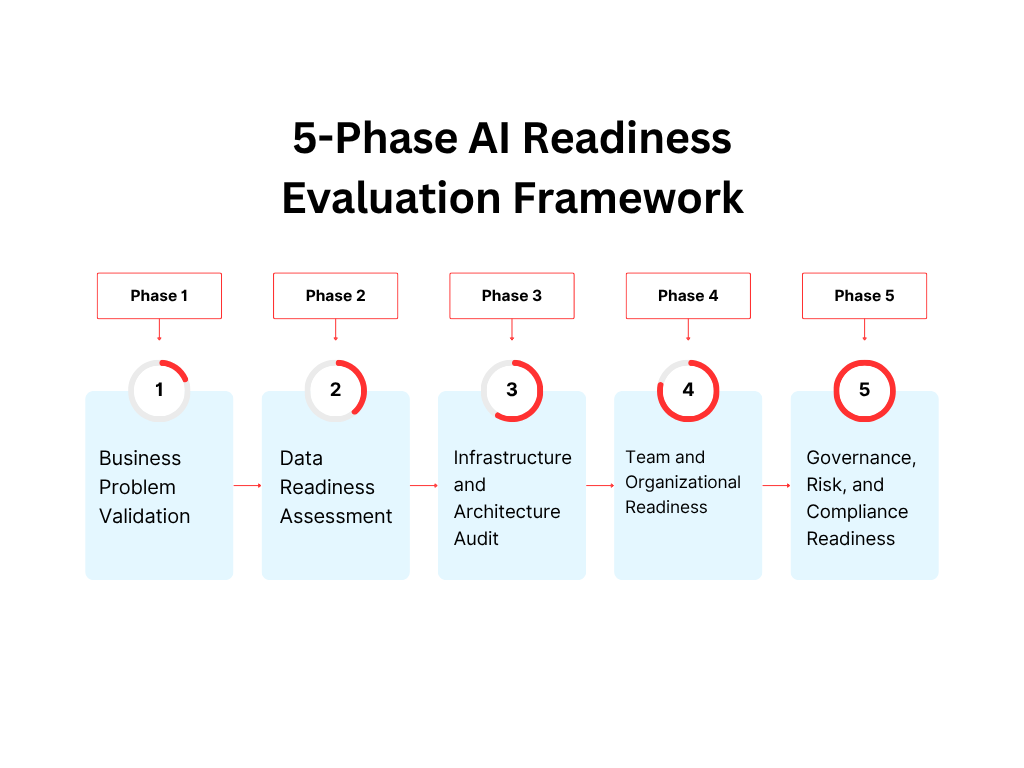

- AI readiness is a structured evaluation, not a gut feeling or a competitive reflex. There are five measurable phases, and each has clear pass/fail signals.

- Most products that fail at AI fail in Phase 1 or Phase 2, because of either a poorly defined business problem or data quality issues.

- Data preparation consumes 60–80% of an AI project’s timeline, but most project plans budget 15% for it.

- Integrating AI into a product that isn’t ready accelerates existing problems, at scale, with less control.

- You don’t need a perfect product to start. You need a clear-eyed evaluation of where you stand and a plan to close gaps before development begins.

- The five phases to evaluate: Business Problem Validation → Data Readiness → Infrastructure Audit → Team & Org Readiness → Governance and Risk.

You’ve got buy-in. You’ve got a budget. Someone in leadership has said the words “we need to add AI” and now it’s on you to figure out what that actually means.

Here’s the problem no one talks about loudly enough: most AI projects don’t fail because the technology doesn’t work. They fail because the product wasn’t ready for it. Teams rush from roadmap to RFP without ever stopping to ask whether their data is usable, their infrastructure is compatible, or their team is capable of maintaining what they’re about to build.

A 2024 McKinsey report found that only 11% of companies have successfully scaled AI beyond pilot projects. The remaining 89% stall mid-build, pivot after launch, or spend six-figure budgets on features users never adopt. That’s a readiness problem.

As an AI App Development Company in USA, we have seen this a lot, and trust us, it’s entirely avoidable.

If you’re a CTO, product lead, or founder about to greenlight an AI development investment, this blog is written specifically for you. Not for someone who wants a surface-level overview of “AI trends” but for someone who needs a real, structured answer to the question: is my product actually ready for this?

What Is AI Readiness?

Before running any evaluation, align on what you’re actually measuring.

AI readiness is the degree to which your product, data, infrastructure, and team are structurally capable of absorbing AI without breaking what already works, while delivering outputs users can actually trust.

It is not:

- Whether your competitors are shipping AI features

- Whether your roadmap says “AI” in Q3

- Whether your team has experimented with ChatGPT or Copilot

Gartner defines AI readiness across four pillars: data maturity, organizational capability, technology infrastructure, and governance. For product teams, this needs to be operational and phase-structured. Something you can actually walk through, not just read about.

For a broader view of what AI readiness looks like across industries in 2026, this article is worth reading before you begin.

Why Evaluation Comes Before Everything Else

The financial argument for this is straightforward.

- Forrester estimates that poorly scoped AI projects cost enterprises between $1.5M and $4M in wasted development spend per year

- IBM’s 2023 Global AI Adoption Index found 35% of companies cited limited AI skills as the top barrier

- MIT Sloan (2023) found that companies that ran structured readiness assessments before building reported 2.5x higher deployment success rates

The cost of a proper evaluation? Four to six weeks of structured internal analysis. The cost of skipping it? Months of rebuilding.

The 5-Phase AI Readiness Evaluation Framework

This is the core of this blog. Work through each phase sequentially, not aspirationally. Score yourself honestly at each stage before moving to the next.

Phase 1: Business Problem Validation

What you’re evaluating: Whether AI is the right solution and not just a compelling one.

This is the phase most teams skip because it feels obvious. It isn’t. The most expensive AI mistake is solving a vague problem with a sophisticated tool.

How to evaluate:

Start by writing a one-sentence problem statement. It must include a measurable outcome. Examples of strong problem statements:

- “Our support team handles 4,200 tickets/month. 60% are repetitive queries that follow predictable resolution paths, and resolution time averages 8 minutes per ticket.”

- “Our onboarding drop-off is at step 3. 43% of users who reach that screen never complete setup.”

- “Our internal search returns irrelevant results for 38% of queries despite structured metadata.”

Each of these has a defined scope, a measurable baseline, and a clear intervention point for AI.
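A baseline is most useful when it is converted into a unit the business already budgets for. Using only the figures in the first example, the repetitive tickets cost 4,200 × 0.60 × 8 minutes = 336 agent hours per month. The sketch below does that arithmetic; the 50% deflection target is a hypothetical success threshold chosen for illustration, not a benchmark.

```python
# Figures from the support-ticket problem statement above.
TICKETS_PER_MONTH = 4_200
REPETITIVE_SHARE = 0.60
MINUTES_PER_TICKET = 8

def repetitive_hours(tickets: int, share: float, minutes: float) -> float:
    """Agent hours per month spent on repetitive tickets."""
    return tickets * share * minutes / 60

baseline = repetitive_hours(TICKETS_PER_MONTH, REPETITIVE_SHARE, MINUTES_PER_TICKET)

# Hypothetical success threshold: AI fully resolves half of the repetitive tickets.
TARGET_DEFLECTION = 0.50
hours_saved = baseline * TARGET_DEFLECTION

print(f"Baseline: {baseline:.0f} h/month; target saving: {hours_saved:.0f} h/month")
```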

Questions to answer at this phase:

- Can this problem be solved without AI, through a rule-based system, better UX, or a process change?

- If yes, why is AI still the right approach?

- What does “success” look like 90 days post-launch in numbers?

- Who is the internal owner of this problem, and do they have budget authority?

Phase 1 red flags:

- The problem statement includes the words “smarter” or “better” without a metric

- No one can define what a failed AI implementation looks like

- The primary driver is competitive pressure, not user or operational need

Phase 1 pass criteria: You have a one-sentence problem statement, a baseline metric, and a defined success threshold. If you don’t have these, stop here and do the discovery work first.
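The pass criteria and the first red flag can be encoded as a simple gate that the problem owner fills in before any development work starts. This is a hypothetical sketch: the `ProblemStatement` fields are illustrative, and treating "contains a number" as "has a metric" is a crude stand-in for human review, not a substitute for it.

```python
import re
from dataclasses import dataclass
from typing import List, Optional

VAGUE_WORDS = ("smarter", "better")  # red-flag words from the Phase 1 checklist

@dataclass
class ProblemStatement:
    text: str                           # the one-sentence problem statement
    baseline_metric: Optional[float]    # today's measured value, e.g. 43 (% drop-off)
    success_threshold: Optional[float]  # hypothetical 90-day target, e.g. 30 (% drop-off)

def red_flags(ps: ProblemStatement) -> List[str]:
    """Flag vague wording when the statement contains no number at all."""
    if re.search(r"\d", ps.text):
        return []
    return [f"uses '{w}' without a metric" for w in VAGUE_WORDS
            if re.search(rf"\b{w}\b", ps.text, re.IGNORECASE)]

def passes_phase1(ps: ProblemStatement) -> bool:
    """Pass only with a statement, a baseline, a success threshold, and no red flags."""
    return (bool(ps.text.strip())
            and ps.baseline_metric is not None
            and ps.success_threshold is not None
            and not red_flags(ps))
```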

Phase 2: Data Readiness Assessment

What you’re evaluating: Whether your product has the data infrastructure AI needs to function reliably.

AI systems are only as reliable as the data they train or operate on. This phase is where the majority of products discover they’re 6–12 months away from being truly ready.

How to evaluate:

Volume and history

- Do you have at least 12–18 months of user interaction data?

- Is this data consistently collected, or are there gaps, resets, or migrations that created breaks in continuity?
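Both questions can be checked directly against raw event timestamps. A minimal sketch, assuming you can export the date of each tracked event; the 7-day gap tolerance is an arbitrary placeholder to tune to your product's normal usage pattern, and the function names are illustrative.

```python
from datetime import date, timedelta
from typing import List, Tuple

def history_months(days: List[date]) -> float:
    """Approximate span of the event history in months (about 30.44 days per month)."""
    return (max(days) - min(days)).days / 30.44

def find_gaps(days: List[date], max_gap: timedelta = timedelta(days=7)) -> List[Tuple[date, date]]:
    """Return (last_day_before, first_day_after) pairs for breaks longer than max_gap."""
    ordered = sorted(set(days))
    return [(prev, nxt) for prev, nxt in zip(ordered, ordered[1:]) if nxt - prev > max_gap]
```

A gap that lines up with a migration or tracking reset is exactly the kind of continuity break the question above is probing for.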

Quality and structure

- Is your data labeled, structured, and accessible via API or a queryable data warehouse?

- What percentage of your key user events are tracked? If it’s below 70%, your data has gaps too significant for reliable model training.
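Coverage is simple to compute once you have written down which events matter. A minimal sketch, assuming you can list your key user events and the events your analytics pipeline actually receives; the function names are illustrative. The Phase 2 scoring guide uses bands of >70%, 50–70%, and <50%, and this sketch treats exactly 70% and exactly 50% as "Needs Work".

```python
from typing import Set

def tracking_coverage(key_events: Set[str], tracked_events: Set[str]) -> float:
    """Share of key user events that are actually instrumented (0.0–1.0)."""
    if not key_events:
        raise ValueError("define your key user events first")
    return len(key_events & tracked_events) / len(key_events)

def coverage_status(coverage: float) -> str:
    """Map coverage to the Phase 2 scoring bands."""
    if coverage > 0.70:
        return "Ready"
    if coverage >= 0.50:
        return "Needs Work"
    return "Blocker"
```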

Domain specificity

- Is your data domain-specific enough to fine-tune a model, or will you rely entirely on general-purpose LLMs?

- General-purpose models are powerful but not context-aware. A fintech platform, healthcare product, or B2B SaaS tool will produce lower-quality outputs with generic models unless retrieval or fine-tuning is applied.

Privacy and compliance

- Does your data contain PII that would restrict its use in model training or API calls?

- Do you have a documented consent and data governance framework?

- Are there regional compliance requirements (GDPR, HIPAA, DPDP Act) that affect where and how data can be processed?
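Where PII may exist in your data but must not reach a third-party model, a redaction step before the API call is a common control. The sketch below is illustrative only: two hypothetical regular expressions catch obvious emails and phone numbers, but real PII detection needs much broader coverage (names, addresses, national IDs), and redaction alone does not make processing compliant with GDPR, HIPAA, or the DPDP Act.

```python
import re

# Illustrative patterns only; real PII detection needs far broader coverage.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "PHONE": re.compile(r"\+?\d[\d\s().-]{7,}\d"),
}

def redact(text: str) -> str:
    """Replace matched PII with typed placeholders before text leaves your system."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label}]", text)
    return text
```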

Phase 2 scoring guide:

| Criteria | Ready | Needs Work | Blocker |
|---|---|---|---|
| Structured data history | 12+ months | 6–12 months | <6 months |
| Event tracking coverage | >70% | 50–70% | <50% |
| Privacy/compliance framework | In place | Partial | None |
| Data accessible via API/warehouse | Yes | Partial | Siloed/manual |

Phase 2 pass criteria: You score “Ready” or “Needs Work” across all four rows. A single “Blocker” row means your data layer needs dedicated work before integrating AI makes sense.
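The pass rule is easy to apply mechanically. A minimal sketch with hypothetical names: it scores the history row against the table's thresholds and fails Phase 2 if any row is a Blocker; the other rows are passed in as already-assessed scores.

```python
from enum import Enum
from typing import Dict

class Score(Enum):
    READY = "Ready"
    NEEDS_WORK = "Needs Work"
    BLOCKER = "Blocker"

def score_history(months: float) -> Score:
    """First row of the table: 12+ months Ready, 6–12 Needs Work, under 6 Blocker."""
    if months >= 12:
        return Score.READY
    return Score.NEEDS_WORK if months >= 6 else Score.BLOCKER

def phase2_result(scores: Dict[str, Score]) -> str:
    """Any single Blocker row fails Phase 2; otherwise it passes."""
    blockers = [row for row, s in scores.items() if s is Score.BLOCKER]
    if blockers:
        return "Fix data layer first: " + ", ".join(blockers)
    return "Pass"
```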

Phase 3: Infrastructure and Architecture Audit

What you’re evaluating: Whether your existing technical stack can absorb AI without significant re-architecture.

This is where AI tech consulting integration with existing systems adds the most value early on. Technical compatibility issues discovered mid-development are disproportionately expensive to resolve.

How to evaluate:

Architecture pattern

- API-first or microservices architectures handle AI integration with far less friction than monoliths

- If you’re running a monolith, you’re not blocked, but plan for 2–3 months of infrastructure prep before meaningful AI development begins

Cloud and compute

- Does your infrastructure support model hosting, or will you rely on third-party API calls (e.g., OpenAI, Gemini, Anthropic)?

- Can your current cloud setup handle the latency and throughput requirements of real-time AI inference?

- Do you have auto-scaling in place for variable inference load?
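Whichever hosting option you choose, it helps to keep the decision reversible. One common pattern, shown here as a hypothetical sketch rather than a prescribed design, is to hide the model behind a small interface so product code never imports a vendor SDK directly. `TextModel`, `EchoModel`, and `summarize_ticket` are illustrative names; a real client for a self-hosted model or a third-party API would implement the same `generate` method.

```python
from typing import Protocol

class TextModel(Protocol):
    """Anything that turns a prompt into text: a hosted model or an API client."""
    def generate(self, prompt: str) -> str: ...

class EchoModel:
    """Hypothetical stand-in for local tests; swap in a real client later."""
    def generate(self, prompt: str) -> str:
        return f"echo: {prompt}"

def summarize_ticket(model: TextModel, ticket_text: str) -> str:
    # Product code depends only on the TextModel interface, not on a vendor SDK.
    return model.generate(f"Summarize this support ticket:\n{ticket_text}")
```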

Observability and logging

- AI systems require more granular observability than traditional software

- Do your current logging pipelines capture the inputs, outputs, and confidence signals needed to monitor model behavior post-launch?

- Without this, you cannot detect model drift, so AI outputs can degrade over time without anyone noticing
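A minimal sketch of the kind of record such a pipeline might capture per call, assuming a hypothetical `predict` function that returns both an output and a confidence score. Many model APIs do not expose a single confidence value, so in practice this field might come from token log-probabilities or a separate classifier, or be omitted. Logging raw inputs can also capture PII, so the Phase 2 privacy questions apply here too.

```python
import json
import time
from typing import Callable, Tuple

def logged_inference(
    predict: Callable[[str], Tuple[str, float]],
    prompt: str,
    log: Callable[[str], None] = print,
) -> str:
    """Call a model and emit one JSON log line with input, output, confidence, and latency."""
    start = time.perf_counter()
    output, confidence = predict(prompt)
    record = {
        "ts": time.time(),
        "input": prompt,
        "output": output,
        "confidence": confidence,
        "latency_ms": round((time.perf_counter() - start) * 1000, 2),
    }
    log(json.dumps(record))
    return output
```

Comparing how these fields are distributed week over week is one simple starting point for noticing drift.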

Integration pattern selection

Understanding how to integrate AI into an app requires choosing the right pattern up front:

- Real-time API calls: best for conversational features, on-demand generation, and chatbot interfaces

- Batch inference: best for background enrichment like tagging, scoring, or classification

- On-device models: best for latency-sensitive or offline-capable mobile features

- RAG (Retrieval-Augmented Generation): best when your product has proprietary knowledge bases that users need to query

Each pattern has different infrastructure requirements. Choosing the wrong one means rebuilding the integration layer.
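As a rough illustration, the mapping from feature needs to pattern can be written as a small decision function. The `FeatureNeeds` fields and the order of the checks are assumptions made for this sketch; real features often combine patterns, for example a RAG pipeline served through real-time API calls.

```python
from dataclasses import dataclass

@dataclass
class FeatureNeeds:
    user_waits_for_result: bool     # e.g. chat or on-demand generation
    must_work_offline: bool         # e.g. mobile features without connectivity
    queries_proprietary_docs: bool  # users ask questions about your own knowledge base

def suggest_pattern(needs: FeatureNeeds) -> str:
    """Rough mapping of feature needs to the four integration patterns."""
    if needs.must_work_offline:
        return "on-device model"
    if needs.queries_proprietary_docs:
        return "RAG"
    if needs.user_waits_for_result:
        return "real-time API"
    return "batch inference"
```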

Phase 3 pass criteria: Your architecture supports API-level AI integration, your cloud can handle inference load, and you have or can build the observability layer needed for post-launch monitoring.

Phase 4: Team and Organizational Readiness

What you’re evaluating: Whether the humans responsible for building, shipping, and maintaining this AI system are equipped to do so.

This is the most underestimated phase. AI readiness depends on the people as much as on the product.

How to evaluate:

Technical skills gap analysis

- Does your team have experience with prompt engineering, model evaluation, or MLOps?

- Can your engineers integrate and test third-party AI APIs without dedicated support?

- Do you have a data scientist or ML engineer, or will this be entirely API-driven?

Ownership and accountability structure

- Is there a named owner for AI quality post-launch?

- Who is accountable when the model produces a wrong, misleading, or harmful output?

- Is there a defined escalation path for AI-related incidents?

Process readiness

- Do you have a feedback loop to capture user corrections, flag bad outputs, and retrain or adjust behavior over time?

- Is your QA process adapted for probabilistic outputs, not just pass/fail unit tests?
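Adapting QA for probabilistic outputs usually means asserting a minimum pass rate across repeated runs rather than one exact output. A minimal sketch: `pass_rate` is an illustrative helper, `fake_model` is a hypothetical stand-in that returns an acceptable answer 9 times in 10, and both the check and the 0.85 threshold are placeholders your team would define.

```python
from itertools import cycle
from typing import Callable

def pass_rate(generate: Callable[[str], str], prompt: str,
              check: Callable[[str], bool], runs: int = 50) -> float:
    """Call a non-deterministic generator `runs` times; return the share of outputs passing `check`."""
    passed = sum(1 for _ in range(runs) if check(generate(prompt)))
    return passed / runs

# Hypothetical stand-in for a model: 9 acceptable answers in every 10 calls.
_fake_outputs = cycle(["Open Settings > Security to reset your password."] * 9 + ["I'm not sure."])

def fake_model(prompt: str) -> str:
    return next(_fake_outputs)

MIN_PASS_RATE = 0.85  # hypothetical release threshold agreed with QA
```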

Tips for integrating generative AI software into existing design workflows apply here, too. Design teams need:

- Defined states for AI loading, failure, uncertainty, and correction in the design system

- Prompt versioning discipline to prevent design inconsistency from prompt drift

- Parallel review processes where AI-generated outputs are reviewed alongside human-designed alternatives

- Agreed fallback behavior: low-confidence AI outputs should default to human-designed responses, not raw model outputs
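The agreed fallback behavior can be sketched as a small rendering rule. The threshold, the fallback copy, and how `confidence` is derived are all hypothetical placeholders; the returned flag lets the UI show the matching AI or non-AI state from the design system.

```python
from dataclasses import dataclass
from typing import Tuple

@dataclass
class AIResult:
    text: str
    confidence: float  # 0.0–1.0, however your system derives it

FALLBACK_MESSAGE = "Here are some articles that might help."  # hypothetical human-designed default
CONFIDENCE_FLOOR = 0.7  # hypothetical threshold; tune against your own evaluation data

def render(result: AIResult) -> Tuple[str, bool]:
    """Return (text_to_show, is_ai_generated); low-confidence results get the designed fallback."""
    if result.confidence < CONFIDENCE_FLOOR:
        return FALLBACK_MESSAGE, False
    return result.text, True
```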

Phase 4 red flags:

- No one on the team has shipped an AI feature in production before

- QA processes haven’t been updated to handle non-deterministic outputs

- There’s no defined owner for AI quality or model behavior

Phase 4 pass criteria: At a minimum, you have a named AI feature owner, a feedback loop plan, and either an internal capability or a committed external partner who brings the gap skills. If the latter, this 2026 guide to hiring AI developers covers exactly what to look for.

Phase 5: Governance, Risk, and Compliance Readiness

What you’re evaluating: Whether your organization has the guardrails in place to ship AI responsibly and absorb the risk if something goes wrong. AI without governance is a liability.

How to evaluate:

Output governance

- What review process exists before AI-generated content reaches users?

- Are confidence thresholds defined below which the system falls back to a human default?

- Is there a content policy that defines what the AI should never output?
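A content policy only helps if it is enforced somewhere in the pipeline. The sketch below shows the simplest possible version: a hypothetical list of blocked patterns that routes matching outputs to human review instead of publishing them. Keyword rules like these are a crude first layer and miss paraphrases; production systems typically combine them with classifiers and sampled human review.

```python
import re
from typing import List

# Hypothetical policy examples for a fintech or health-adjacent product.
BLOCKED_PATTERNS = [
    re.compile(r"\bguaranteed returns?\b", re.IGNORECASE),
    re.compile(r"\bmedical diagnosis\b", re.IGNORECASE),
]

def policy_violations(text: str) -> List[str]:
    """Return the patterns an output matches."""
    return [p.pattern for p in BLOCKED_PATTERNS if p.search(text)]

def route_output(text: str) -> str:
    """Send policy violations to human review instead of straight to users."""
    return "human_review" if policy_violations(text) else "publish"
```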

Bias and fairness audit

- Has the training data been reviewed for demographic or behavioral bias?

- Are there test sets that specifically probe for discriminatory or unfair outputs?
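One concrete probe for the second question is demographic parity: compare how often the model produces a positive outcome (approve, recommend, escalate) for each group in a labeled test set. This is a minimal sketch of one fairness metric among several; which metric fits, and what gap is acceptable, depends on the use case and is a policy decision rather than something the code can decide.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

def positive_rate_by_group(records: List[Tuple[str, bool]]) -> Dict[str, float]:
    """records: (group, model_gave_positive_outcome). Returns the positive rate per group."""
    totals: Dict[str, int] = defaultdict(int)
    positives: Dict[str, int] = defaultdict(int)
    for group, positive in records:
        totals[group] += 1
        positives[group] += int(positive)
    return {g: positives[g] / totals[g] for g in totals}

def parity_gap(records: List[Tuple[str, bool]]) -> float:
    """Demographic parity difference: highest minus lowest positive rate across groups."""
    rates = positive_rate_by_group(records)
    return max(rates.values()) - min(rates.values())
```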

Regulatory exposure

- Does your product operate in a regulated vertical (healthcare, finance, legal, education)?

- Have you mapped AI feature behavior against applicable regulations: EU AI Act, HIPAA, DPDP, FINRA?

Incident and rollback plan

- Can you disable or roll back AI features independently of a full product release?

- Is there a defined process for responding to a public-facing AI incident?
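Independent rollback usually means putting each AI feature behind a runtime flag. In this sketch an environment variable stands in for a feature-flag service or remote config, which is an assumption for illustration; many deployments only read environment variables at startup, so a real kill switch should be changeable without redeploying. The flag name and `suggest_reply` are hypothetical, and a missing flag keeps the AI path off.

```python
import os
from typing import Callable

def ai_enabled(feature: str) -> bool:
    """Per-feature kill switch read at call time; a missing flag means the AI path stays off."""
    value = os.environ.get(f"AI_FEATURE_{feature.upper()}", "off")
    return value.strip().lower() in {"on", "1", "true"}

def suggest_reply(ticket: str, ai_generate: Callable[[str], str]) -> str:
    if not ai_enabled("smart_reply"):
        return ""  # AI disabled: the UI falls back to the non-AI experience
    return ai_generate(ticket)
```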

Phase 5 pass criteria: You have documented output governance, a defined fallback policy, and regulatory exposure has been mapped. For products in regulated verticals, this phase may require legal review before development begins.

Before any production deployment, this AI system audit guide should be your pre-launch checklist.

Your Overall Readiness Score

After running all five phases, score yourself:

| Phase | Status |
|---|---|
| Phase 1: Business Problem Validation | Pass / Needs Work / Blocked |
| Phase 2: Data Readiness | Pass / Needs Work / Blocked |
| Phase 3: Infrastructure & Architecture | Pass / Needs Work / Blocked |
| Phase 4: Team & Organizational Readiness | Pass / Needs Work / Blocked |
| Phase 5: Governance & Risk | Pass / Needs Work / Blocked |

5 Pass: You’re ready. Brief your development partner and move into sprint planning.

3–4 Pass, rest Needs Work: You’re 4–8 weeks from readiness. Address the gaps in parallel and set a start date.

Any Blocked: Stop. Fix the blocker before spending development budget. One blocked phase can invalidate the entire build.
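The three outcomes can be expressed as a small scoring function. This is a sketch of the rubric as written; the rubric does not say what to do with fewer than three passes and no blocked phase, so the function reports that case explicitly instead of guessing.

```python
from typing import Dict, Literal

Status = Literal["Pass", "Needs Work", "Blocked"]

def overall_readiness(statuses: Dict[str, Status]) -> str:
    """Apply the three readiness outcomes to the five phase statuses."""
    if len(statuses) != 5:
        raise ValueError("score all five phases")
    values = list(statuses.values())
    if "Blocked" in values:
        return "Stop: fix the blocker before spending development budget"
    passes = values.count("Pass")
    if passes == 5:
        return "Ready: brief your development partner and move into sprint planning"
    if passes >= 3:
        return "Close gaps: roughly 4–8 weeks from readiness"
    # Fewer than 3 passes with no blocker is not covered by the rubric above.
    return "Unscored: the rubric does not cover this combination"
```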

When to Bring In an AI Tech Consulting Partner

You don’t always need consulting, but certain signals make it the right call before vendor selection:

- Your architecture hasn’t been evaluated for AI compatibility

- You’re unsure whether to build custom models or use API-based services

- Compliance or data residency requirements affect model selection

- Your team has no prior experience shipping AI in production

AI tech consulting integration with existing systems is especially valuable for enterprises on legacy infrastructure. A good consultant maps your current state, surfaces integration constraints before they become expensive, and gives you an informed architecture decision rather than a vendor recommendation.

SMBs face the same challenges, often with leaner teams and tighter timelines. This blog covers how SMBs can integrate AI into existing software systems with practical, resource-conscious approaches.

If you’re building on SaaS infrastructure, this guide on building AI-powered SaaS applications is worth reading alongside the evaluation you’ve just completed.

Choosing the Right Development Partner Post-Evaluation

Once your readiness score is clear, partner selection becomes significantly easier because you know exactly what gaps need to be filled externally.

An experienced AI powered mobile app development company brings more than engineering capacity. Look for:

- A structured pre-development discovery process

- Model evaluation frameworks for selecting the right AI for your specific use case

- Production-level AI observability and post-launch monitoring capability

- Proven case studies with measurable outcomes, not just “we shipped it.”

An AI chatbot app development company with real enterprise deployments will save you 3–4 months of prompt engineering mistakes, conversation design errors, and fallback logic gaps, because they’ve made those mistakes on their own previous projects, not yours.

Final Word

The companies that win with AI in 2026 won’t be the ones who moved fastest. They’ll be the ones who evaluated honestly, prepared deliberately, and built from a stable foundation.

Running this five-phase evaluation before you brief any AI app development company in USA is not a delay. It is the investment. The teams that skip it spend the next 12 months paying for that shortcut.

Evaluate now. Build right. And when you’re ready, build with partners who’ve done this before.

At Tech Exactly, we work with product teams at exactly this stage, before a line of code is written. As an AI powered mobile app development company, we run structured pre-development readiness assessments that cover all five phases: problem validation, data audits, architecture reviews, team gap analysis, and governance mapping. We’ve helped startups, SMBs, and enterprise teams identify what’s actually blocking them and build a development roadmap that’s grounded in their real current state.

Whether you need a full readiness audit, help scoping your first AI feature, or an end-to-end development partner who doesn’t disappear after launch, that’s what we do.

If you’re at the stage where AI feels inevitable but the path forward isn’t clear yet, let’s start with a conversation.


Frequently Asked Questions

How long does an AI readiness evaluation take?

Four to six weeks for a thorough five-phase assessment. Phase 2 (data readiness) is typically the longest. Teams that compress it to two weeks almost always rediscover skipped gaps mid-development at a much higher cost.

How is AI readiness different from digital transformation?

Digital transformation is about modernizing processes broadly. AI readiness is more specific; it evaluates whether your data, architecture, team, and governance can handle probabilistic, model-driven systems. A product can be digitally mature and still score low on AI readiness.

When should we bring in an external partner instead of building in-house?

Bring in an external partner when:

- Your team has no prior experience shipping AI in production

- Your timeline can't absorb an internal learning curve

- Your use case requires specialized architecture like a production-grade AI chatbot or an ML recommendation engine

An experienced AI powered mobile app development company compresses timelines and brings patterns your team would otherwise learn the hard way.

Which phase do teams most often underestimate?

Phase 2 – data readiness. Teams consistently overestimate data quality. The most common discoveries: 30–40% of key events are untracked, data is siloed across systems, or PII restrictions block model training. Solvable, but only if caught before development starts.

Can we add AI to our product if we have limited proprietary data?

Yes, with the right approach. Limited proprietary data calls for:

- API-based LLM integrations (OpenAI, Gemini, Anthropic) over custom model training

- RAG (Retrieval-Augmented Generation) to ground outputs in your existing content

- Tight prompt engineering to compensate for the absence of fine-tuning

Match the AI method to where your data actually is, not where you project it will be.
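To make the RAG point concrete, here is a deliberately small retrieval step: find the most relevant snippets from your own content and place them in the prompt. Production RAG normally uses embeddings and a vector store; this sketch substitutes bag-of-words cosine similarity so it runs with the standard library alone, and sending the prompt to an LLM is left out. `retrieve` and `build_prompt` are illustrative names.

```python
import math
import re
from collections import Counter
from typing import List

def _vector(text: str) -> Counter:
    """Lowercased word counts; a stand-in for a real embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def retrieve(query: str, docs: List[str], k: int = 2) -> List[str]:
    """Return the k documents most similar to the query."""
    q = _vector(query)
    return sorted(docs, key=lambda d: _cosine(q, _vector(d)), reverse=True)[:k]

def build_prompt(query: str, docs: List[str]) -> str:
    """Ground the model in retrieved content instead of relying on fine-tuning."""
    context = "\n".join(f"- {d}" for d in retrieve(query, docs))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"
```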

Does the evaluation differ for existing products versus new AI-native builds?

Yes. For existing products, Phase 3 (infrastructure audit) carries the most weight; your architecture may not be AI-compatible. For AI-native builds, Phase 1 and Phase 2 are make-or-break early. In both cases, Phase 5 (governance) is non-negotiable before any public-facing AI output ships.

Pallabi Mahanta, Senior Content Writer at Tech Exactly, has over 5 years of experience in crafting marketing content strategies across FinTech, MedTech, and emerging technologies. She bridges complex ideas with clear, impactful storytelling.