Building AI-Powered SaaS Applications: Architecture, APIs & Deployment Strategy

Key Takeaways

- The global AI in SaaS market is projected to exceed $793.10 billion by 2029, growing at a CAGR of 14.71% over 2026-2030. (Statista)

- Over 80% of new SaaS apps launched in 2025 incorporated at least one AI-powered capability, up from just 20% in 2021.

- The most common AI architecture pattern in SaaS application development today is Retrieval-Augmented Generation (RAG), which combines LLMs with proprietary data to produce accurate, grounded outputs.

- Inference cost management is a first-class engineering concern, not an afterthought. Teams that get this wrong face margin collapse at scale.

- Partnering with an experienced AI app development company in the USA gives you architectural clarity, faster iteration, and production-grade infrastructure from day one.

What Is AI in SaaS?

AI in SaaS refers to the integration of artificial intelligence capabilities, such as natural language processing, predictive analytics, computer vision, and generative AI, directly into cloud-based software-as-a-service products. Rather than being a standalone feature, AI in modern SaaS apps is woven into the core product experience: it personalizes interfaces, automates decisions, surfaces insights, and enables products to improve with use.

Think of a traditional SaaS product as a reliable car; it gets you from A to B every single time. An AI-powered SaaS product is like a self-driving car that learns your daily commute, predicts traffic, and reroutes automatically. The engine underneath is the same cloud infrastructure, but the intelligence on top completely transforms what the product can do.

Unlike early AI features that were bolt-on additions (say, a chatbot in the corner or a basic recommendation widget), today’s AI in SaaS is architecturally different at its core. It is built around data pipelines, model inference layers, and continuous feedback loops that make the product smarter with every interaction.

Gartner’s John-David Lovelock, Distinguished VP Analyst, states: “AI infrastructure growth remains rapid despite concerns about an AI bubble, with spending rising across AI‑related hardware and software.”

This is precisely why founders are increasingly working with a dedicated AI-powered mobile app development company rather than generalist dev shops. The architectural decisions at this stage have long-term consequences.

What Does This Mean for SaaS Builders in 2026?

The barriers to building AI-powered SaaS have collapsed. Pre-trained foundation models, open APIs from providers like OpenAI and Anthropic, and cloud-native ML infrastructure mean that a small engineering team can now ship capabilities that previously required a dedicated research lab.

- Speed to AI: You don’t need to train models from scratch. APIs like GPT-4o, Claude, and Gemini give you world-class language intelligence as a single API call.

- Differentiation through data: The winning edge is no longer the model. It’s the proprietary data you feed it. Your RAG pipeline, fine-tuning strategy, and feedback loops are where defensibility is built.

- Expectations have shifted: Users who experience an AI-powered product from a competitor will not accept a non-AI alternative in the same category. AI is no longer a premium-tier feature but almost a baseline expectation.

What’s the Current State of AI-Powered SaaS?

The AI in SaaS market is redefining what software means. According to Grand View Research, the global AI in SaaS market is projected to exceed USD 3,497.26 billion by 2033, expanding at a CAGR of 30.6% from 2026 to 2033, driven by enterprise adoption across every vertical from healthcare and FinTech to logistics and legal.

IDC estimates enterprise spending on AI-enabled SaaS application development will surpass $30 billion annually by 2027.

What This Growth Actually Means

The numbers tell one story; the product landscape tells another. The most consequential shift is not the number of AI features being shipped; it is where AI sits in the product architecture. The companies pulling ahead are those who designed for AI from day one: proprietary data pipelines, model inference layers built into their core backend, and feedback loops that compound the value of the SaaS product over time.

The companies falling behind are those that are retrofitting AI onto products built for a pre-AI world, adding GPT integration as a feature, rather than rebuilding the product experience around intelligence.

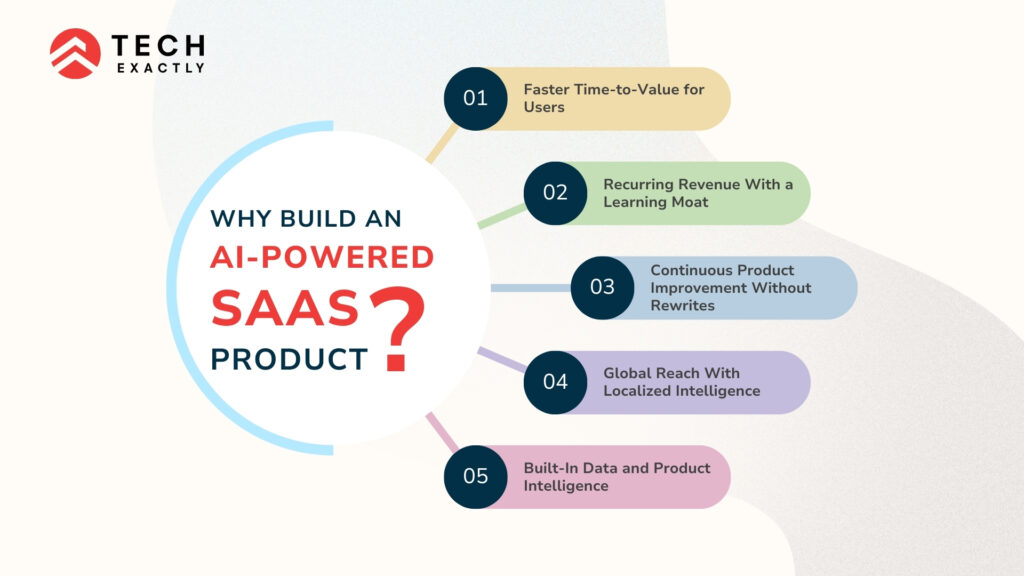

Why Build an AI-Powered SaaS Product?

The business case for building an AI-powered SaaS product has never been clearer. Whether you’re a founder designing from scratch or an engineering team modernizing an existing platform, embedding AI into your SaaS application development process is a survival requirement. The five advantages below explain why the most competitive products in every category are being built with AI at their core, not as an afterthought.

1. Faster Time-to-Value for Users

AI automates repetitive, high-friction workflows that previously required manual effort. Whether it’s document processing in a legal SaaS app, intake triage in a healthcare product, or lead scoring in a CRM, AI compresses the time between a user signing up and experiencing meaningful value. This is the single biggest driver of SaaS retention.

2. Recurring Revenue With a Learning Moat

Standard SaaS products are sticky because of switching costs. AI SaaS products are sticky because they get genuinely better the longer a customer uses them. The model learns their data, the recommendations reflect their patterns, and the outputs are calibrated to their context. This learning moat is extraordinarily difficult for a competitor to replicate, even if they build the same features.

3. Continuous Product Improvement Without Rewrites

AI products evolve through data, not code. When user behavior signals that a model’s outputs are missing the mark, you retrain or fine-tune; you do not rewrite your application logic. This decoupling of intelligence from software allows your SaaS product to improve on a faster cycle than traditional engineering can support.

4. Global Reach With Localized Intelligence

LLMs are inherently multilingual. An AI SaaS product can support dozens of languages from day one without dedicated localization engineering, dramatically reducing the SaaS cost of international expansion and removing language as a barrier to global product-market fit.

5. Built-In Data and Product Intelligence

An AI product generates rich signals about how users think, what they need, and where they get stuck. Prompt patterns, retrieval queries, feedback signals, and model correction data collectively form a product intelligence layer that informs roadmap decisions in ways traditional analytics simply cannot.

Why AI SaaS Is Dominating Software Models

AI SaaS is dominating for a simple structural reason: it creates compounding returns that non-AI SaaS cannot match. Every interaction generates data. Every data point improves the model. Every improvement increases the value delivered per user. Every increase in value drives retention and word-of-mouth. This flywheel — data → intelligence → value → growth → more data — is self-reinforcing in a way that no static software feature set can replicate.

From an investor perspective, SaaS apps built with AI at their core command higher multiples. Products with AI demonstrate higher NPS, lower churn, and stronger net revenue retention. As per sources, AI-native SaaS companies grow annual recurring revenue at a median rate of 100%, more than four times the 23% recorded by traditional SaaS businesses. They also retain and expand revenue more effectively, delivering net dollar retention of 132% compared to 108% for companies that added AI later. The gap isn’t explained by features alone. It comes down to architecture.

AI and SaaS: The Defining Combination of the Next Decade

The combination of SaaS delivery (subscription, cloud-hosted, continuously updated) with AI capabilities (learning, personalizing, automating) creates a product category unlike anything that existed before. This is also why the demand for an AI app development company in the USA with genuine SaaS architecture experience has grown sharply. Founders need partners like Tech Exactly, who understand both the product economics of SaaS application development and the infrastructure requirements of production AI systems, not just one or the other.

Core Architecture for AI SaaS Applications

Building AI into a SaaS product is not about bolting a chatbot onto your existing dashboard. It requires deliberate architectural decisions from day one. The best AI SaaS products are built on a layered architecture that keeps business logic, data pipelines, and AI inference cleanly separated.

Think of it like a modern office building. The ground floor handles all visitors: your users and API consumers. The middle floors are where work happens: application logic and AI reasoning. The top floors store everything: your data and trained models. Each floor is connected but independently maintained, which means you can renovate one without tearing down the rest.

| Layer | Responsibility | Key Technologies |
| --- | --- | --- |
| Presentation Layer | User interface, dashboards, real-time AI feedback | React, Next.js, Vue.js |
| Application Layer | Business logic, auth, multi-tenancy, REST/GraphQL APIs | Node.js, FastAPI, Django |
| AI/ML Layer | Model inference, fine-tuning pipelines, RAG, embeddings | Python, LangChain, HuggingFace |
| Data Layer | Vector stores, relational DB, event streaming, feature store | PostgreSQL, Pinecone, Kafka |

Multi-Tenancy Considerations

In a multi-tenant AI SaaS product, you must ensure that AI models, particularly those fine-tuned or RAG-augmented with tenant data, never bleed context across customer boundaries.

- Namespace isolation: Use tenant-specific namespaces in vector databases (Pinecone, Weaviate) to prevent cross-tenant data leakage in retrieval pipelines.

- Row-level security (RLS): Implement RLS policies in PostgreSQL or Supabase to enforce data access control at the database level for each tenant.

- Tiered inference queues: Separate model inference queues by subscription tier so that high-volume enterprise tenants don’t degrade performance for smaller accounts.
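To make the namespace isolation idea concrete, here is a minimal sketch in plain Python. It is not the Pinecone or Weaviate API: `TenantVectorStore`, its methods, and the sample tenant IDs are hypothetical names used only for illustration, and the vectors are toy two-dimensional values. The point it shows is that retrieval only ever searches the namespace of the tenant making the request.

```python
import math
from collections import defaultdict


class TenantVectorStore:
    """Toy in-memory vector store that keeps one namespace per tenant (illustrative only)."""

    def __init__(self):
        # Maps tenant_id -> list of (doc_id, vector) pairs.
        self._namespaces = defaultdict(list)

    def upsert(self, tenant_id, doc_id, vector):
        self._namespaces[tenant_id].append((doc_id, vector))

    def query(self, tenant_id, vector, top_k=3):
        # Only the caller's namespace is searched, so other tenants'
        # vectors can never appear in the results.
        candidates = self._namespaces.get(tenant_id, [])
        scored = [(self._cosine(vector, v), doc_id) for doc_id, v in candidates]
        scored.sort(reverse=True)
        return [doc_id for _, doc_id in scored[:top_k]]

    @staticmethod
    def _cosine(a, b):
        dot = sum(x * y for x, y in zip(a, b))
        norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
        return dot / norm if norm else 0.0


store = TenantVectorStore()
store.upsert("tenant_a", "a-contract", [1.0, 0.0])
store.upsert("tenant_b", "b-invoice", [1.0, 0.0])

# Both tenants stored an identical vector, but each query sees only its own namespace.
print(store.query("tenant_a", [1.0, 0.0]))
```

In a real deployment the tenant ID should come from the authenticated session on the backend, never from a value the client can set freely.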

AI APIs: Choosing and Integrating the Right Ones

Rather than training models from scratch, most SaaS application development teams integrate pre-trained APIs for core capabilities and layer proprietary fine-tuning or RAG on top for differentiation.

One of the fastest-growing integration categories is conversational AI. Working with a specialized AI chatbot app development company like Tech Exactly matters here because production chatbot systems inside SaaS products require far more than a simple API call. They need context management, memory persistence, retrieval pipelines, fallback handling, and multi-turn conversation state, all of which must be built with SaaS-grade reliability.

| API Category | Popular Options | Best For |
| --- | --- | --- |
| Large Language Models | OpenAI GPT-4o, Anthropic Claude, Gemini 1.5 | Summarization, chat, code generation, analysis |
| Embeddings & Semantic Search | OpenAI Embeddings, Cohere Embed, Jina AI | RAG pipelines, semantic search, recommendations |
| Vision & Multimodal | GPT-4 Vision, Google Vision AI, Replicate | Document OCR, image classification, UI inspection |
| Voice & Audio | Whisper API, ElevenLabs, AssemblyAI | Transcription, voice UI, meeting summaries |
| Agentic Frameworks | LangChain, LlamaIndex, AutoGen | Multi-step reasoning, tool use, agent workflows |

API Integration Best Practices for SaaS Apps

- Abstract behind an internal gateway: Never call OpenAI or Anthropic directly from your frontend. Route all AI calls through a backend gateway that handles retries, rate limiting, logging, and cost attribution per tenant.

- Build fallback logic: Design your AI layer to gracefully fall back to an alternative provider if your primary API goes down or exceeds quota, critical for maintaining SLAs at enterprise scale.

- Cache aggressively with semantic caching: Tools like GPTCache allow you to skip redundant API calls for near-identical prompts, reducing inference costs by 30–60% in production.
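The semantic caching practice above can be sketched in a few lines. This is not the GPTCache API; it is a simplified stand-in that assumes a bag-of-words vector in place of a real embedding model, and `SemanticCache`, `fake_llm`, and `cached_completion` are hypothetical names. It shows the core idea: compare the new prompt to previously answered prompts and reuse the stored response when similarity clears a threshold.

```python
import math
import re
from collections import Counter


def embed(text):
    """Stand-in embedding: a bag-of-words count vector (an assumption, not a real model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


class SemanticCache:
    def __init__(self, threshold=0.9):
        self.threshold = threshold
        self._entries = []  # list of (embedding, response) pairs

    def get(self, prompt):
        # Return the best-matching cached response, or None on a miss.
        query = embed(prompt)
        best_score, best_response = 0.0, None
        for vector, response in self._entries:
            score = cosine(query, vector)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None

    def put(self, prompt, response):
        self._entries.append((embed(prompt), response))


calls = []  # records every prompt that actually reached the (fake) model


def fake_llm(prompt):
    calls.append(prompt)
    return f"answer to: {prompt}"


def cached_completion(cache, prompt):
    hit = cache.get(prompt)
    if hit is not None:
        return hit
    response = fake_llm(prompt)
    cache.put(prompt, response)
    return response


cache = SemanticCache(threshold=0.8)
first = cached_completion(cache, "What is our refund policy?")
second = cached_completion(cache, "what is our refund policy")  # near-identical, served from cache
third = cached_completion(cache, "How do I reset my password?")  # unrelated, goes to the model
```

The threshold is the main tuning knob: set it too low and users get answers to questions they did not ask, set it too high and the cache rarely hits. In a multi-tenant product the cache would also need to be keyed by tenant.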

💡 Your AI API gateway is like a currency exchange desk; it accepts requests in many forms, routes them to the best provider at the best rate, and returns a clean, standardized output to your application layer.
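A gateway of this kind can be sketched as follows. This is an illustration under stated assumptions, not a production design: `AIGateway`, `ProviderError`, and the two provider functions are hypothetical, the providers are plain Python callables rather than real SDK clients, and token usage is estimated by word count purely to show per-tenant attribution.

```python
from collections import defaultdict


class ProviderError(Exception):
    """Raised by a provider callable when a request fails (hypothetical error type)."""


class AIGateway:
    def __init__(self, providers, max_retries=2):
        # providers is an ordered list of (name, callable); the first entry is the primary.
        self.providers = providers
        self.max_retries = max_retries
        self.tokens_by_tenant = defaultdict(int)

    def complete(self, tenant_id, prompt):
        for name, call in self.providers:
            for _attempt in range(self.max_retries):
                try:
                    text = call(prompt)
                except ProviderError:
                    continue  # retry this provider, then fall through to the next one
                # Crude usage estimate (word count) attributed to the calling tenant.
                self.tokens_by_tenant[tenant_id] += len(prompt.split()) + len(text.split())
                return {"provider": name, "text": text}
        raise ProviderError("all providers failed")


def flaky_primary(prompt):
    # Simulates a primary provider that is down or over quota.
    raise ProviderError("quota exceeded")


def stable_secondary(prompt):
    return "summary ready"


gateway = AIGateway([("primary", flaky_primary), ("secondary", stable_secondary)])
result = gateway.complete("tenant_a", "Summarize this ticket")
print(result["provider"], gateway.tokens_by_tenant["tenant_a"])
```

Because the application layer only ever sees the standardized dictionary, swapping or reordering providers does not require changes outside the gateway.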

Related reading → How to Integrate AI into Your App: Full Guide

Tech Stack for AI-Powered SaaS Applications

There is no universal stack, but there are proven combinations that handle scale, observability, and AI integration elegantly. The following is what the Tech Exactly team recommends for production-grade SaaS application development in 2026.

| Category | Technology | Purpose |
| --- | --- | --- |
| Frontend | Next.js 14 (React) | SSR, streaming UI, fast AI response display |
| Backend API | FastAPI (Python) | Async-first, ideal for ML-adjacent services |
| Auth & Tenancy | Auth0 / Clerk + RLS | Secure per-tenant access control |
| AI Orchestration | LangChain + LlamaIndex | Prompt chaining, RAG pipelines, agents |
| LLM Provider | OpenAI GPT-4o / Claude | Core language intelligence |
| Vector Database | Pinecone / Weaviate | Embedding storage, semantic retrieval |
| Relational Database | PostgreSQL (Supabase) | Structured data, RLS, real-time subscriptions |
| Event Streaming | Apache Kafka / AWS Kinesis | Real-time ML feature pipelines |
| Model Serving | AWS SageMaker / Modal | Scalable custom model inference |
| Caching | Redis + GPTCache | Session management, semantic prompt caching |
| Observability | LangSmith + Datadog | LLM tracing, cost monitoring, alerting |
| Deployment | AWS/GCP + Kubernetes | Container orchestration, autoscaling |
| CI/CD | GitHub Actions + ArgoCD | Automated testing, GitOps deployment |

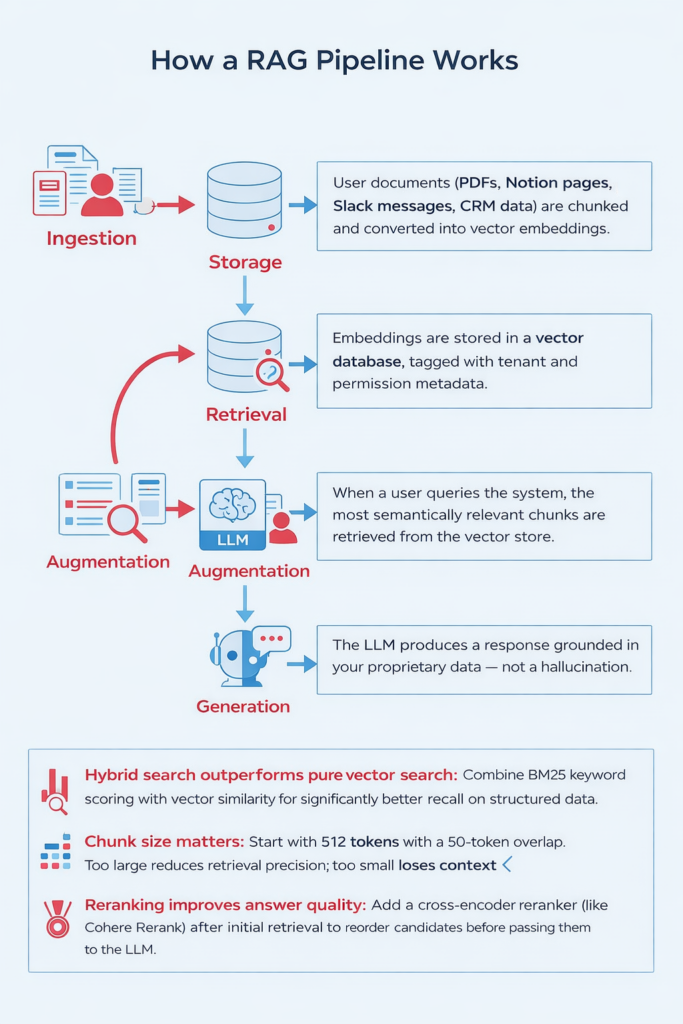

Building the RAG Pipeline: The Heart of Most AI SaaS Products

Retrieval-Augmented Generation (RAG) is the most important architectural pattern in enterprise AI in SaaS today. Instead of relying on what an LLM was trained on months ago, RAG lets you inject real-time, tenant-specific context into every AI response, dramatically improving accuracy and reducing hallucination on domain-specific queries.
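The retrieve-then-generate flow can be illustrated with a small, self-contained sketch. Everything here is an assumption made for the example: the `DOCUMENTS` dictionary stands in for a vector database, the bag-of-words `embed` function stands in for a real embedding model, and the final prompt would be sent to whichever LLM provider you use. Only the shape of the pipeline is the point.

```python
import math
import re
from collections import Counter


def embed(text):
    """Stand-in embedding: bag-of-words counts (a real pipeline would call an embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


# Hypothetical per-tenant knowledge base; in production this lives in a vector store.
DOCUMENTS = {
    "tenant_a": [
        "Refunds are issued within 14 days of purchase.",
        "Support is available on weekdays from 9 to 5.",
    ],
}


def retrieve(tenant_id, question, top_k=1):
    # Step 1: rank the tenant's documents by similarity to the question.
    query = embed(question)
    docs = DOCUMENTS.get(tenant_id, [])
    ranked = sorted(docs, key=lambda d: cosine(query, embed(d)), reverse=True)
    return ranked[:top_k]


def build_prompt(tenant_id, question):
    # Step 2: inject the retrieved context into the prompt sent to the LLM.
    context = "\n".join(retrieve(tenant_id, question))
    return (
        "Answer using only the context below.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


print(build_prompt("tenant_a", "When are refunds issued?"))
```

Instructing the model to answer only from the supplied context is what grounds the response; the quality of the retrieval step determines how often that context is actually the right one.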

The SaaS deployment strategy for an AI product has more moving parts than a traditional web app. You’re not just deploying code, you’re managing models, embeddings, inference endpoints, and data pipelines simultaneously. Getting SaaS deployment right from the start is what separates teams that scale cleanly from those that firefight production incidents at the worst possible moment.

The Three-Environment Rule

- Development: Local Docker Compose with mocked AI responses and a seeded vector DB, fast iteration without burning API credits.

- Staging: Full cloud infrastructure mirroring production, real LLM calls at reduced scale, with LangSmith tracing enabled on every prompt chain.

- Production: Kubernetes on EKS or GKE with horizontal pod autoscaling, blue/green deployments for zero-downtime model updates, and Datadog APM for full observability.
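One way to keep the three environments honest is to encode their differences in a single typed config, as in the sketch below. The field names, replica counts, and the `make_llm_client` helper are all hypothetical, and enabling LLM tracing in production is an assumption on top of what is described above. The useful property is that development can never accidentally call a paid API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EnvConfig:
    name: str
    use_mock_llm: bool      # development uses canned responses instead of real API calls
    llm_tracing: bool       # prompt-chain tracing switch (tool choice is up to you)
    min_replicas: int       # autoscaling bounds; the numbers below are placeholders
    max_replicas: int


ENVIRONMENTS = {
    "development": EnvConfig("development", use_mock_llm=True, llm_tracing=False,
                             min_replicas=1, max_replicas=1),
    "staging": EnvConfig("staging", use_mock_llm=False, llm_tracing=True,
                         min_replicas=1, max_replicas=2),
    "production": EnvConfig("production", use_mock_llm=False, llm_tracing=True,
                            min_replicas=3, max_replicas=20),
}


def make_llm_client(config):
    """Return a callable that takes a prompt and returns text."""
    if config.use_mock_llm:
        # No network call and no API credits spent in local development.
        return lambda prompt: "[mocked response]"

    def real_client(prompt):
        # Placeholder: wire up your provider SDK here.
        raise NotImplementedError("connect a real LLM provider")

    return real_client


client = make_llm_client(ENVIRONMENTS["development"])
print(client("Summarize this document"))
```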

Model Deployment Patterns

| Pattern | When to Use | Trade-offs |
| --- | --- | --- |
| API-only (3rd party LLM) | Early-stage, <$50k ARR, fast iteration | Higher per-call cost, vendor dependency |
| Fine-tuned hosted model | Domain-specific accuracy needed, mid-stage | Training cost, better latency |
| Self-hosted open-source LLM | High volume, strict data privacy | GPU infra complexity |
| Hybrid (API + self-hosted) | Scale stage, cost-performance balance | Highest flexibility, most complex |

Managing AI Infrastructure Costs

SaaS cost management is one of the most under-discussed disciplines in AI product development. Inference costs can spiral quickly, and the companies that scale profitably treat SaaS cost as a first-class engineering concern from day one, not an afterthought discovered during a quarterly cost review.

- Per-tenant usage budgets: Track token consumption per tenant and per feature; enforce soft limits before they reach hard quotas.

- Route by complexity: Not every task needs GPT-4o. Send classification, extraction, and short-form tasks to faster, cheaper models (GPT-4o-mini, Claude Haiku) and reserve premium models for high-stakes reasoning.

- Alert on cost anomalies: A rogue prompt loop or a viral feature can generate thousands of dollars in unexpected API spend overnight. Set monitors on AI cost metrics in Datadog.
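The three controls above can be sketched together in a few small functions. The model names (`small-model`, `premium-model`), the task categories, the limits, and the three-times-baseline anomaly rule are all placeholder assumptions chosen for the example; real thresholds depend on your pricing and traffic.

```python
from dataclasses import dataclass

# Task types assumed cheap enough for a smaller model (illustrative list).
CHEAP_TASKS = {"classification", "extraction", "short_form"}


def choose_model(task_type):
    """Route simple tasks to a cheaper model and everything else to the premium one."""
    return "small-model" if task_type in CHEAP_TASKS else "premium-model"


@dataclass
class TenantBudget:
    soft_limit: int  # tokens at which the tenant is warned
    hard_limit: int  # tokens beyond which requests are refused
    used: int = 0

    def record(self, tokens):
        """Add usage and return 'ok', 'warn', or 'blocked'."""
        if self.used + tokens > self.hard_limit:
            return "blocked"  # request refused; usage is not incremented
        self.used += tokens
        return "warn" if self.used >= self.soft_limit else "ok"


def is_cost_anomaly(daily_costs, today, factor=3.0):
    """Flag today's spend if it exceeds `factor` times the recent daily average."""
    if not daily_costs:
        return False
    baseline = sum(daily_costs) / len(daily_costs)
    return today > factor * baseline


budget = TenantBudget(soft_limit=800, hard_limit=1000)
print(budget.record(500), budget.record(400), budget.record(200))
print(choose_model("classification"), choose_model("contract_review"))
print(is_cost_anomaly([10.0, 12.0, 11.0], today=50.0))
```

The soft limit exists so that account managers or the tenant's own admins hear about heavy usage before the hard quota starts rejecting requests.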

Security, Compliance & Responsible AI in SaaS

AI adds a new threat surface to your SaaS apps. Beyond standard OWASP concerns, you now need to defend against prompt injection attacks, data exfiltration through model outputs, and context leakage across multi-tenant boundaries. IBM Security’s 2025 report found that 43% of enterprises reported AI-specific security incidents in their SaaS stack last year.

- Prompt hardening: Validate and sanitize all user inputs before they reach your LLM. Establish strict behavioral guardrails in your system prompt, and enforce structured output schemas.

- Output filtering: Apply output validation layers (Guardrails AI, NeMo Guardrails) to catch PII leakage, off-policy responses, or harmful content before they reach end users.

- Data residency and compliance: For GDPR and HIPAA use cases, ensure user data sent to LLM APIs is either anonymized or routed through providers with appropriate Data Processing Agreements in place.
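As a rough illustration of output filtering and schema enforcement, the sketch below validates a model's JSON response and redacts two kinds of PII. It does not use the Guardrails AI or NeMo Guardrails APIs; the regular expressions, the `REQUIRED_FIELDS` schema, and the function names are assumptions for the example, and regex-based PII detection is far from exhaustive.

```python
import json
import re

# Simplified patterns for illustration; real PII detection needs broader coverage.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

# Hypothetical schema the model is instructed to follow.
REQUIRED_FIELDS = {"answer": str, "confidence": float}


def redact_pii(text):
    """Replace email addresses and US SSN-shaped numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return SSN_RE.sub("[SSN]", text)


def parse_structured_output(raw):
    """Return the validated, redacted dict, or None if the output is off-schema."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None
    if not isinstance(data, dict):
        return None
    for field, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(field), expected_type):
            return None
    data["answer"] = redact_pii(data["answer"])
    return data


print(redact_pii("Contact jane@example.com, SSN 123-45-6789"))
print(parse_structured_output('{"answer": "Mail bob@corp.io", "confidence": 0.9}'))
print(parse_structured_output("Sure! Here is your answer..."))
```

Returning `None` for anything off-schema gives the application layer a single, explicit failure case to handle, for example by retrying or showing a safe fallback message.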

Related reading → How Healthcare Startups Can Build Regulatory-Compliant Apps Without Slowing Innovation

Case Studies: AI SaaS Products Built by Tech Exactly

Smart Fitness App with Personalized AI Coaching

A fitness platform moved from generic workout templates to a full AI-driven personal trainer experience. By integrating an LLM-powered coaching layer trained on user performance data, the app generates adaptive weekly programs, predicts injury risk, and delivers natural language feedback in real time. Built by Tech Exactly, an AI-powered mobile app development company, the architecture uses FastAPI, Pinecone for exercise history embeddings, and GPT-4 for plan generation and conversational coaching.

→ Read the full case study: Smart Fitness Workout App That Acts Like Your Personal Trainer

AI-Powered Nature Identification App for 1M+ Global Users

A nature and wildlife platform needed to accurately recognize thousands of plant, bird, and insect species from a single user-uploaded photo at scale, across varied lighting conditions and geographies. Tech Exactly built a computer vision pipeline using a fine-tuned image classification model on scalable cloud inference infrastructure, with a mobile-first frontend optimized for low-bandwidth environments. The AI-powered mobile app handled over one million global users without latency degradation, turning a passive nature guide into a real-time intelligent identification companion.

→ How We Built a Scalable AI-Powered Nature Identification App for 1M+ Global Users

Challenges in AI SaaS Development (2026)

Advanced Data Security & AI-Specific Risk

Prompt injection, model inversion attacks, and cross-tenant context leakage are attack vectors that did not exist in traditional SaaS. Security models need to evolve to account for the probabilistic, generative nature of LLM outputs.

Multi-Tenancy & Data Isolation Complexity

Ensuring that fine-tuned or RAG-augmented AI strictly respects tenant boundaries is significantly harder than traditional row-level security. Namespace isolation in vector stores and inference-level context controls must be designed proactively. This is one of the most underestimated challenges in SaaS application development at the enterprise level.

Subscription Churn & Value Retention

AI features that impress in demos but fail to deliver consistent daily value accelerate churn rather than reducing it. The challenge is getting the feedback loop right, so the AI visibly improves over the customer’s lifecycle.

Cloud Cost & AI Infrastructure Management

The economics of AI SaaS apps are fundamentally different from traditional SaaS. Inference costs scale with usage in a way that hosting costs do not. Founders who don’t model SaaS cost into their unit economics early often face margin compression at exactly the moment they achieve growth.

Scalability & Performance at Inference Time

Users expect AI responses in under two seconds. Achieving consistent sub-2s p95 latency at scale requires a combination of caching, model routing, horizontal scaling, and careful prompt engineering, none of which are solved out of the box.
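Checking a p95 target is simple arithmetic once latency samples are collected. The sketch below uses the nearest-rank percentile method, which is one of several common definitions; monitoring tools may interpolate differently. The function names and the sample values are made up for illustration.

```python
def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample with at least pct% of values at or below it."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    # Integer ceiling of pct * n / 100, avoiding floating-point rounding issues.
    rank = max(1, -(-pct * len(ordered) // 100))
    return ordered[rank - 1]


def meets_latency_target(samples_ms, target_ms=2000, pct=95):
    return percentile(samples_ms, pct) <= target_ms


# Twenty evenly spread samples from 100 ms to 2000 ms.
steady = list(range(100, 2100, 100))
# Mostly fast responses with a slow tail: 2 of 20 requests take 5 seconds.
slow_tail = [500] * 18 + [5000] * 2

print(percentile(steady, 95), meets_latency_target(steady))
print(percentile(slow_tail, 95), meets_latency_target(slow_tail))
```

The second data set has a healthy average of 950 ms yet still misses the target, which is why the tail percentile, not the mean, is the number to watch.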

Related reading → Generative AI in Healthcare Development

Future of AI-Powered SaaS: What’s Next?

The next three years will see AI in SaaS evolve from assistive to agentic. Rather than AI that responds to queries, the next generation of SaaS products will include autonomous AI agents that proactively execute multi-step workflows, coordinate across tools, and make decisions within defined operating boundaries, without requiring a user prompt for every action.

Key trends shaping this shift:

- Agentic workflows: Products like Salesforce Agentforce and emerging vertical SaaS platforms are embedding autonomous agents that handle CRM updates, scheduling, document generation, and escalation logic without human intervention.

- Conversational AI as infrastructure: The role of the AI chatbot app development company is expanding from building chat interfaces to building full conversational infrastructure: memory layers, persona management, multi-modal input handling, and stateful session orchestration across the entire product surface.

- On-device AI: For mobile and desktop SaaS apps, edge inference using smaller quantized models will enable offline-capable AI features and eliminate the latency of round-tripping to cloud inference.

- Smarter SaaS deployment: Next-generation SaaS deployment pipelines will include automated model version management, shadow testing of new LLM versions in production traffic, and AI-assisted rollback decisions, bringing the same rigor to model releases that CI/CD brought to code.

- AI-native pricing models: Usage-based pricing tied directly to AI outcomes (tasks completed, time saved, errors prevented) will replace seat-based pricing for a growing category of AI SaaS products.

→ Agentic AI in Healthcare: How Autonomous Systems Are Transforming Patient Care

At Tech Exactly, we help founders navigate every stage of this shift, from architecture decisions and API integration to deployment and scale. If you’re building an AI-powered SaaS product and want a technical partner who has done it before, get in touch with us. We’d love to hear what you’re working on.

FAQ

AI-powered SaaS refers to cloud-based software products that integrate machine learning and AI capabilities, such as natural language processing, predictive analytics, or computer vision, into their core product experience, rather than as optional add-on features.

SaaS cost varies significantly by scope. An MVP with third-party LLM API integration can be built for $30,000–$80,000. A production-grade AI SaaS with custom fine-tuning, RAG pipelines, and enterprise-grade infrastructure typically ranges from $150,000–$500,000+ depending on complexity and team composition.

A proven combination is Next.js (frontend), FastAPI (backend), PostgreSQL + Pinecone (data), LangChain + OpenAI/Claude (AI layer), deployed on AWS or GCP with Kubernetes. This stack balances developer velocity, AI-native architecture, and production scalability.

RAG (Retrieval-Augmented Generation) is a pattern where your AI retrieves relevant context from your proprietary data before generating a response. It dramatically improves accuracy, reduces hallucination, and allows you to build SaaS apps grounded in your users' actual data, without expensive model retraining.

A US-based AI development partner brings timezone-compatible collaboration, familiarity with US compliance requirements (HIPAA, SOC 2, CCPA), and typically deeper experience with the enterprise SaaS market. For products targeting US enterprise buyers, this alignment often accelerates sales cycles in addition to SaaS application development itself.

Beyond coding ability, look for experience with production conversational AI, specifically context window management, multi-turn memory, RAG integration for grounded responses, fallback handling, and latency optimization. A chatbot that works in a demo but fails in production is one of the most common AI project failure modes.

Implement per-tenant token budgets, use model routing to assign simpler tasks to cheaper models, apply semantic caching to reduce redundant API calls, and set up cost anomaly alerting in your observability stack from day one.

Yes, but it requires additional architectural care around data residency, output filtering, auditability, and compliance with regulations like HIPAA, GDPR, and SOC 2. These constraints are solvable; they simply need to be designed in from the start, not bolted on after launch.

Pallabi Mahanta, Senior Content Writer at Tech Exactly, has over 5 years of experience in crafting marketing content strategies across FinTech, MedTech, and emerging technologies. She bridges complex ideas with clear, impactful storytelling.