AI Readiness in 2026: Is Your Organization Actually Prepared to Build and Scale AI?

Key Takeaways

- Gartner predicts that through 2026, organizations will abandon 60% of AI projects unsupported by AI-ready data. Readiness is the deciding factor between ROI and an expensive write-off.

- Only 34% of companies are deeply transforming their business with AI, while 37% are still running surface-level experiments as competitors build structural advantages.

- More than 80% of organizations report no tangible EBIT impact from generative AI investments.

- Understanding your organization's AI readiness before commissioning any AI app development build is the single highest-ROI decision in the entire project lifecycle.

AI readiness is an organization’s measurable capacity to adopt, deploy, and scale artificial intelligence across its operations. It is assessed across seven dimensions: strategic alignment, data quality, technology infrastructure, people and skills, governance, culture, and adaptability. An organization is considered AI-ready when it can move from pilots to production systems without structural failure and sustain those systems over time.

Why AI Readiness Has Become the Question Every Leadership Team Is Asking

The investment numbers in 2026 are extraordinary, and they tell a story that goes well beyond technology enthusiasm. Gartner estimates total worldwide AI spending will reach over $2 trillion in 2026, rising to $3.3 trillion by 2029. Inside that acceleration is an uncomfortable reality: organizations are spending at record levels while simultaneously failing to capture the value those investments are supposed to deliver.

MIT’s research found 95% of enterprise AI pilots delivered zero measurable P&L impact. The pattern is consistent enough to have a name: the AI readiness gap. It’s the distance between an organization that has access to AI tools and one that is structurally prepared to make them work. Every AI app development initiative, every engagement with an AI app development company in USA, and every internal AI build lands somewhere on that gap. Readiness determines which side you fall on.

One industry survey reports that 23% of organizations are scaling agentic AI in at least one function, with another 39% actively experimenting but not yet scaling.

The gap between those experimenting and those scaling is, in almost every case, a readiness gap. Understanding what AI readiness means for your specific organization is the first step toward closing it.

What Is AI Readiness for Businesses

What is AI readiness, precisely? Not in the abstract, but in the terms that matter for a CTO deciding whether to commission a build, a COO evaluating operational AI deployment, or a founder exploring AI business opportunities that require production-grade AI to realize.

AI readiness is the degree to which an organization has the strategic clarity, data infrastructure, technical capability, human skills, and governance frameworks required to deploy AI at scale. A company might have excellent cloud infrastructure but no unified data layer. Another might have enthusiastic leadership but no documented AI governance policy. Understanding which dimensions are the bottleneck is precisely what a readiness assessment reveals.

This distinction matters enormously when deciding whether to commission an AI app development project, bring in an AI powered mobile app development company like Tech Exactly, or build internal AI capability from scratch. Without a readiness baseline, those decisions are made blindly.

The Seven Dimensions That Determine Whether AI Works in Your Organization

Strategic Alignment: Is there a specific, measurable business problem AI is solving or just a general mandate to “do more with AI”?

Data Readiness: Is your data clean, centralized, consistently structured, and accessible enough for models to learn from reliably?

Technology Infrastructure: Can your existing tech stack support AI workloads: APIs, compute, integration layers, and observability tools?

People and Skills: Does your team have the AI literacy, data engineering depth, and change management capacity to operate AI in production?

Governance and Ethics: Do you have documented policies covering AI accountability, bias monitoring, data privacy, and compliance?

Culture and Change Readiness: Is your organization willing to adapt workflows around AI at the team level, where implementation actually happens?

Measurement and Adaptability: Can you define, measure, and iterate on AI outcomes with feedback loops built into your deployment process?

▶️Must read → How to Integrate AI into Your App: Full Guide

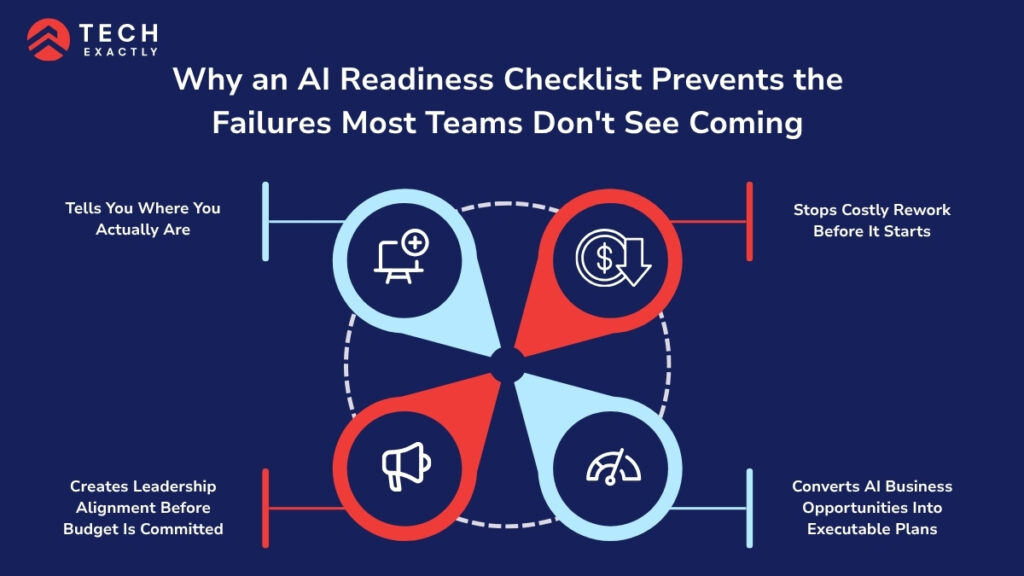

Why an AI Readiness Checklist Prevents the Failures Most Teams Don’t See Coming

Most organizations that struggle with AI aren’t struggling because they chose the wrong model or the wrong vendor. They’re struggling because they started building before they understood their own starting point. A readiness checklist surfaces the data gaps, skill deficits, and infrastructure weaknesses that derail projects later, when they’re far more expensive to fix.

1. Tells You Where You Actually Are

63% of organizations either do not have or are unsure whether they have the right data management practices for AI. A readiness checklist forces an honest inventory that reveals these gaps before they become costly surprises mid-build.

We’ve seen this pattern at Tech Exactly. A client came to us wanting to build an AI-powered customer segmentation tool. Before scoping the build, we ran a data readiness audit. What we found: three years of customer records across four disconnected systems, no unified customer ID, and no documented data owner. Any AI model we built on that data would have been confidently wrong. The audit added two weeks. It saved the entire project and the client’s budget.

2. Stops Costly Rework Before It Starts

Building on unprepared infrastructure doesn’t just produce poor results; it creates technical debt that compounds with every subsequent AI layer. Most such AI project failures trace back to data quality and infrastructure issues that were never assessed.

Organizations that buy AI from specialized vendors succeed at roughly double the rate of those building internally: 67% versus 33%.

Choosing the right AI app development company in USA after a readiness assessment consistently produces better outcomes than choosing one before it.

3. Creates Leadership Alignment Before Budget Is Committed

AI initiatives without executive alignment don’t stall at the data layer. They stall at the budget review. A readiness assessment creates a shared vocabulary and picture of where the organization actually stands, replacing vague conversations about “investing in AI” with specific, evidence-based decisions about what needs to be addressed first.

4. Converts AI Business Opportunities Into Executable Plans

Only 34% of companies are deeply transforming their business with AI, while 37% are still operating at a surface level. The organizations converting AI business opportunities into measurable outcomes are the ones that understand their readiness level before committing to a build, not after discovering the gaps mid-project. Identifying the opportunity and being structurally ready to execute it are two very different things.

▶️You can read: How We Built a Scalable AI-Powered Nature Identification App for 1M+ Global Users

The AI Readiness Checklist: Seven Dimensions, One Honest Assessment

Use this before engaging any AI app development company in USA, hiring an AI chatbot app development company, or beginning an internal build.

1. Strategy and Leadership Alignment

☐ Have we defined specific business problems AI is expected to solve?

☐ Is there a named executive sponsor with clear accountability?

☐ Do we have a documented AI strategy connected to measurable business outcomes?

☐ Has leadership aligned on what success looks like before the build begins?

2. Data Readiness

☐ Is our data centralized or accessible from a single integration layer?

☐ Have we assessed data quality – completeness, consistency, recency?

☐ Do we have a documented data governance policy with clear ownership?

☐ Have we identified which datasets are AI-ready and which need remediation?

3. Technology and Infrastructure

☐ Do our core systems have modern APIs available for AI integration?

☐ Is our cloud infrastructure scalable enough for AI inference workloads?

☐ Have we evaluated integration complexity between existing systems and AI services?

☐ Do we have observability tools capable of monitoring AI outputs in production?

4. People and Skills

☐ Have we assessed AI literacy across the teams that will use the system?

☐ Do we have data engineering capability in-house or through a partner?

☐ Have we identified skill gaps and a plan to address them before deployment?

☐ Is there a change management plan for the teams most affected?

5. Governance and Risk

☐ Do we have a documented AI governance framework?

☐ Have we defined bias monitoring and model review protocols?

☐ Is there a clear incident response plan for AI system failures?

☐ Have we mapped AI use cases to applicable regulatory requirements?

6. Culture and Change Readiness

☐ Has leadership communicated a clear, honest narrative about why AI is being adopted?

☐ Are there forums for teams to raise concerns about AI adoption?

☐ Have we addressed the job displacement concern directly and publicly?

☐ Is there a plan for recognizing and rewarding AI adoption at the team level?

7. Measurement and Adaptability

☐ Have we defined success metrics for our AI initiative before launch?

☐ Is there a feedback loop for capturing real-world model performance data?

☐ Do we have a scheduled review cadence for AI system outputs?

☐ Is the team empowered to iterate and improve based on what the data shows?
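For teams that want to turn the checklist into something they can track quarter to quarter, the sketch below scores each dimension as the share of items answered "yes" and lists the dimensions that fall below a threshold, weakest first. It is a hypothetical scoring scheme, not a standard methodology: the equal weighting of questions, the `DimensionResult` and `find_bottlenecks` names, the sample answers, and the 0.75 threshold are all illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class DimensionResult:
    """Yes/no checklist answers for one readiness dimension (hypothetical scheme)."""
    name: str
    answers: list[bool]  # one entry per checklist question

    @property
    def score(self) -> float:
        # Share of questions answered "yes"; every question weighs the same.
        return sum(self.answers) / len(self.answers)


def find_bottlenecks(results: list[DimensionResult], threshold: float = 0.75) -> list[str]:
    """Return names of dimensions scoring below the threshold, weakest first."""
    weak = sorted((r for r in results if r.score < threshold), key=lambda r: r.score)
    return [r.name for r in weak]


if __name__ == "__main__":
    # Illustrative answers only, in checklist order.
    results = [
        DimensionResult("Strategy and Leadership Alignment", [True, True, True, False]),
        DimensionResult("Data Readiness", [False, True, False, False]),
        DimensionResult("Technology and Infrastructure", [True, True, False, True]),
        DimensionResult("People and Skills", [True, False, False, True]),
        DimensionResult("Governance and Risk", [False, False, True, False]),
        DimensionResult("Culture and Change Readiness", [True, True, True, True]),
        DimensionResult("Measurement and Adaptability", [True, False, True, True]),
    ]
    for r in results:
        print(f"{r.name}: {r.score:.0%}")
    print("Start remediation with:", ", ".join(find_bottlenecks(results)))
```

A low score doesn't mean "don't build"; it indicates where remediation should start before a partner scopes the project.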

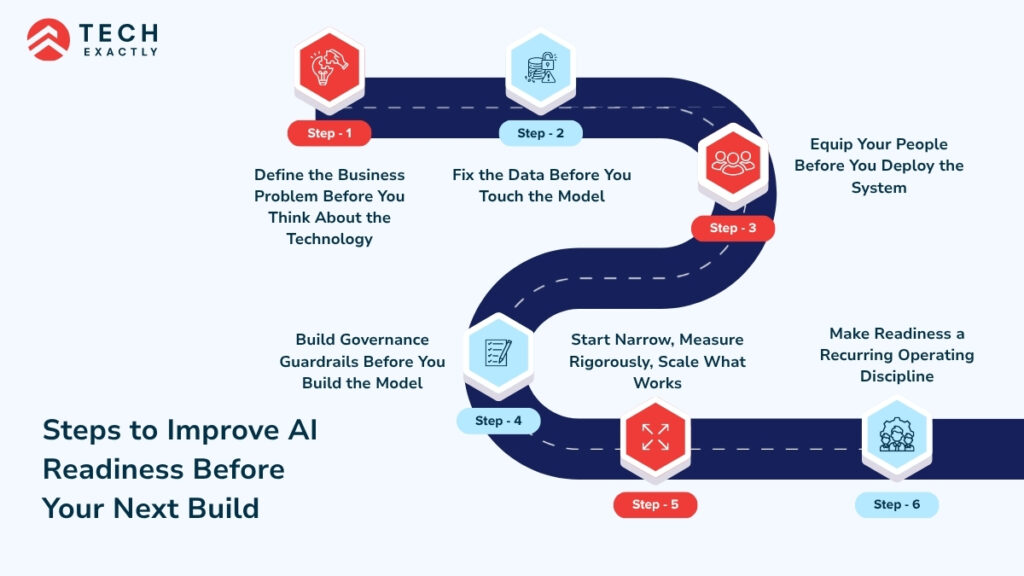

Steps to Improve AI Readiness Before Your Next Build

Step 1: Define the Business Problem Before You Think About the Technology

Before evaluating any AI tool, researching how to build an AI model for your use case, or engaging an AI chatbot development company, your leadership team needs to agree on the specific problem being solved. Vague goals, such as "we want to be more innovative," produce vague AI systems. Specific goals, such as "we want to reduce customer support resolution time by 60% using automated tier-1 handling," produce systems you can measure, improve, and scale.
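A specific goal is valuable precisely because it can be checked. As a minimal sketch, assuming resolution time is tracked in hours and using purely illustrative figures, the 60% resolution-time target becomes a simple comparison against a measured baseline. The function names here are hypothetical.

```python
def percent_reduction(baseline: float, current: float) -> float:
    """Percentage drop from baseline to current; positive means improvement."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - current) * 100 / baseline


def meets_target(baseline: float, current: float, target_pct: float) -> bool:
    """True when the measured reduction reaches the agreed target."""
    return percent_reduction(baseline, current) >= target_pct


if __name__ == "__main__":
    # Illustrative figures only: median resolution time before and during a pilot.
    baseline_hours = 10.0
    pilot_hours = 3.5
    print(f"Reduction: {percent_reduction(baseline_hours, pilot_hours):.1f}%")
    print("60% target met:", meets_target(baseline_hours, pilot_hours, 60))
```

The important part is not the arithmetic but the baseline: without measuring resolution time before the build, there is nothing to compare the pilot against.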

Step 2: Fix the Data Before You Touch the Model

The most common reason AI projects fail is the data feeding them. Conduct a full data audit before beginning any AI app development build: what do you have, where does it live, how consistent is it, and who owns it? This is unglamorous work. It is also the work that determines whether your AI system produces insights or produces noise. Whether you’re building internally or working with an AI powered mobile app development company, the data foundation must come first.
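Parts of that audit can be automated. The sketch below assumes customer records have already been exported as Python dictionaries with hypothetical `customer_id`, `email`, `country`, and `last_updated` fields (the last stored as a `datetime.date`). The two-letter country rule stands in for whatever consistency rules your own data needs, and the 365-day recency window is an arbitrary example. Ownership, the fourth audit question, still has to be answered by people.

```python
from collections import Counter
from datetime import date


def audit_records(records: list[dict], required_fields: tuple[str, ...],
                  max_age_days: int, today: date) -> dict:
    """Return simple completeness, consistency, recency and duplicate-ID metrics."""
    if not records:
        raise ValueError("no records to audit")
    total = len(records)

    # Completeness: every required field is present and non-empty.
    complete = sum(
        all(r.get(f) not in (None, "") for f in required_fields) for r in records
    )

    # Consistency (one example rule): country stored as a 2-letter uppercase code.
    consistent = sum(
        isinstance(r.get("country"), str) and len(r["country"]) == 2 and r["country"].isupper()
        for r in records
    )

    # Recency: record updated within the allowed window.
    fresh = sum(
        isinstance(r.get("last_updated"), date)
        and (today - r["last_updated"]).days <= max_age_days
        for r in records
    )

    # Duplicate IDs: the same customer_id appearing more than once.
    id_counts = Counter(r["customer_id"] for r in records if r.get("customer_id"))
    duplicate_ids = sorted(cid for cid, n in id_counts.items() if n > 1)

    return {
        "completeness": complete / total,
        "consistency": consistent / total,
        "recency": fresh / total,
        "duplicate_ids": duplicate_ids,
    }


if __name__ == "__main__":
    sample = [
        {"customer_id": "C1", "email": "a@example.com", "country": "DE", "last_updated": date(2026, 1, 10)},
        {"customer_id": "C2", "email": "", "country": "Germany", "last_updated": date(2023, 5, 1)},
        {"customer_id": "C1", "email": "a@example.com", "country": "de", "last_updated": date(2025, 12, 1)},
        {"customer_id": "C3", "email": "c@example.com", "country": "fr", "last_updated": None},
    ]
    fields = ("customer_id", "email", "country", "last_updated")
    print(audit_records(sample, fields, max_age_days=365, today=date(2026, 2, 1)))
```

Running a check like this against each source system separately is one way to see which datasets are AI-ready and which need remediation before they are merged.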

Step 3: Equip Your People Before You Deploy the System

Technology readiness and people readiness are not the same thing. You can have the most sophisticated AI infrastructure in your sector and still fail if the people who need to use, interpret, and maintain AI outputs aren’t equipped to do so. 53% of sales reps are still in the dark on how to extract value from the AI tools they already have. Invest in AI literacy training before deployment, not as an afterthought once the system is live.

Step 4: Build Governance Guardrails Before You Build the Model

Governance frameworks covering bias monitoring, model versioning, human oversight protocols, and incident response need to be in place before the first model goes into production. This is especially critical when working with an AI chatbot app development company deploying customer-facing AI, or building a mobile payments application with AI-driven fraud detection, where errors have immediate reputational and regulatory consequences.

- Document your model versioning approach before the first deployment.

- Define what “failure” looks like for your AI system and who is accountable when it happens.

- Build bias monitoring into the architecture from day one.
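One way to make these guardrails enforceable rather than aspirational is to keep a governance record next to every model version and block promotion to production until it is complete. The `ModelRelease` fields and the `deployment_blockers` check below are a hypothetical sketch, not a reference to any particular MLOps tool; the field names and sample values are assumptions.

```python
from dataclasses import dataclass, field


@dataclass
class ModelRelease:
    """Hypothetical governance record kept alongside each model version."""
    model_name: str
    version: str                  # version identifier of the trained model
    accountable_owner: str        # named person answerable when the system fails
    failure_criteria: dict[str, float] = field(default_factory=dict)  # metric -> threshold
    bias_check_groups: list[str] = field(default_factory=list)        # segments monitored for disparity
    incident_contact: str = ""    # who is paged when an AI incident occurs


def deployment_blockers(release: ModelRelease) -> list[str]:
    """List missing governance items; an empty list means the gate passes."""
    blockers = []
    if not release.version:
        blockers.append("no model version recorded")
    if not release.accountable_owner:
        blockers.append("no accountable owner named")
    if not release.failure_criteria:
        blockers.append("failure criteria not defined")
    if not release.bias_check_groups:
        blockers.append("no bias monitoring groups defined")
    if not release.incident_contact:
        blockers.append("no incident response contact")
    return blockers


if __name__ == "__main__":
    release = ModelRelease(
        model_name="support-triage",
        version="1.2.0",
        accountable_owner="Head of Support Operations",
        failure_criteria={"min_accuracy": 0.90},
    )
    print(deployment_blockers(release) or "Governance gate passed")
```

The check itself is trivial; its value is that "who is accountable" and "what counts as failure" must be written down before anything ships.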

Step 5: Start Narrow, Measure Rigorously, Scale What Works

The organizations that scale AI successfully don’t try to transform everything simultaneously. They identify one high-impact, well-scoped use case, deploy it carefully, measure the outcomes rigorously, and use those learnings to inform the next build. Whether that’s an AI app development project for internal operations, a mobile payments application with AI-powered fraud detection, or a customer-facing build with an AI chatbot development company, start narrow, prove the value, then expand.

Step 6: Make Readiness a Recurring Operating Discipline

The organizations moving fastest in AI aren’t the ones that completed a readiness assessment once. They’re the ones that made readiness a continuous habit: quarterly reviews, ongoing data governance, and iterative skills development. Gartner predicts agentic AI could drive approximately 30% of enterprise application software revenue by 2035. Capturing that trajectory requires building readiness as a sustained practice today.

Related reading → Generative AI in Healthcare: Use Cases, Benefits & What Founders Need to Know

What Quietly Kills AI Readiness in Practice

The most dangerous AI readiness failures aren’t dramatic. They accumulate slowly until a project is too far along to course-correct without high cost.

Chasing the opportunity before establishing the foundation. The AI business opportunities available in 2026 are real: autonomous agents, AI-powered fraud detection in mobile payments applications, intelligent customer support built with an AI chatbot app development company, and personalized mobile experiences delivered by an AI powered mobile app development company. But identifying the opportunity and being structurally ready to execute it are two entirely different things. Organizations that conflate the two commit resources before they have the infrastructure, data, or governance to deliver.

Deploying without measuring. An AI system deployed without defined success metrics will drift unnoticed. Without a feedback loop, you can’t tell whether the model is performing well, degrading over time, or producing biased outputs in specific edge cases. More than 80% of organizations report no tangible EBIT impact from generative AI investments, and a primary driver is deploying AI without the measurement infrastructure to know whether it’s working.
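A feedback loop doesn't need to be elaborate to be useful. The minimal sketch below assumes you can compute an accuracy figure for each review period from labeled real-world outcomes; it compares a rolling average against the accuracy measured at launch and flags degradation once the gap exceeds a tolerance. The class name, the four-period window, and the 0.05 tolerance are illustrative assumptions, and a production setup would also track these figures per customer segment to catch the edge-case bias described above.

```python
from collections import deque


class PerformanceMonitor:
    """Compares a rolling window of per-period accuracy against the launch baseline."""

    def __init__(self, baseline_accuracy: float, tolerance: float = 0.05, window: int = 4):
        if window < 1:
            raise ValueError("window must be at least 1")
        self.baseline = baseline_accuracy
        self.tolerance = tolerance
        self.recent = deque(maxlen=window)  # oldest periods drop out automatically

    def record(self, period_accuracy: float) -> None:
        """Add the accuracy measured for one review period."""
        self.recent.append(period_accuracy)

    def is_degraded(self) -> bool:
        """True once the rolling average falls more than `tolerance` below baseline."""
        if len(self.recent) < self.recent.maxlen:
            return False  # not enough periods yet to judge
        rolling = sum(self.recent) / len(self.recent)
        return rolling < self.baseline - self.tolerance


if __name__ == "__main__":
    monitor = PerformanceMonitor(baseline_accuracy=0.90)
    # Illustrative weekly accuracy figures from labeled production outcomes.
    for week, acc in enumerate([0.91, 0.89, 0.90, 0.88, 0.80, 0.79, 0.78], start=1):
        monitor.record(acc)
        print(f"Week {week}: accuracy={acc:.2f}, degraded={monitor.is_degraded()}")
```

Averaging over a window trades a little detection speed for fewer false alarms from a single noisy week; the right window and tolerance depend on how quickly errors become costly in your use case.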

Selecting an app developer for hire before conducting a readiness assessment. Engaging an AI app developer for hire or an external AI development partner before you understand your data quality, infrastructure constraints, and governance requirements means the partner is scoping a build they don’t fully understand. The result is almost always a project that starts smoothly and runs into expensive structural problems in the second half.

- Skipping the parallel run phase before going fully live on an AI system.

- Assuming that access to AI tools automatically produces adoption of AI tools.

- Treating data governance as an IT responsibility rather than a business-wide discipline.

Related reading → How SMBs Can Integrate AI into Existing Software Systems

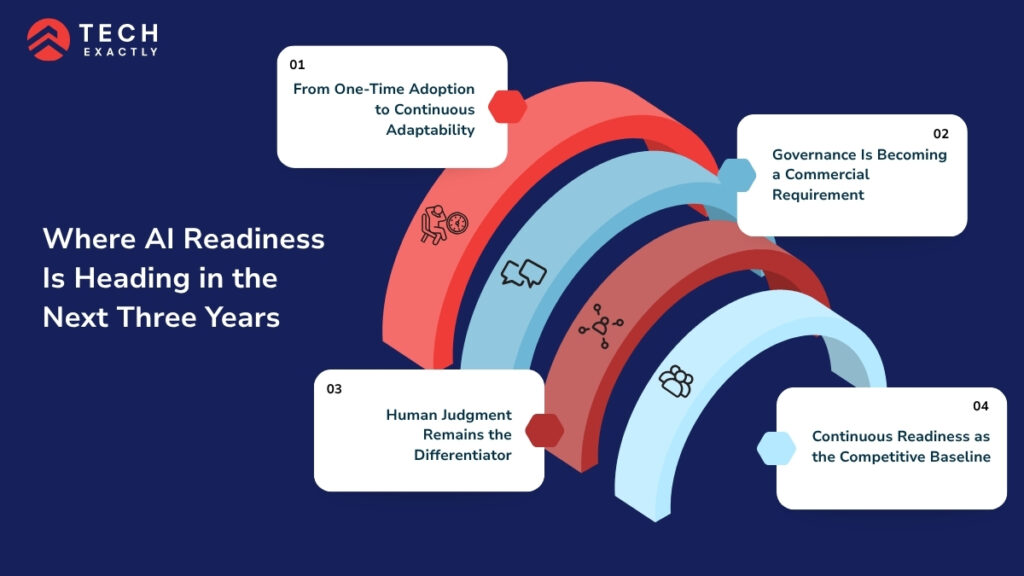

Where AI Readiness Is Heading in the Next Three Years

From One-Time Adoption to Continuous Adaptability

The question in 2023 was “Should we adopt AI?” The question in 2026 is “How do we adapt fast enough?” Readiness in this environment isn’t about getting prepared once; it’s about building the organizational muscle to absorb and integrate AI capability continuously as it evolves.

Governance Is Becoming a Commercial Requirement

Responsible AI, explainability, bias monitoring, human oversight, and data provenance are moving from ethical aspiration to commercial and legal requirement. Customers, regulators, and enterprise buyers are increasingly making vendor decisions based on AI governance posture alongside AI capability. Organizations that build governance infrastructure now will have a meaningful advantage as this requirement tightens.

Human Judgment Remains the Differentiator

The organizations winning with AI in 2026 are not the ones with the most automated workflows. They’re the ones that have identified where human judgment belongs alongside machine capability, designing AI systems that amplify expertise rather than attempt to replace it. Whether you’re working with an AI chatbot development company on customer-facing conversational AI or building a complex internal automation through an AI app development company in USA, the architecture decisions that define where humans stay in the loop are increasingly the decisions that determine whether users trust and adopt the system.

Continuous Readiness as the Competitive Baseline

The organizations that lead in the AI era aren’t those who complete a readiness assessment and declare themselves done. They’re the ones that make readiness a permanent operating discipline: quarterly reviews, continuous data governance, iterative skills development built into the rhythm of how they work. That discipline is what separates the 34% deeply transforming their business from the 37% still experimenting at the surface.

Choosing the Right AI Development Partner for Your Readiness Journey

When evaluating an AI chatbot app development company, an AI app development company in USA, or any app developer for hire for an AI initiative, the most important signal is how they start the engagement. Do they begin with your readiness or with their product?

The right partner conducts a data audit before scoping the build. They ask about your governance framework before recommending a model. They challenge your timeline if your data isn’t ready to support it. Whether you’re exploring AI business opportunities in a new vertical, building a mobile payments application with real-time AI fraud detection, learning how to build an AI model for your specific use case, or engaging a specialist AI powered mobile app development company for your first production deployment, the starting point is always an honest assessment of where you actually are.

At Tech Exactly, every AI engagement begins with a readiness assessment before a single line of code is written. We’ve helped organizations across healthcare, FinTech, retail, and logistics build AI app development programs that scale, because we treat data architecture, infrastructure integration, and governance design as product requirements, not afterthoughts.

If you want an honest assessment of your AI readiness before committing budget to a build, let’s start with that conversation.


Frequently Asked Questions

What is AI readiness in practical terms?

It's your organization's measured ability to deploy and scale AI without structural failure across seven dimensions: strategy, data, infrastructure, people, governance, culture, and measurement. It matters because more than 80% of organizations report no tangible EBIT impact from generative AI investments, and the gap between those capturing value and those writing off pilots is almost entirely explained by readiness, not by technology.

Assess the seven core dimensions using the checklist in this guide. If you can't clearly answer who owns your data, what specific problem AI is solving, or how you'll measure success, you're not yet ready to commission an AI app development build. Run the assessment first. The two to four weeks it takes will save months of expensive rework.

Engage an app developer for hire or an external partner when you need specialist skills quickly, are building outside your core technical competency, or are scaling faster than internal hiring allows. Build internally when the capability is your core competitive differentiator and requires deep institutional knowledge over time. Most organizations benefit from a hybrid: internal ownership of strategy and data, external partnership for specialized AI app development execution.

An AI chatbot development company worth working with starts by understanding your specific use case, your existing tech stack, and your data quality before recommending a solution. Look for experience with production conversational AI: context management, multi-turn memory, fallback routing, and CRM integration. A chatbot that works in a demo but degrades in production is the most common failure mode in this category.

Identifying an AI business opportunity, whether a new revenue stream, a competitive use case, or an operational efficiency, is the starting point. Readiness determines whether you can execute it. The organizations consistently converting AI business opportunities into measurable outcomes are the ones that assess and address readiness gaps before committing resources to the build, not after discovering them mid-project.

Pallabi Mahanta, Senior Content Writer at Tech Exactly, has over 5 years of experience in crafting marketing content strategies across FinTech, MedTech, and emerging technologies. She bridges complex ideas with clear, impactful storytelling.