AI Chatbot for Healthcare: Is It Worth Building One in 2026?

52% of US patients are already using chatbots for health information. But only 19% of medical practices have actually deployed one.

This gap tells you two things: patients are ready, and most practices aren’t.

And the ones who are sitting on the sidelines aren’t wrong to hesitate. ECRI named misuse of AI chatbots the #1 health technology hazard for 2026. Babylon Health, once valued at $4.2 billion, went bankrupt after its chatbot missed heart attack symptoms. Woebot, with 1.5 million users and $123 million in funding, shut down its consumer app because FDA compliance proved too expensive.

So, is building an AI chatbot for healthcare worth it? As a healthcare app development company that’s built compliant AI systems for clinics and health tech startups, our honest answer: it depends entirely on what you’re building it to do.

The gap exists for a reason. The practices holding off on chatbots aren’t stuck in the past; they are watching from the sidelines. They saw Babylon Health collapse, they watched Woebot shut down its consumer app, and they have been reading the ECRI reports.

This isn’t fear of new technology; it is pattern recognition.

At this point, no one is really debating whether chatbots can work in healthcare, as there are enough examples proving they do. The real struggle is figuring out which ones work, which specific workflows they belong in, and what it takes to build something that doesn’t create a brand-new set of headaches.

In this guide, we explore where healthcare AI chatbots deliver a measurable ROI and where the tech is still too risky to touch. We cover the compliance realities that most vendors conveniently ignore, what it genuinely costs to build a chatbot the right way, and how to decide whether you should build your own or buy an off-the-shelf solution.

Benefits of AI Chatbots in Healthcare: Use Cases That Actually Deliver

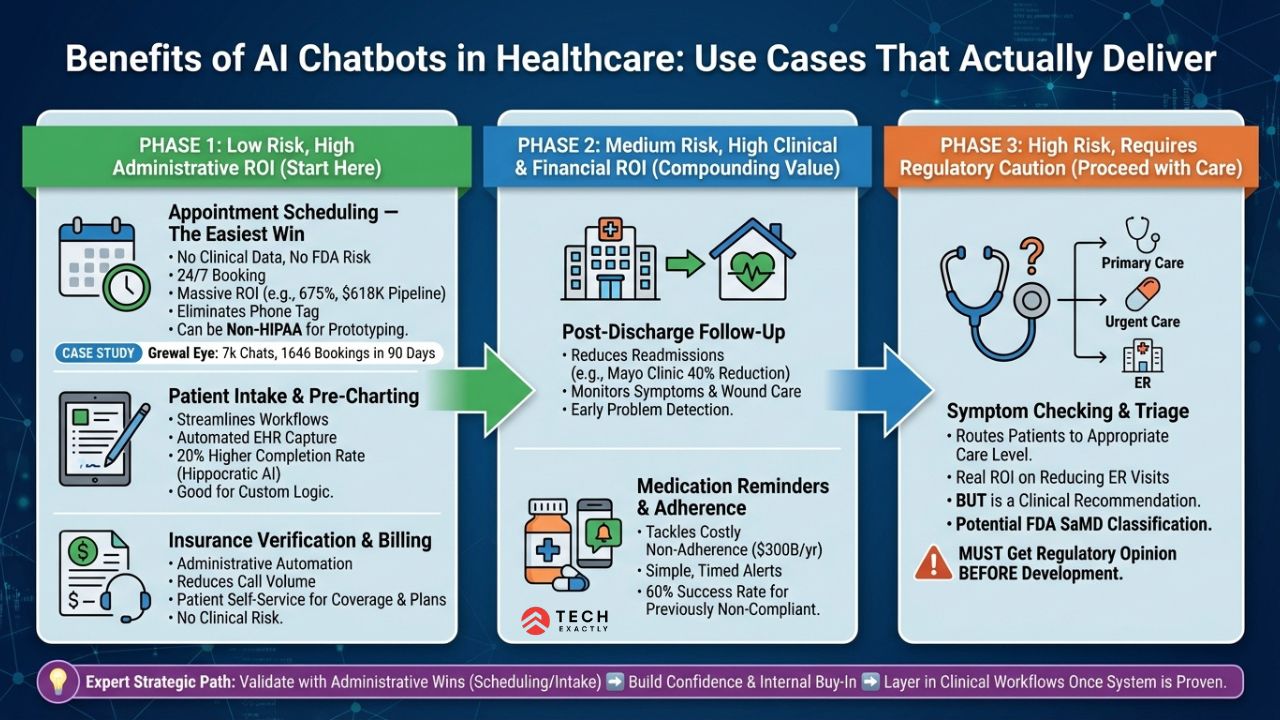

Not every use case delivers the same results. Some generate a clear, measurable ROI within months, while others create more liability than value. Here is what the data reveals about each path.

Appointment Scheduling — The Easiest Win

This is the use case where AI chatbots shine the most with the least risk. No clinical data is involved, nobody is making a diagnostic decision, and you avoid the tricky FDA gray zones entirely. It comes down to straightforward calendar management.

Grewal Eye Institute deployed a WhatsApp chatbot that handled 7,000+ chats in 90 days, booked 1,646 appointments, and generated $618K in pipeline revenue — a 675% ROI. A multi-location clinic chain eliminated 4 hours of daily phone work per receptionist across 12 locations.

The appeal is obvious: patients prefer booking online or via chat over calling, the same shift driving doctor appointment app development across the industry. And 78% of physicians view chatbots positively for scheduling tasks, a rare consensus in a profession that’s skeptical of most AI claims.

The real reason scheduling is the safest place to begin isn’t just the return on investment; it’s the lack of regulatory friction. If the scheduling chatbot never touches or stores Protected Health Information (PHI), it sits outside of HIPAA. You don’t need a BAA, you bypass audit logging requirements, and you aren’t hit with encryption mandates.

This allows you to prototype, test, and validate the technology without ever touching patient data. It is a massive strategic advantage when you are trying to secure stakeholder buy-in before funding a fully HIPAA-compliant build.

Patient Intake and Pre-Charting — High ROI, Low Risk

Traditional pre-visit intake forms are a huge time sink for both your staff and your patients. An AI chatbot can handle this upfront, walking patients through their medical history, insurance verification, and symptoms before they arrive. This streamlines the whole process and saves time for everyone involved.

This is also where custom development starts to make sense. Off-the-shelf tools are built for generic use cases, but most practices aren’t generic. If you have specialty-specific intake logic, custom EHR fields, or unique screening workflows, you’ll need a solution built around them.

Post-Discharge Follow-Up — Where the ROI Compounds

Hospital readmissions cost the US healthcare system more than $26 billion annually. An AI chatbot that conducts post-discharge check-ins focused on medication adherence, symptom monitoring, and wound care instructions helps your practice catch problems early and stop complications before they turn into an ER visit.

Mayo Clinic achieved a 40% reduction in hospital readmissions using AI-powered remote monitoring. Hippocratic AI reported a 30% readmission reduction and 360% increase in team capacity for chronic care management, with staff saving roughly 4,000 hours per month.

Medication Reminders and Adherence

Non-adherence costs the US healthcare system an estimated $300 billion per year. AI chatbots that send timed reminders and track compliance are simple to build and measurably effective.
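To make "timed reminders and compliance tracking" concrete, here is a minimal Python sketch. The `DoseSchedule` class, the function names, and the sample medication are all hypothetical; a real build would sit on top of your messaging channel and, once it stores patient data, your HIPAA-compliant storage.

```python
from dataclasses import dataclass
from datetime import datetime, time, timedelta


@dataclass
class DoseSchedule:
    """Hypothetical schedule: one medication with fixed daily dose times."""
    medication: str
    dose_times: list


def reminders_between(schedule: DoseSchedule, start: datetime, end: datetime) -> list:
    """Return every reminder timestamp in the half-open window [start, end)."""
    reminders = []
    day = start.date()
    while day <= end.date():
        for dose_time in schedule.dose_times:
            due_at = datetime.combine(day, dose_time)
            if start <= due_at < end:
                reminders.append(due_at)
        day += timedelta(days=1)
    return sorted(reminders)


def adherence_rate(due: list, confirmed: set) -> float:
    """Share of due doses the patient confirmed taking (0.0 if nothing was due)."""
    if not due:
        return 0.0
    return sum(1 for due_at in due if due_at in confirmed) / len(due)


# Illustrative data only: two doses a day over a two-day window.
schedule = DoseSchedule("example-med", [time(8, 0), time(20, 0)])
window_start = datetime(2026, 1, 5)
due = reminders_between(schedule, window_start, window_start + timedelta(days=2))
confirmed = {due[0], due[1], due[3]}  # the patient skipped the third dose
print(len(due), adherence_rate(due, confirmed))  # 4 0.75
```

The adherence rate is the number a care team would watch: a drop below an agreed threshold is the trigger for a human follow-up call.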

Insurance Verification and Billing Questions

AI chatbots that verify coverage, explain plan details, and handle prior authorization paperwork reduce billing department call volume significantly. This is administrative automation, so there is no clinical risk and the cost savings are clear. Plus, patients prefer self-service for billing questions anyway.

Symptom checking falls into an entirely different category from the previous use cases, and it demands a clear understanding before development starts.

A symptom-checking chatbot triages patients by asking questions about their symptoms and routing them to the appropriate level of care, such as primary care, urgent care, telehealth, or the ER. It can reduce unnecessary emergency room visits while improving the appropriateness of care. The ROI on that is genuine.

There is also a regulatory risk involved here. Whenever a symptom checker influences where a patient goes for care, it’s making a clinical recommendation.

Depending on its design and how the output is applied, the FDA might classify it as Software as a Medical Device (SaMD). This doesn’t mean you shouldn’t build it. It means you need to get a regulatory opinion before you start development, not after the fact.

💡 Expert Tip: Start with scheduling and intake. These two use cases deliver the quickest ROI, carry the least compliance burden, and build internal confidence before you expand into clinical workflows. Every successful healthcare chatbot deployment we have built follows this sequence: start administrative, then layer in clinical once you’ve validated the system.

Let's Start Your Project Today

Ready to build your AI Healthcare Chatbots with us? Reach out now – our experts are just one click away.

Where AI Healthcare Chatbots Create More Problems Than They Solve

This is the part that a lot of vendors often skip. But if you’re investing real money, you need to know where the technology breaks.

Clinical Diagnosis — Not Ready

The numbers are sobering. In pediatric studies, AI chatbots had a misdiagnosis rate of over 80%. Google’s Med-Gemini hallucinated a nonexistent brain structure in published research.

To address this, enterprise-grade healthcare chatbots use Retrieval-Augmented Generation (RAG). This approach anchors the AI’s responses in your clinic’s verified medical protocols and proprietary data, which significantly lowers the risk of unsafe or misleading clinical output.
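As a rough illustration of the grounding idea, the sketch below retrieves the best-matching protocol snippet and builds a prompt restricted to it. Everything here is an assumption for illustration: the `PROTOCOLS` text is placeholder content, the word-overlap ranking stands in for the embedding search a production system would use, and no LLM is actually called.

```python
import re
from typing import Optional

# Hypothetical placeholder snippets standing in for a clinic's verified content.
PROTOCOLS = {
    "refill": "Prescription refill requests are forwarded to the pharmacy team within one business day.",
    "hours": "Clinic opening hours are Monday to Friday, 8am to 5pm.",
    "fasting": "Patients scheduled for a fasting blood draw should not eat after midnight.",
}
STOPWORDS = {"a", "are", "do", "for", "i", "is", "my", "the", "to", "what"}


def tokenize(text: str) -> set:
    """Lowercase word set with a few common stopwords removed."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS


def retrieve(question: str, k: int = 1) -> list:
    """Rank snippets by word overlap with the question; drop those with none."""
    query = tokenize(question)
    scored = [(len(query & tokenize(text)), key) for key, text in PROTOCOLS.items()]
    scored = [(score, key) for score, key in scored if score > 0]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [key for _, key in scored[:k]]


def build_prompt(question: str) -> Optional[str]:
    """Return a grounded prompt, or None when nothing verified matches."""
    keys = retrieve(question)
    if not keys:
        return None  # nothing to ground on: route the patient to staff instead
    context = "\n".join(f"- {PROTOCOLS[key]}" for key in keys)
    return (
        "Answer using ONLY the protocol text below. If it does not cover the "
        "question, say so and offer to connect the patient with staff.\n"
        f"Protocols:\n{context}\n"
        f"Question: {question}"
    )


print(retrieve("Do I need to stop eating before my fasting blood draw?"))  # ['fasting']
print(build_prompt("What is the weather?"))  # None
```

The design choice worth copying is the `None` branch: when retrieval finds nothing verified, the bot hands off rather than letting the model improvise.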

The technology isn’t there yet for independent diagnostic decisions. AI can assist clinicians by surfacing relevant data, flagging patterns, and suggesting differentials, but replacing clinical judgment? That is definitely not happening in 2026.

Emergency Triage — Too High-Stakes

When someone is having a cardiac event, a chatbot asking “on a scale of 1-10, how would you describe your chest pain?” isn’t helpful. It’s dangerous. Babylon Health’s chatbot missed heart attack symptoms in documented cases.

Emergencies require human judgment.

Mental Health Therapy — The Regulatory Minefield

Stanford research shows AI therapy chatbots can introduce biases and failures with “dangerous consequences”. No AI chatbot has been FDA-approved to diagnose, treat, or cure a mental health disorder as of 2026.

If your chatbot crosses the line from “wellness support” to “therapeutic intervention,” you’re in the FDA’s SaMD territory without a clear path forward. Building an app that meets those regulatory requirements can double or triple your budget and timeline.

⚠️ Reality Check: FDA oversight is a nightmare. Any chatbot that makes clinical recommendations, provides diagnoses, or substitutes for a provider risks crossing into regulated territory. Stick with administrative tasks, and only build clinical features with expert regulatory guidance.

AI Chatbot for Healthcare: The Compliance Layer You Can't Skip

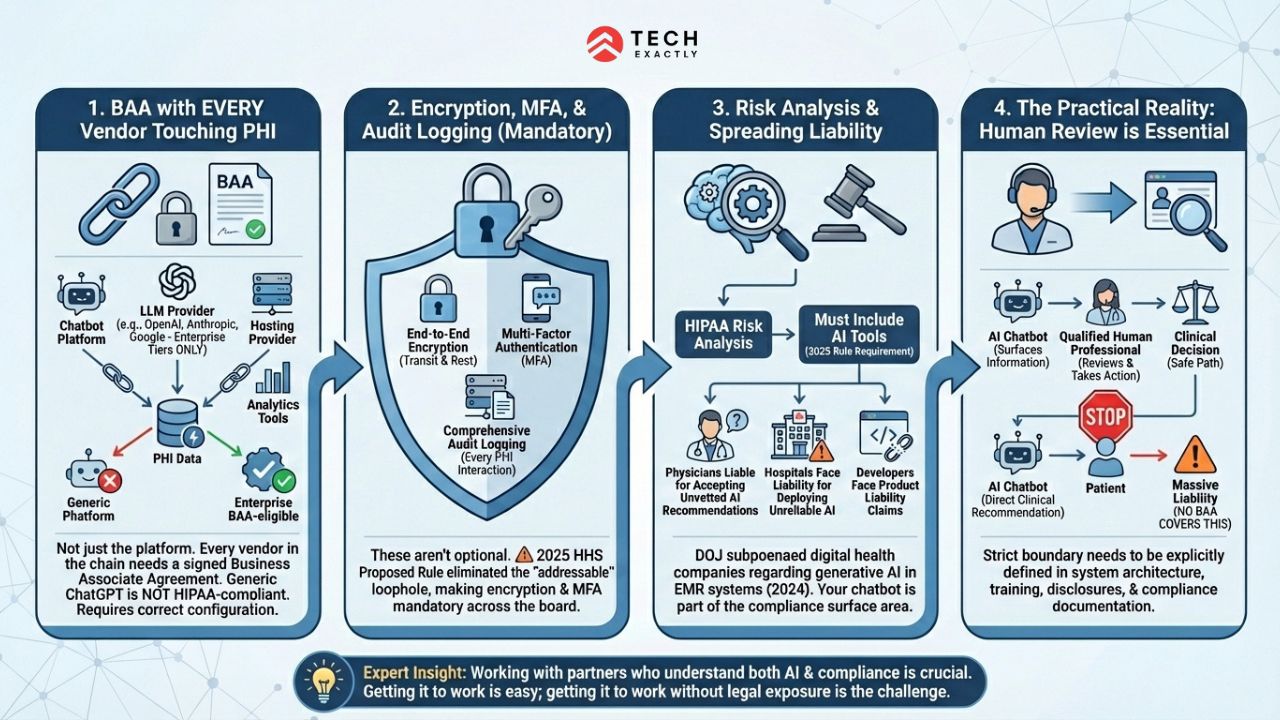

Any AI chatbot handling patient data triggers HIPAA requirements. There’s no shortcut here.

BAA with every vendor touching PHI. Your chatbot platform, your LLM provider, your hosting provider, your analytics tools: every vendor in the chain needs a signed Business Associate Agreement. Generic ChatGPT is not HIPAA-compliant. Enterprise tiers from OpenAI, Anthropic, and Google offer BAA-eligible versions, but you need to configure them correctly.

Encryption, access controls, and audit logging. End-to-end encryption in transit and at rest. Multi-factor authentication for any admin access. Comprehensive audit logging of every interaction involving PHI. These aren’t optional, and the HIPAA Security Rule update that HHS proposed in 2025 would eliminate the “addressable” loophole, making encryption and MFA mandatory across the board.

Risk analysis must include AI. Under the 2025 proposal, entities would have to include AI tools in their HIPAA risk analysis and risk management activities. Your chatbot isn’t a standalone tool; it’s part of your compliance surface area.

Liability is spreading. Physicians are liable for blindly accepting AI recommendations. Hospitals face liability for deploying unreliable AI without vetting. And increasingly, software developers face product liability claims for AI malfunctions. In 2024, the DOJ subpoenaed several digital health companies regarding generative AI use in EMR systems.

This is why working with a healthcare app development company that understands both AI and compliance matters. Getting the chatbot to work is the easy part. Getting it to work without creating legal exposure is where most teams stumble.
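To show what audit logging of PHI interactions can look like in code, here is a minimal Python sketch of an append-only, hash-chained log. The `AuditLog` class and its field names are hypothetical, and hash chaining is our illustrative choice for tamper evidence rather than something HIPAA prescribes; a production system would also need durable storage, access controls, and retention policies.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    """Stable SHA-256 over the entry body (keys sorted for determinism)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditLog:
    """In-memory, append-only log in which each entry commits to the previous one."""

    def __init__(self):
        self.entries = []

    def record(self, actor: str, action: str, patient_ref: str) -> dict:
        body = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "patient_ref": patient_ref,  # an internal reference, not a name
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS_HASH,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; any edited or removed entry breaks it."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.record("chatbot", "read_appointment", "patient-001")
log.record("nurse.lee", "view_transcript", "patient-001")
print(log.verify())  # True
```

If anyone edits an earlier entry after the fact, `verify()` returns `False`, which is the property an auditor cares about.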

The practical reality for any healthcare organization launching a chatbot is this: if the AI’s output is going to influence a clinical decision, you have to build a human review step directly into your workflow.

This cannot be treated as an optional feature. The division of labor needs to be clear: the chatbot surfaces the necessary information, and a qualified human professional acts on it. This boundary needs to be explicitly defined in your system architecture, staff training programs, patient disclosures, and compliance documentation.

If your organization blurs that line and lets an AI give clinical recommendations directly to patients without a human checking them first, you’re exposing yourself to massive liability that no BAA is ever going to cover.
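One way to enforce that boundary in the system architecture is a release gate that refuses to send clinical content until a named reviewer has approved it. The Python sketch below is illustrative only; `Draft`, `Status`, and the function names are hypothetical, and how a message gets flagged as clinical is left to your own classification rules.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    PENDING_REVIEW = "pending_review"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Draft:
    patient_ref: str
    text: str
    clinical: bool  # True if the content could influence a clinical decision
    status: Status = Status.PENDING_REVIEW
    reviewer: Optional[str] = None


def submit(draft: Draft) -> Draft:
    """Administrative drafts are auto-approved; clinical drafts wait for a human."""
    if not draft.clinical:
        draft.status = Status.APPROVED
    return draft


def review(draft: Draft, reviewer: str, approve: bool) -> Draft:
    """Record a qualified reviewer's decision on a draft."""
    if not reviewer:
        raise ValueError("a named reviewer is required")
    draft.reviewer = reviewer
    draft.status = Status.APPROVED if approve else Status.REJECTED
    return draft


def can_send(draft: Draft) -> bool:
    """Clinical content is releasable only when approved by a named reviewer."""
    if draft.status is not Status.APPROVED:
        return False
    return (not draft.clinical) or draft.reviewer is not None


faq = submit(Draft("patient-001", "We open at 8am on weekdays.", clinical=False))
advice = submit(Draft("patient-001", "Draft note about reported symptoms.", clinical=True))
print(can_send(faq), can_send(advice))  # True False

review(advice, reviewer="dr.patel", approve=True)
print(can_send(advice))  # True
```

Keeping the check in one function (`can_send`) means every outbound channel can call the same gate, which is easier to document for compliance than scattered conditionals.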


How Much Does an AI Chatbot for Healthcare Cost to Build?

The range is wide because “healthcare chatbot” covers everything from a scheduling FAQ bot to a clinical decision support system.

| Approach | Cost | Best For |
|---|---|---|
| No-code MVP (Voiceflow + GPT-4o-mini) | $0–$200 upfront, $80–$375/mo | Proof of concept, FAQ bots, non-PHI use cases |
| Platform + custom dev | $10,000–$50,000 | Multi-workflow bots: scheduling, intake, insurance verification |
| Full custom HIPAA-compliant | $50,000–$150,000+ | EHR-integrated bots handling PHI, clinical workflows |

The biggest cost multiplier isn’t the AI — it’s HIPAA compliance and EHR integration. A scheduling chatbot that doesn’t touch PHI? You can prototype that in a week with Voiceflow. A chatbot that pulls patient records from Epic, handles insurance verification, and logs interactions to the EHR? That’s a custom development project with compliance architecture, penetration testing, and ongoing maintenance.

Before evaluating build costs, keep in mind the financial risk of non-compliance. Improperly configured chatbots risk HIPAA fines of up to $50,000 per violation, and a prolonged PHI leak can involve many violations, so the total multiplies quickly. Investing in a compliant build is therefore essential insurance, not just operational overhead.

Ongoing costs to budget for:

- LLM API usage: $20–$750/mo depending on model and conversation volume

- Platform fees: $60–$500/mo

- HIPAA compliance maintenance: $10,000–$60,000/year (risk assessments, audits, policy updates)

- Annual software maintenance: 20–30% of initial build cost
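To turn the ranges above into a yearly budget figure, a short Python sketch can add them up. The ranges come straight from the list; the `Range` helper, the function name, and the $50,000 build cost used in the example are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Range:
    low: int
    high: int


# Figures taken from the ongoing-cost list (USD).
LLM_API_MONTHLY = Range(20, 750)
PLATFORM_MONTHLY = Range(60, 500)
COMPLIANCE_YEARLY = Range(10_000, 60_000)
MAINTENANCE_PERCENT = Range(20, 30)  # percent of the initial build cost, per year


def annual_ongoing_cost(build_cost: int) -> Range:
    """Sum the low ends and the high ends into a yearly budget range."""
    low = (
        12 * (LLM_API_MONTHLY.low + PLATFORM_MONTHLY.low)
        + COMPLIANCE_YEARLY.low
        + build_cost * MAINTENANCE_PERCENT.low // 100
    )
    high = (
        12 * (LLM_API_MONTHLY.high + PLATFORM_MONTHLY.high)
        + COMPLIANCE_YEARLY.high
        + build_cost * MAINTENANCE_PERCENT.high // 100
    )
    return Range(low, high)


# Hypothetical example: a $50,000 HIPAA-compliant build.
budget = annual_ongoing_cost(50_000)
print(budget.low, budget.high)  # 20960 90000
```

The spread is wide because it combines the cheapest and most expensive end of every line item; most practices will land somewhere in between, depending on conversation volume and audit scope.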

💡 Expert Tip: Before you build anything custom, run a quick two-week pilot with a simple no-code tool on a strictly non-PHI use case, like general clinic information or appointment FAQs. This lets you measure call deflection and patient engagement accurately.

If the results are positive, you can safely invest in fully HIPAA-compliant custom development tailored to the workflows that really drive value. If the numbers fall flat, you’ve spent about $200 learning that lesson rather than blowing $50,000 on a custom build.

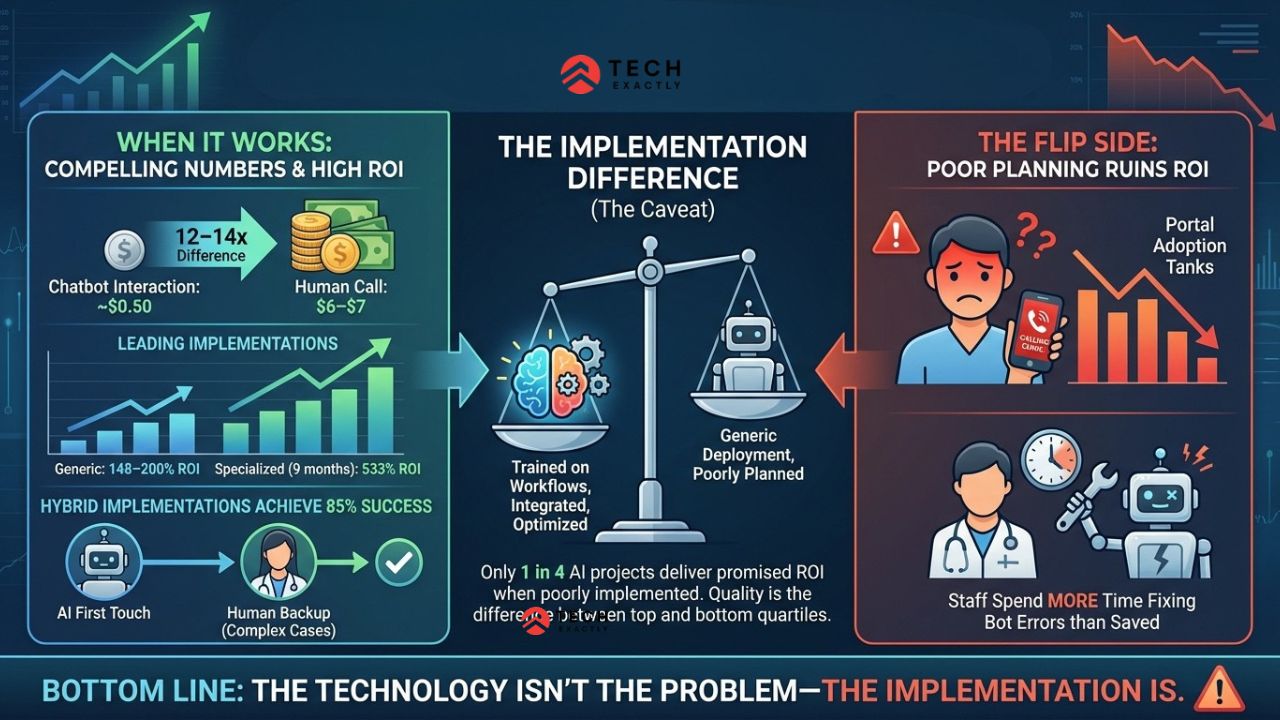

AI Chatbot ROI in Healthcare: Does It Actually Pay Off?

When it works, the numbers are impressive. A chatbot interaction costs roughly $0.50 compared to $6–$7 for a human call. That’s a 12–14x difference. Leading implementations report 148–200% ROI, with specialized deployments hitting 533% within 9 months.
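The per-interaction arithmetic is easy to reproduce, and the same formula lets you plug in your own volumes. In the Python sketch below, the $0.50 and $6–$7 figures come from the paragraph above; the 1,500 monthly interactions and the $50,000 first-year cost are hypothetical inputs, not benchmarks.

```python
def cost_ratio(human_cost: float, bot_cost: float) -> float:
    """How many times more a human-handled call costs than a bot interaction."""
    return human_cost / bot_cost


def monthly_savings(interactions: int, human_cost: float, bot_cost: float) -> float:
    """Savings when `interactions` calls per month are handled by the bot."""
    return interactions * (human_cost - bot_cost)


def roi_percent(gain: float, cost: float) -> float:
    """Classic ROI: net gain over cost, expressed as a percentage."""
    return 100 * (gain - cost) / cost


BOT_COST = 0.50
HUMAN_COST_LOW, HUMAN_COST_HIGH = 6.00, 7.00

# Hypothetical inputs for illustration only.
MONTHLY_INTERACTIONS = 1_500
FIRST_YEAR_COST = 50_000

print(cost_ratio(HUMAN_COST_LOW, BOT_COST), cost_ratio(HUMAN_COST_HIGH, BOT_COST))  # 12.0 14.0

savings = monthly_savings(MONTHLY_INTERACTIONS, HUMAN_COST_LOW, BOT_COST)
first_year_gain = 12 * savings
print(savings, roi_percent(first_year_gain, FIRST_YEAR_COST))  # 8250.0 98.0
```

Note that this counts only deflected call costs; revenue from extra bookings or avoided readmissions would enter as additional gain, and ongoing fees as additional cost.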

But here’s the caveat IBM won’t put in their pitch deck: only 1 in 4 AI projects deliver the promised ROI. The difference between the top quartile and the bottom three? Implementation quality. A chatbot that’s been trained on your specific workflows, integrated with your systems, and continuously optimized will outperform a generic deployment every time.

Hybrid implementations — AI handling the first touch with human backup for complex cases — achieve a higher success rate compared to fully automated deployments.

Now for the flip side: poorly planned chatbots ruin the ROI. When the bot fails, frustrated patients call the clinic anyway, tanking portal adoption. Staff often end up spending more time fixing bot errors than the tech originally saved. Bottom line: the technology isn’t the problem; the implementation is.

The Bottom Line

An AI chatbot for healthcare is worth building — if you build the right thing.

Start with administrative workflows where the ROI is proven and the compliance burden is light, and roll out in phases. Phase One covers administrative tasks such as scheduling, intake, and insurance verification. These are your quick wins: they carry the lowest compliance burden, deliver the quickest ROI, and give you the hard data you need to justify expanding later.

In Phase Two, start layering in clinical support, like post-discharge follow-ups and medication reminders, with solid compliance and human oversight at every step.

Stay away from independent diagnosis, unsupervised therapy, and anything that substitutes for clinical judgment. The technology will get there. The regulations will catch up. But in 2026, the companies winning with healthcare AI are the ones augmenting their clinical teams, not replacing them.

If you’re ready to build, we can help you scope the right chatbot for your practice — from a $10K intake bot to a full EHR-integrated clinical workflow. The first step is figuring out what’s worth automating and what isn’t.


FAQ on AI Chatbot for Healthcare

If you stick to administrative tasks like scheduling or intake, absolutely: the ROI is proven. But for clinical diagnosis or therapy? The tech and regulations in 2026 simply aren’t ready to justify the risk.

A non-PHI scheduling bot can be prototyped for under $500. A HIPAA-compliant chatbot with EHR integration runs $50,000–$150,000+ for the initial build, plus $2,000–$5,000/month in ongoing costs. The compliance layer is what drives the cost, not the AI.

To be clear, free and consumer-grade versions are not HIPAA-compliant. OpenAI’s Enterprise and API tiers do offer BAA-eligible access, but true compliance requires proper configuration on your end. You need to implement strict encryption, access controls, and audit logging, and execute a signed BAA. The same framework applies whether you are integrating OpenAI, Claude, or Google's Gemini.

General wellness chatbots (scheduling, reminders, general health information) are not considered medical devices. But if your chatbot diagnoses, treats, or provides clinical recommendations, it may fall under the FDA's SaMD framework. The line is blurry and getting stricter.

There is a reason ECRI named AI chatbot misuse the biggest health tech hazard for 2026. The biggest threats are bots stepping out of line to imply diagnoses, unsecured pipelines leaking PHI, and shattered patient trust. Keep in mind that if a bot gives bad advice, patients won’t blame the software; they will blame your practice.

For basic use cases with standard workflows, buy. For anything involving PHI, custom clinical workflows, or EHR integration, build custom. We covered this in depth in our build vs buy guide for healthcare software.

Manas Das, Mobile App Architect at Tech Exactly, has over 9 years of experience leading teams in iOS, Android, and cross-platform development. He specialises in scalable app architecture and GenAI-driven mobile innovation.