How to Audit Your AI System Before Production: A CTO’s Checklist for Accuracy, Security, and Compliance

Key Takeaways:

- Over 80% of AI projects fail to deliver meaningful production value, twice the failure rate of traditional IT projects, and most failures are preventable with a structured pre-launch audit.

- Only 48% of AI projects make it into production, and it takes an average of 8 months to go from prototype to production

- The EU AI Act (August 2026 enforcement) introduces fines of up to 7% of global annual turnover for non-compliant high-risk AI systems, ai audit readiness is now a legal obligation.

- Systems that worked perfectly in demos began failing in production, outputs were inconsistent across similar inputs, and teams could not explain why the model behaved differently day to day.

- AI auditing isn’t a one-time gate before launch; it’s a continuous governance function embedded in how your product operates after it ships.

We’ve seen this pattern play out more times than we’d like to admit at Tech Exactly and across the industry. A team builds something that looks genuinely impressive in controlled conditions. The demo works. The stakeholders are confident. The timeline is on track. Then the system hits real users, real data, and real edge cases, and something quietly starts to break.

It’s not always dramatic. Sometimes it’s subtle: outputs that were accurate during testing drift slightly as production data distributions shift. Sometimes it’s expensive: token costs that looked manageable in a pilot spiral unpredictably at scale. And sometimes it’s catastrophic: a healthcare AI that recommends a wrong treatment path, a financial AI that flags legitimate transactions as fraud, a customer-facing chatbot that leaks information it shouldn’t have access to.

MIT’s 2025 GenAI Divide research found that 95% of organizations saw zero measurable return from generative AI. This failure traces back to the absence of a structured evaluation process before the system went live.

That’s exactly what this blog addresses. As an AI app development company in USA that has shipped production AI across healthcare, FinTech, and SaaS, we’ve developed a pre-production audit checklist that we run on every AI system before it touches real users. Here’s how it works, and why each step matters more than most teams realize.

What Is an AI Audit and Why Does It Matter Before Production?

An AI audit is a structured, systematic evaluation of an AI system’s data quality, model behavior, infrastructure, security posture, and governance controls performed before the system is exposed to production traffic. It translates the abstract concept of “readiness” into verifiable, documented criteria that are either passed or failed.

How to audit AI correctly means going beyond technical performance benchmarks. It means asking whether the system behaves reliably across the full distribution of real-world inputs, including the inputs your test set never included. It means verifying that every data access event is logged, that every model output is traceable back to its source, and that when something goes wrong, your team has the visibility to understand what happened and why.

The regulatory context makes this even more urgent. The EU AI Act begins enforcement in August 2026. California’s AI transparency rules took effect in January 2026. SOC 2 auditors are now routinely asking about AI usage controls. For CTOs, AI auditing is a must-have compliance requirement for any organization deploying AI in a commercial context.

Does Your Training Data Actually Reflect the Real World Your Model Will Operate In?

This is the question we ask first on every engagement, and it’s the one most teams answer incorrectly because they confuse “we have data” with “we have the right data.”

Gartner reports that 30% of AI projects fail due to poor data quality or lack of relevant data.

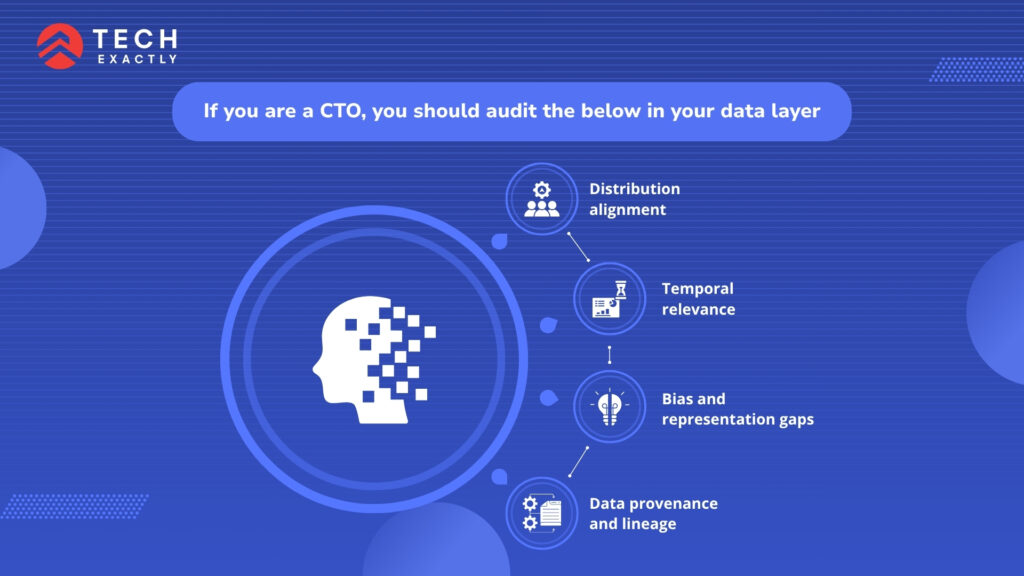

If you are a CTO, you should audit the following in your data layer:

Distribution alignment: Does your training data reflect the actual distribution of inputs your production system will receive? Models trained on curated datasets consistently underperform on real-world data that contains noise, edge cases, and formats the training pipeline has never encountered.

Temporal relevance: When was the training data collected? Models trained on data from 18 months ago may have learned patterns that no longer hold, particularly in fast-moving domains like financial fraud, clinical guidelines, or customer behavior.

Bias and representation gaps: Are specific demographic groups, geographies, or use cases underrepresented in your training data? Gaps here produce systematically worse performance for certain user segments.

Data provenance and lineage: Can you trace every data point in your training set back to its source, verify its collection method, and confirm it was obtained with appropriate consent? In regulated industries, this is a legal requirement.

Insight from Tech Exactly: When we were building an AI integration for an SMB client, we discovered midway through the project that their product database had 40% duplicate entries and inconsistent category naming across four years of manual imports. The AI recommendations were confidently wrong because the data feeding them was a mess. We stopped, audited everything, and fixed the foundation before writing another line of model code. That decision added two weeks and saved the entire project.

Is Your Model Actually Doing What You Think It’s Doing at Production Scale?

Model validation during development happens under controlled conditions. Production doesn’t care about controlled conditions. This section of the artificial intelligence audit is about verifying model behavior under conditions that more closely resemble the real world.

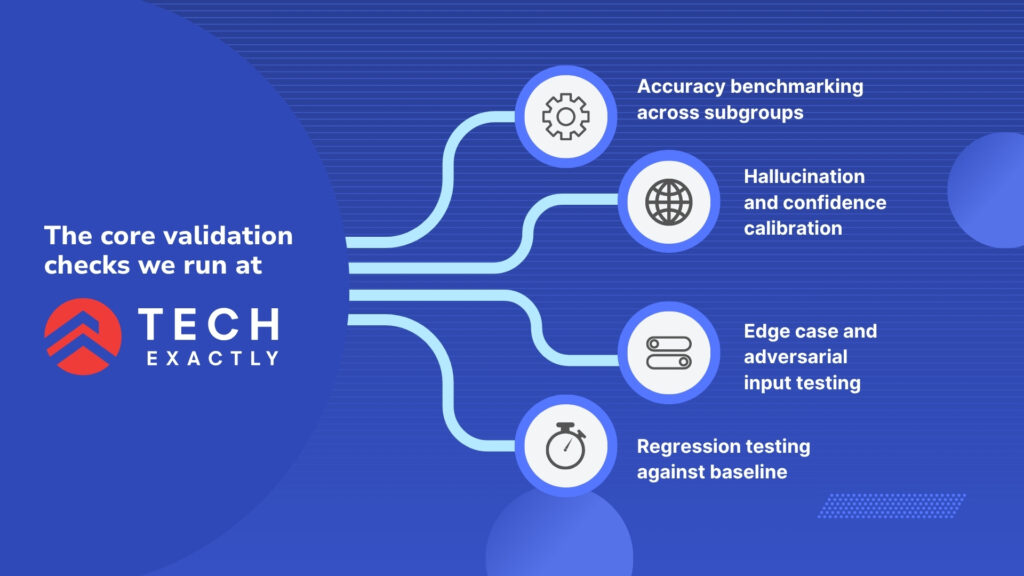

The core validation checks we run at Tech Exactly:

Accuracy benchmarking across subgroups:

Overall accuracy metrics hide performance gaps across specific input types, user groups, or contexts. Audit accuracy separately for each meaningful subgroup and set minimum acceptable thresholds before launch (not after a user complaint surfaces the gap).

Hallucination and confidence calibration:

For generative AI systems, does the model’s expressed confidence correlate with its actual accuracy? A model that says “I’m not sure” when it’s uncertain is safer than one that produces confident, authoritative outputs regardless of actual reliability.

Edge case and adversarial input testing:

What happens when the model receives inputs it was never trained on? What happens when a user deliberately tries to manipulate the output? Prompt injection, data poisoning, and adversarial inputs are documented attack vectors that any AI chatbot development company or AI powered mobile app development company must test against before production.

Regression testing against baseline:

If this AI system is replacing or augmenting an existing process, benchmark its outputs against the baseline. “Better than random” isn’t good enough; you need to know whether it’s better than what users currently rely on.

Can Your Infrastructure Handle Production Traffic

We’ve watched teams optimize their model for weeks and then spend months firefighting infrastructure failures they never tested for. The artificial intelligence audit must include a load and performance evaluation that treats production conditions as the baseline.

Infrastructure audit checklist:

- Latency under load: What is your p95 and p99 inference latency at expected production traffic volumes? Users expect AI responses in under two seconds. Exceeding that consistently destroys adoption.

- Failure mode behavior: What happens when the model API goes down? When does the vector database time out? When does the inference queue back up? Does the system fail gracefully with a sensible fallback, or does it fail silently and produce incorrect outputs?

- Scalability ceiling: What is the maximum concurrent request volume your current architecture can handle before performance degrades? Have you tested beyond that ceiling to understand the failure mode?

- Cost modeling at scale: Token costs, inference compute, and vector database queries that look manageable in a pilot can multiply unpredictably in production. Run cost projections at 10x, 50x, and 100x current volume before launch.

Related reading → How to Build AI-Powered SaaS Applications

Is Your AI System Secure Against the Threats Specific to AI

Standard OWASP security practices are necessary but not sufficient for AI systems. There’s a specific threat model that applies to LLM-based and AI-driven applications that most security checklists don’t cover and that every AI app development company in USA working in production needs to address before launch.

The OWASP LLM Top 10 2025/2026 is the definitive industry standard for identifying and mitigating the most critical security vulnerabilities in LLM applications, including prompt injection, data leakage, and excessive agency.

AI-specific security checks:

Prompt injection defense: Can a user manipulate the system prompt through carefully crafted inputs to override intended behavior, access restricted information, or cause the model to perform actions outside its intended scope? This is the most commonly exploited AI vulnerability in production systems today.

Data exfiltration via model outputs: Can a user craft inputs that cause the model to reproduce training data, expose other users’ data, or leak system configuration details? This is especially critical for an AI chatbot development company that builds where the conversational interface creates a natural attack surface.

Excessive agency controls: For agentic AI systems with tool use or write-back capabilities, does the system have granular controls on what actions it can take, on whose behalf, and with what approval requirements? Autonomous action without scope limits is a security incident waiting to happen.

Audit trail completeness: Can you reconstruct exactly what happened in any AI interaction: the input, the model version, the context retrieved, the output produced, and any actions taken? A cornerstone of all three frameworks – EU AI Act, NIST AI RMF, and OWASP is the requirement for comprehensive, immutable audit trails. You cannot secure or audit what you cannot see.

Related reading → Security Architecture for Healthcare Apps

Does Your AI System Meet the Compliance Requirements of the Markets You’re Deploying Into?

Ai driven audit readiness in 2026 means understanding that different markets have different requirements and that “we’ll deal with compliance later” is a strategy that consistently produces expensive retrofits and delayed launches.

Framework | Who It Applies To | Key Requirement | Enforcement |

EU AI Act | Any AI deployed to EU users | Risk classification, human oversight, and audit logs | August 2026, fines up to 7% of global revenue |

NIST AI RMF | US federal contractors have widely adopted privately | GOVERN, MAP, MEASURE, MANAGE functions | Voluntary but increasingly contractually required |

HIPAA (AI layer) | Healthcare AI handling PHI | BAA coverage for AI vendors, audit logging | Active enforcement, $100–$50,000 per violation |

SOC 2 Type II | B2B SaaS with enterprise buyers | AI usage controls are now standard in audits | Required for enterprise sales cycles |

ISO/IEC 42001 | Organizations seeking certifiable AI governance | AI management system documentation | Voluntary, increasingly required by procurement |

You might also like reading: How SMBs Can Integrate AI into Existing Software Systems

The Complete Pre-Production AI Audit Checklist

Before any AI system we build at Tech Exactly goes live, whether it’s for a healthcare platform, a FinTech application, or an enterprise SaaS product, it clears every item on this checklist. Use it as your own ai audit gate:

Data Layer

☐ Training data distribution documented and validated against expected production inputs

☐ Temporal relevance confirmed for data recency appropriate for the domain

☐ Bias and representation gaps identified and mitigated

☐ Data provenance and lineage fully documented

Model Performance

☐ Accuracy benchmarked across all meaningful subgroups

☐ Confidence calibration validated and expressed confidence correlates with actual accuracy

☐ Edge case and adversarial input testing completed

☐ Regression benchmarking against the current baseline process

Infrastructure

☐ P95 and p99 latency validated under production-equivalent load

☐ Failure modes documented with graceful degradation confirmed

☐ Scalability ceiling identified and tested beyond

☐ Cost projections modeled at 10x, 50x, 100x current volume

Security

☐ Prompt injection testing completed and mitigations documented

☐ Data exfiltration attack vectors tested and closed

☐ Excessive agency controls implemented and validated

☐ Immutable audit trail confirmed for all AI interactions

Compliance

☐ Risk classification under the EU AI Act confirmed

☐ BAA coverage confirmed for all PHI-touching AI vendors (if applicable)

☐ Model versioning documented to enable retrospective output attribution

☐ Human oversight mechanisms defined, documented, and tested

Monitoring

☐ Data drift detection configured with alert thresholds

☐ Output quality sampling process established

☐ Bias monitoring schedule defined

☐ Cost anomaly alerting configured

What Happens When You Skip the Audit

We’ll close with a number that should make every technical leader pause: Large enterprises lose an average of $7.2M per failed AI initiative and abandon 2.3 initiatives in 2025 alone. The cost of a structured pre-production ai audit, typically 2–4 weeks of engineering time, is a fraction of that. The cost of discovering a security vulnerability, a compliance gap, or a systematic model failure after launch is not.

The teams shipping production AI that users trust are not the ones moving fastest to launch. They’re the ones who move deliberately through an artificial intelligence audit process that treats every gap as a known risk, not a surprise to be managed after the fact.

At Tech Exactly, we bring this audit discipline to every AI engagement, whether we’re building as an AI app development company in USA, functioning as an AI powered mobile app development company on a mobile-first product, or operating as an AI chatbot development company for customer-facing conversational AI. If you’re preparing an AI system for production and want a partner who treats audit readiness as a product requirement, let’s talk.

Let's Start Your Project Today

Ready to build your App with us? Reach out now – our experts are just one click away.

Frequently Asked Questions

An AI audit is a structured pre-production evaluation that verifies your system is safe, accurate, and compliant before real users touch it. It covers five domains:

- Data quality: Is your training data clean, recent, and representative of real-world inputs?

- Model behavior: Does accuracy hold across edge cases, subgroups, and adversarial inputs?

- Infrastructure: Does the system perform under production load, not just demo conditions?

- Security: Are prompt injection, data exfiltration, and excessive agency risks tested and closed?

- Compliance: Are audit trails, human oversight mechanisms, and regulatory requirements documented and verified?

Standard software testing checks that deterministic code produces expected outputs. AI auditing is different because AI systems are probabilistic. They can produce subtly wrong outputs that don't trigger any error monitoring, and they degrade silently as data distributions shift after launch. Testing a set of cases isn't enough. You need to validate behavior across input distributions, configure continuous drift detection, and build the monitoring infrastructure that tells you when something starts going wrong, before users do.

More than most teams realize:

- EU AI Act (enforcement August 2026): risk classification, human oversight, immutable audit logs, fines up to 7% of global annual revenue for high-risk systems.

- NIST AI RMF: widely adopted across US federal contractors and increasingly required in enterprise procurement contracts.

- California AI Transparency Rules have been in effect since January 2026.

- SOC 2 Type II: auditors now routinely include AI usage controls as standard assessment items.

- HIPAA (for healthcare AI): BAA coverage required for every AI vendor touching PHI, with per-violation fines up to $50,000.

For a system with clean data documentation and an existing test harness 2 to 4 weeks. For systems with data quality issues, missing infrastructure documentation, or compliance requirements retrofitted after the build, expect 6 to 10 weeks, most of which is remediation time, not evaluation time. The ai audit itself is fast. Fixing what surfaces is where the time goes. That's exactly why we run it before launch, not after.

AI driven audit readiness means your organization has the systems, documentation, and processes in place to satisfy an auditor's questions at any point, not just when an audit is scheduled. It includes a live AI use case registry, versioned model documentation, immutable interaction logs, bias monitoring reports, and a defined incident response plan for AI failures. In 2026, screenshots and declarations are not sufficient evidence.

Manas Das, Mobile App Architect at Tech Exactly, has over 9 years of experience leading teams in iOS, Android, and cross-platform development. He specialises in scalable app architecture and GenAI-driven mobile innovation.