Build vs. Integrate AI in Healthcare Apps: A CTO’s Framework

Key Takeaways

- The build-vs-integrate decision in healthcare AI is not a one-time call; it evolves with your data maturity, regulatory posture, and product stage.

- Integration is the right default for conversational AI, workflow features, and MVPs where speed and budget matter.

- Custom AI builds are justified when you have proprietary data, need clinical-grade accuracy, or are pursuing FDA SaMD clearance.

- Most mature health-tech products run a hybrid: integrated LLMs for the conversational layer, custom models for the clinical core.

- Before deciding anything, run an AI readiness audit across four dimensions: data maturity, use-case clarity, regulatory scope, and engineering capacity.

Let me start with a scenario, because it captures this better than any framework slide.

A digital health founder, Series A, solid traction, a care navigation platform serving mid-size employer groups, called us last year in the middle of a board meeting. His CTO had just told the board they needed six months and $400K to build a proprietary AI model for their member engagement chatbot. A competing vendor had offered an integration that could go live in eight weeks for a fraction of the cost. The board wanted to know: which one?

As an AI app development company in the USA, we felt that neither option, as presented, was right. The reason: nobody had asked the right questions first.

That tension, build vs. integrate, is one of the most consequential architectural decisions a healthcare CTO makes today. Get it right, and you ship faster, spend smarter, and build something defensible. Get it wrong, and you either burn runway on a custom model your team isn’t ready for, or you wake up two years later entirely dependent on a third-party API that now holds your product’s core intelligence hostage.

Our founder, Hitesh, has watched this play out across dozens of health-tech engagements. His take: “Most teams make this decision based on what sounds impressive in a board deck, not what their product actually needs right now.” The companies that get it right treat this as a framework question, one with a clear, answerable logic, not a gut call.

That’s exactly what this post is. A CTO’s framework for making this decision with confidence, covering AI readiness, regulatory exposure, cost structure, use-case mapping, and the hybrid architecture that most mature healthcare AI products eventually land on.

Why This Decision Is More Nuanced in Healthcare Than Any Other Vertical

Healthcare isn’t a typical SaaS vertical. You’re not just shipping features; you’re operating in a space where a wrong AI output can harm a patient, expose your company to HIPAA liability, or trigger an FDA audit. That context changes everything about how you evaluate integrating AI vs. building it.

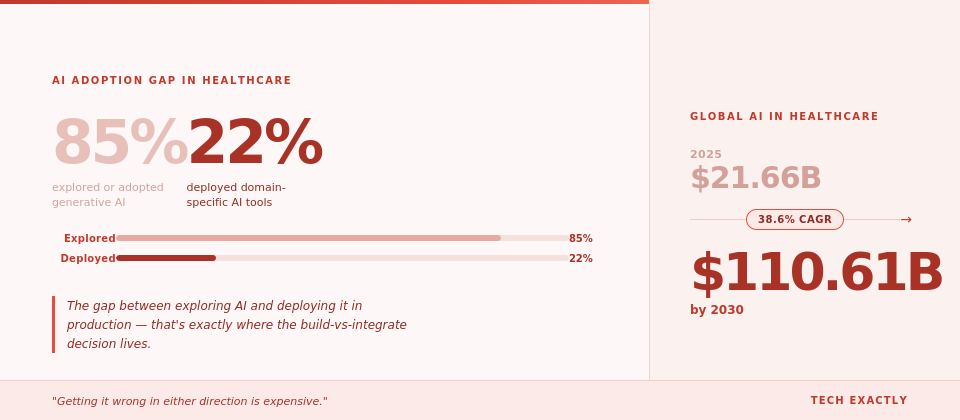

And the stakes are only growing. The global AI in healthcare market is projected to grow from $21.66 billion in 2025 to $110.61 billion by 2030 at a CAGR of 38.6%, which means competitive pressure to ship AI-powered features is only intensifying. But here’s the part that doesn’t make it into the press releases: while 85% of healthcare organizations have explored or adopted generative AI in some form, only 22% have actually implemented domain-specific AI tools. That gap between exploring AI and deploying it in production is precisely where the build-vs-integrate decision lives, and getting it wrong in either direction is expensive.

The general tech playbook says: don’t build what you can buy. Fair advice, unless what you’re buying introduces PHI risk, lacks explainability, or can’t be audited by a compliance team. In healthcare, the “buy/integrate” default comes with asterisks that most AI vendors bury in their ToS.

On the other hand, building custom AI from scratch is expensive, slow, and risky if your data pipelines aren’t mature. We’ve seen well-funded health-tech companies burn 12–18 months building proprietary models for use cases that a fine-tuned open-source model could have solved in six weeks.

Neither extreme is right. The right answer depends on a framework, and we’re going to walk you through ours.

First, Run an AI Readiness Check Before You Decide On Anything

Before you debate build vs. integrate, you need to be honest about where your product actually stands. We call this an AI readiness audit, and it covers four dimensions:

1. Data maturity

Do you have enough clean, labeled, structured clinical data to train or fine-tune a model? If your data lives in PDFs, inconsistent EHR exports, or hasn’t been de-identified, you’re not ready to build anything meaningful yet.

2. Use-case clarity

Is your AI use case well-defined? “AI-powered diagnostics” is not a use case. “Flagging abnormal lab values in diabetic patients and triggering a care manager alert” is. The more specific it is, the easier it is to evaluate whether an existing model solves it.

3. Regulatory scope

Does your use case fall under the FDA’s Software as a Medical Device (SaMD) guidelines? If yes, building custom AI means you’re also building a regulatory strategy. If you’re integrating a third-party model, you still own the clinical validation burden.

4. Engineering capacity

Do you have ML engineers on staff, or are you a product-and-full-stack team? This determines whether “build” is even on the table without outside help.
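
The four dimensions above can be captured as a simple checklist. The sketch below is one possible way to record the answers and turn them into findings; the class name, field names, and finding texts are illustrative assumptions, not a standard audit format.

```python
from dataclasses import dataclass


@dataclass
class ReadinessAudit:
    """Answers to the four readiness questions (all field names are illustrative)."""
    data_is_clean_and_deidentified: bool  # 1. Data maturity
    use_case_is_specific: bool            # 2. Use-case clarity
    falls_under_samd: bool                # 3. Regulatory scope
    has_ml_engineers: bool                # 4. Engineering capacity


def summarize(audit: ReadinessAudit) -> list[str]:
    """Return a plain-language finding for each dimension that needs attention."""
    findings = []
    if not audit.use_case_is_specific:
        findings.append("Scope the use case to one specific sentence first.")
    if not audit.data_is_clean_and_deidentified:
        findings.append("Data is not ready for training or fine-tuning.")
    if not audit.has_ml_engineers:
        findings.append("No ML engineers on staff; building needs outside help.")
    if audit.falls_under_samd:
        findings.append("SaMD scope: plan for clinical validation on either path.")
    return findings
```

An audit with no findings does not settle the decision by itself; it only tells you that both paths are realistically open.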

We wrote a detailed post on how to evaluate if your product is ready for AI; it’s worth a read if you’re still unsure where you stand before making this call.

The Case for Integrating AI: When It’s the Right First Move

For most healthcare startups and early-scale companies, integrating AI is the right starting point, but only for the right use cases.

Here’s where integration wins:

- Generative and conversational use cases. If you’re building an AI chatbot for healthcare, a patient-facing symptom checker, a care coordinator assistant, or an insurance pre-auth bot, integrating a foundation model like GPT-4, Claude, or a healthcare-tuned variant (like Med-PaLM) is almost always faster, cheaper, and good enough. The models are already trained on vast medical literature. Your job is to provide prompt engineering, guardrails, and workflow design.

- Speed-to-market pressure. If you need to ship a validated MVP in 90 days, you don’t have time to train a model. Integration gives you a functional, demonstrable AI feature quickly, which matters when you’re raising your next round or closing an enterprise pilot.

- Lower-stakes support features. AI-powered scheduling, documentation summarization, billing code suggestions, or patient education content are real clinical workflow improvements that don’t require proprietary models. A well-configured integration with a reliable LLM provider handles them well.

- Budget constraints. Building custom AI models is capital-intensive. For context, when we break down healthcare app development costs by type, AI model development adds a significant layer on top of already complex infrastructure costs. Integration is a leaner path while you validate PMF.

💡 The trap to avoid: treating integration as a permanent strategy when your use case eventually demands model control. Many teams integrate first, ship, and then realize 18 months later that they need to build, and now they have technical debt layered on top.

When to Build Custom AI

Building proprietary AI in healthcare is worth the investment when three conditions are true: your data is unique, your use case is defensible, and your team has the capacity (or the right partner).

When your data is your moat.

If you’ve spent years aggregating condition-specific patient data that doesn’t exist in any public dataset (rare disease phenotypes, longitudinal behavioral health records, specialized imaging data), a custom-trained model is the only way to fully leverage it. No integration will give you that edge.

When clinical accuracy is non-negotiable.

Off-the-shelf models hallucinate. In consumer apps, that’s annoying. In clinical decision support, it’s dangerous. If your AI use case sits in a high-stakes diagnostic or treatment pathway, you need a model you’ve validated on your specific patient population, not a general model’s best guess.

When you need full explainability.

Payers, hospital systems, and regulators increasingly want to know why an AI made a recommendation. Black-box integrations often can’t give you that. Custom models can be built with explainability baked in, a requirement that’s becoming table stakes in enterprise health deals.

When regulatory strategy demands it.

If you’re pursuing a De Novo or 510(k) clearance, you’ll need to document your model’s training data, validation methodology, and performance benchmarks anyway. At that point, building custom gives you control over the exact evidence package you’ll need to submit.

We see this pattern often in behavioral health, where the build-or-buy decision involves unique population-level considerations that generic models simply aren’t calibrated for.

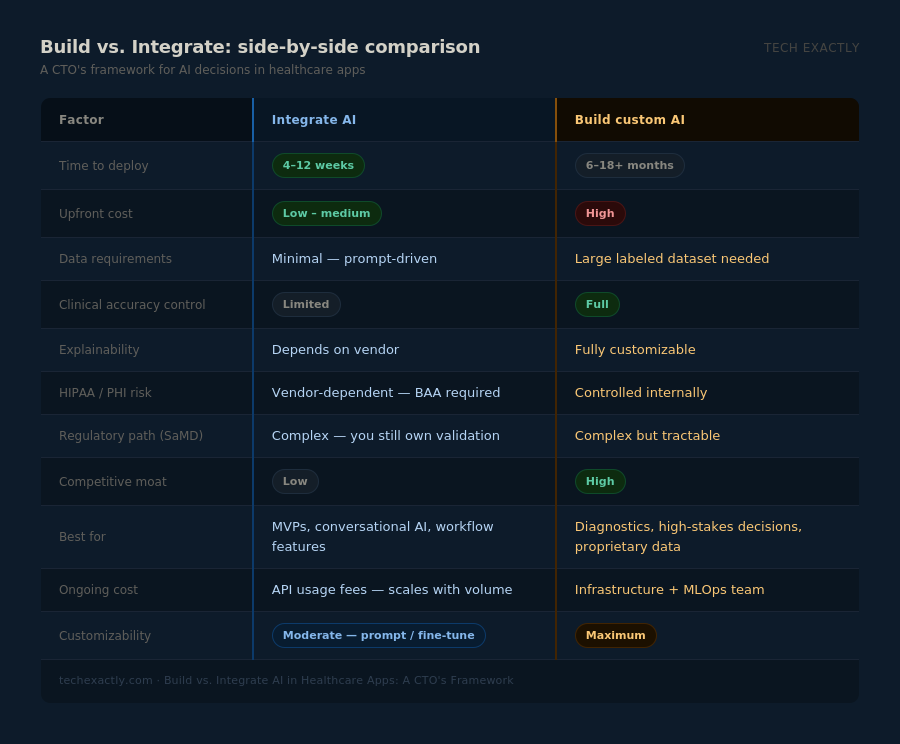

Build vs. Integrate: Side-by-Side Comparison

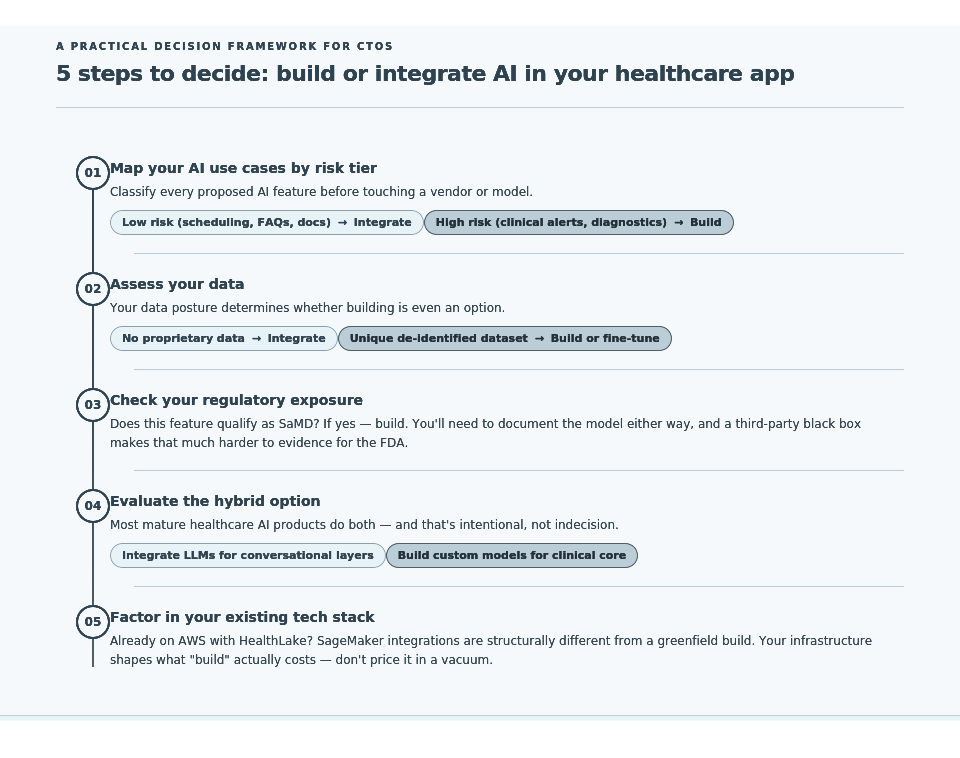

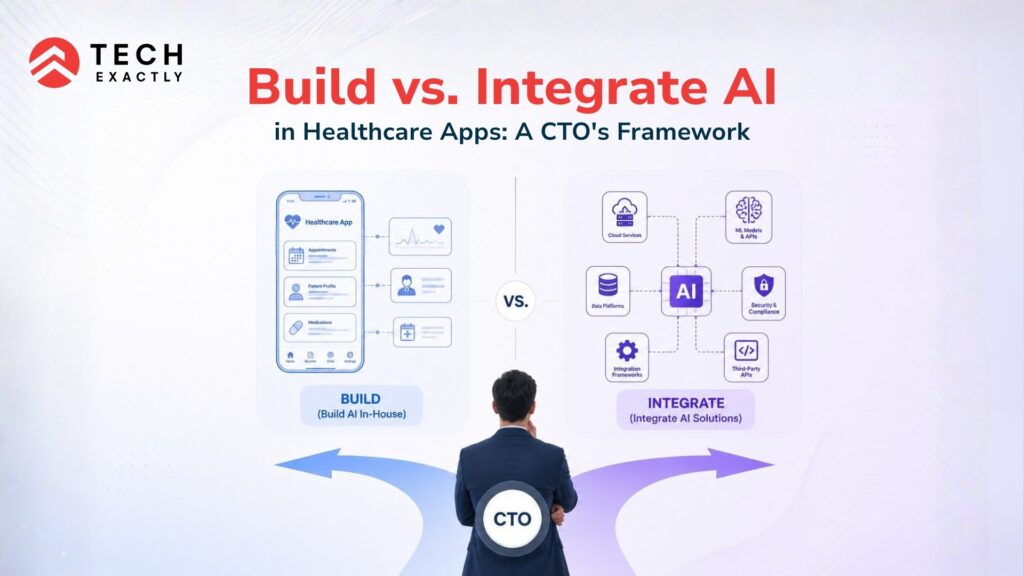

A Practical Decision Framework for CTOs

Knowing when to integrate AI and when to build it is what separates healthcare products that scale intelligently from ones that quietly accumulate technical and compliance debt. If you’re working with an AI app development company in the USA or evaluating whether to bring this capability in-house, the biggest mistake we see is jumping straight to tooling decisions (which model, which vendor, which cloud) before the strategic logic is settled.

Here’s how we at Tech Exactly structure the actual decision:

Step 1 — Map your AI use cases by risk tier.

Low risk (scheduling, documentation, patient FAQs) → integrate. High risk (clinical alerts, diagnosis support, medication flagging) → evaluate building seriously.

Step 2 — Assess your data.

No proprietary data? Integrate. Unique, de-identified, longitudinal dataset? Build or fine-tune.

Step 3 — Check your regulatory exposure.

Does this feature qualify as SaMD? If yes, build, because you’ll need to document the model either way, and a third-party black box makes that harder.

Step 4 — Evaluate the hybrid option.

Most mature healthcare AI products we work with do both: they integrate LLMs for the conversational layers (patient chatbot, care manager assistant) while building custom models for the clinical core (risk scoring, anomaly detection). This is often the right architecture for Series A and beyond.

Step 5 — Factor in your tech stack and the technologies underpinning your healthcare app.

If you’re already on AWS and using HealthLake, building integrations with SageMaker-hosted models is structurally different than if you’re a greenfield team starting from scratch. Your existing infrastructure shapes what “build” actually costs.
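
To make the logic of Steps 1 to 4 concrete, here is a minimal sketch of the decision as code. The function names, the `Path` enum, and the boolean inputs are our own illustrative assumptions; a real assessment involves judgment that three flags can't capture, and Step 5 (existing infrastructure) is deliberately left out because it changes cost rather than direction.

```python
from enum import Enum


class Path(Enum):
    INTEGRATE = "integrate"
    BUILD = "build or fine-tune"
    HYBRID = "hybrid"


def recommend(high_risk: bool, has_proprietary_data: bool, is_samd: bool) -> Path:
    """First-pass recommendation for a single AI use case (Steps 1 to 3)."""
    if is_samd:
        # Step 3: the model must be documented either way, so favor control.
        return Path.BUILD
    if high_risk and has_proprietary_data:
        # Steps 1 and 2: a high-stakes feature backed by a unique dataset.
        return Path.BUILD
    # Low-risk feature, or no proprietary data to train on yet.
    return Path.INTEGRATE


def recommend_portfolio(use_cases: dict[str, tuple[bool, bool, bool]]) -> Path:
    """Step 4: mixed per-feature answers point to a hybrid architecture."""
    if not use_cases:
        raise ValueError("At least one use case is required")
    paths = {recommend(*flags) for flags in use_cases.values()}
    return paths.pop() if len(paths) == 1 else Path.HYBRID


# Each tuple is (high_risk, has_proprietary_data, is_samd).
features = {
    "patient FAQ chatbot": (False, False, False),
    "risk scoring": (True, True, False),
}
print(recommend_portfolio(features))  # Path.HYBRID
```

Note that a high-risk use case without proprietary data still comes back as "integrate" here, which mirrors Step 2: without data there is nothing to train on, so the guardrails around the integration carry the weight.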

What We’ve Seen Work (and What Hasn’t)

Working as an AI app development company in the USA across digital health, telemedicine, and behavioral health platforms, we’ve shipped both kinds of products. Here’s what Hitesh always comes back to when this debate surfaces internally:

“The question isn’t build or buy, it’s where does your product’s intelligence need to be irreplaceable? Protect that. Integrate everything else.”

The healthcare companies we’ve seen struggle are the ones that tried to build everything from scratch before they had validated their clinical workflows, and the ones that integrated everything without thinking about what happens when their AI vendor raises prices, changes their API, or gets acquired.

The ones who’ve thrived found the line between core and commodity. They integrated the commodity layers (conversational AI, documentation, scheduling) and built the core (their proprietary risk model, their condition-specific scoring logic, their population health engine).

If you’re running an AI-powered mobile app development strategy in healthcare and haven’t drawn that line yet, it’s worth doing now, before your architecture decides for you.
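
One way to draw the core-versus-commodity line in code is a small routing table that sends each feature either to an integrated LLM or to a model you own. The sketch below is a simplified illustration: the feature names, handler functions, and payload shapes are hypothetical placeholders, and the handlers return stub data instead of calling any real vendor or model.

```python
from typing import Callable, Dict

# A handler takes a request payload and returns a response payload.
Handler = Callable[[dict], dict]


def vendor_llm_handler(payload: dict) -> dict:
    """Placeholder for a call to an integrated third-party LLM (commodity layer)."""
    return {"source": "integrated_llm", "echo": payload}


def in_house_risk_model(payload: dict) -> dict:
    """Placeholder for a custom model you own and validate (core layer)."""
    return {"source": "custom_model", "echo": payload}


# Commodity features go to the integration; core features go to the in-house model.
ROUTES: Dict[str, Handler] = {
    "patient_chat": vendor_llm_handler,
    "documentation_summary": vendor_llm_handler,
    "scheduling": vendor_llm_handler,
    "risk_scoring": in_house_risk_model,
    "condition_scoring": in_house_risk_model,
}


def handle(feature: str, payload: dict) -> dict:
    """Dispatch a request by feature name; unknown features fail loudly."""
    try:
        handler = ROUTES[feature]
    except KeyError:
        raise ValueError(f"No route defined for feature {feature!r}") from None
    return handler(payload)
```

Keeping the vendor behind a single handler also limits the blast radius if that vendor raises prices or changes its API: in this layout, only one function has to change.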

How to Start Integrating AI Into Your Healthcare App

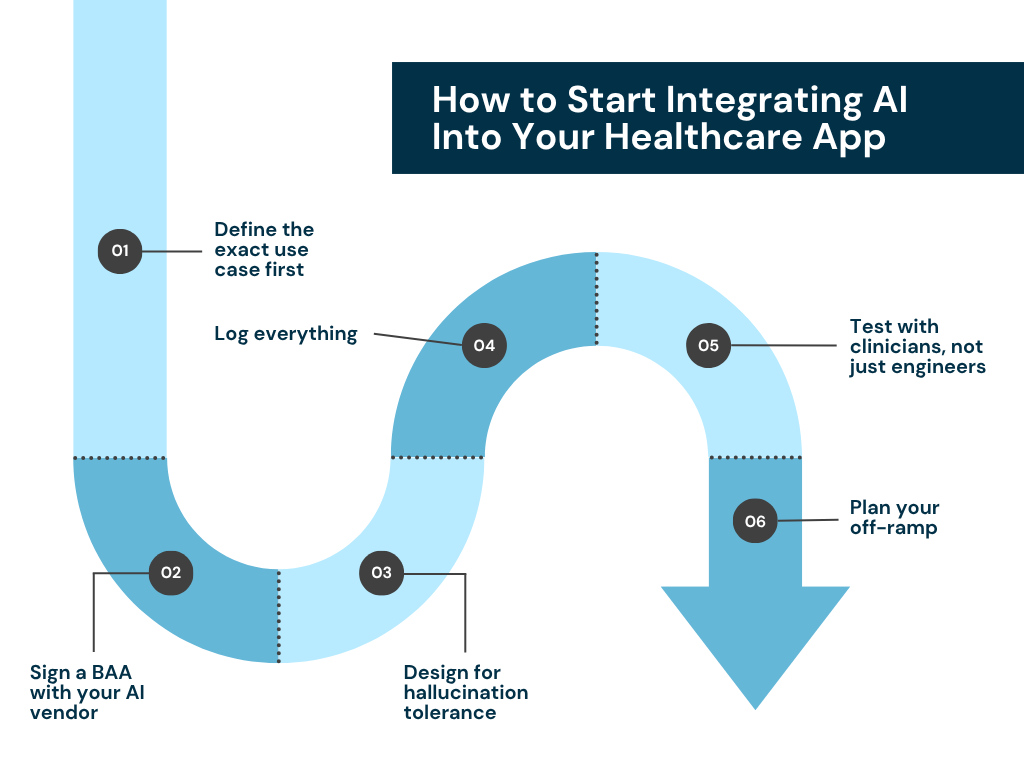

If you’ve decided that integration is your immediate path, here’s a practical starting checklist for how to integrate AI into an app in a healthcare context:

- Define the exact use case first. Don’t integrate “AI”; integrate a model for a specific, scoped function.

- Sign a Business Associate Agreement (BAA) with your AI vendor. OpenAI, Anthropic, Google, and AWS all offer these, typically on enterprise tiers. No BAA = no PHI in the prompt.

- Design for hallucination tolerance. Build output validation, confidence thresholds, and human-in-the-loop review for anything clinical.

- Log everything. Audit trails are non-negotiable in healthcare. Every AI input/output needs to be logged with timestamps and user context.

- Test with clinicians, not just engineers. Your QA process needs clinical SMEs who can catch errors that a developer would miss.

- Plan your off-ramp. If this integration becomes a dependency, what’s your strategy if the vendor changes terms? Design with portability in mind from day one.
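
Several of the checklist items above (the BAA gate, human-in-the-loop review, and audit logging) can live in one thin wrapper around the model call. The sketch below is a minimal illustration under stated assumptions: `guarded_call`, its parameters, and the 0.8 threshold are hypothetical, and it assumes your model wrapper returns a confidence score alongside the text, which many LLM APIs do not provide out of the box.

```python
import json
import logging
from datetime import datetime, timezone
from typing import Callable

audit_log = logging.getLogger("ai_audit")

# Hypothetical default; the right threshold should be set with clinical reviewers.
CONFIDENCE_THRESHOLD = 0.8


def guarded_call(
    model: Callable[[str], tuple[str, float]],
    prompt: str,
    user_id: str,
    *,
    contains_phi: bool,
    is_clinical: bool,
    vendor_has_baa: bool,
) -> dict:
    """Call a model behind a BAA gate, a review gate, and an audit log."""
    if contains_phi and not vendor_has_baa:
        # No BAA = no PHI in the prompt.
        raise PermissionError("PHI blocked: no BAA on file for this vendor")

    # Assumption: the wrapped model returns (text, confidence between 0 and 1).
    answer, confidence = model(prompt)

    # Anything clinical, or anything low-confidence, goes to a human reviewer.
    needs_review = is_clinical or confidence < CONFIDENCE_THRESHOLD

    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "prompt": prompt,
        "answer": answer,
        "confidence": confidence,
        "needs_review": needs_review,
    }
    # Every input/output pair is logged with a timestamp and user context.
    audit_log.info(json.dumps(record))
    return record
```

If prompts can contain PHI, the audit log contains PHI too, so the log store needs the same protection as the rest of your patient data.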

Final Thought

Build vs. integrate isn’t a one-time decision; it evolves with your product. Many of the best healthcare AI companies we work with started as pure integrations, proved clinical value, raised capital, and then selectively built proprietary models where it mattered most.

What matters is that you make the decision consciously, based on your data maturity, use-case risk, regulatory posture, and engineering reality. Not because integrating felt faster or because building felt more impressive.

If you’re mapping out your AI strategy and want a second opinion from a team that’s navigated this across behavioral health, telemedicine, and chronic disease platforms, we’d be glad to think it through with you. As an AI chatbot app development company and custom model partner, we’ve helped CTOs on both sides of this decision, and we’re pretty honest about which path makes sense when.

The right call depends on your product. Let’s figure out which one that is. Book a call with us now.

Frequently Asked Questions

How do I know if my healthcare product is ready for AI?

AI readiness in healthcare comes down to four things: clean and sufficient data, a clearly scoped use case, an understanding of your regulatory exposure, and the engineering capacity to support the integration. If your data is unstructured or not de-identified, you're not ready to build, and even integration needs guardrails. We cover the full readiness checklist in our AI readiness evaluation guide, but the short answer is: if you can't describe your AI use case in one specific sentence, start there before you write a single line of code.

What is the difference between integrating AI and building custom AI?

Integrating AI means connecting your app to an existing model or API (think OpenAI, Anthropic, or a healthcare-tuned LLM) and configuring it for your specific workflow through prompts, fine-tuning, or retrieval-augmented generation. Building custom AI means training or fine-tuning a model on your own proprietary dataset, giving you full control over its behavior, explainability, and clinical validation. For most healthcare apps, integration handles the conversational and workflow layers well, while custom builds are reserved for high-stakes clinical features where accuracy, auditability, and data sovereignty are non-negotiable.

How do I choose an AI development partner for a healthcare app?

Look for a partner who has shipped in regulated environments before, not just general SaaS. Key signals: experience with HIPAA-compliant architecture, understanding of FDA SaMD guidelines, prior work in your specific sub-vertical (telemedicine, behavioral health, chronic care, etc.), and the ability to handle both integration and custom model work. At Tech Exactly, we work specifically at this intersection. We're an AI-powered mobile app development company with a healthcare-first lens, which means we're not starting from a blank slate on compliance, data pipelines, or clinical workflow design.

Can an AI chatbot in a healthcare app be HIPAA-compliant?

Yes, but it requires deliberate architecture. The most important step is signing a Business Associate Agreement (BAA) with every AI vendor that touches PHI, including your LLM provider. OpenAI, Anthropic, Google, and AWS all offer BAAs for enterprise tiers. Beyond that, you need to ensure that patient data is never sent to a model in an identifiable form unless you've confirmed the vendor's compliance posture, that all AI inputs and outputs are logged with audit trails, and that your chatbot has clear scope limitations.

How long does it take to integrate AI into a healthcare app?

For a well-scoped integration (say, adding an AI documentation assistant, a patient-facing chatbot, or a billing code suggestion feature), you're typically looking at 4 to 12 weeks, depending on your existing infrastructure and data readiness. Custom AI model development for higher-stakes clinical features runs 6 to 18+ months. The biggest time variable isn't the model; it's the data preparation, compliance review, and clinical validation work that surrounds it. If you're trying to plan a realistic timeline, our breakdown of healthcare app development costs and timelines by type gives a useful reference point for scoping AI-adjacent work.

Pallabi Mahanta, Senior Content Writer at Tech Exactly, has over 5 years of experience in crafting marketing content strategies across FinTech, MedTech, and emerging technologies. She bridges complex ideas with clear, impactful storytelling.