Vibe Coding a Healthcare App Sounds Great Until Your First HIPAA Audit

Key Takeaways

- AI coding tools accelerate healthcare app development by 20-40% — but only when used within a compliance-aware workflow. The speed gains disappear if you spend months remediating HIPAA violations.

- No major AI coding tool signs a BAA. Cursor, Replit, GitHub Copilot, Bolt, and Lovable all lack Business Associate Agreements. The LLM APIs behind them (OpenAI, Anthropic, Google) do offer BAA pathways — but the tools themselves don’t.

- Vibe coding is useful for prototyping, not production. Use it to validate user flows, test UI concepts, and build investor demos. Then architect the production system properly — reusing validated patterns, not the prototype code.

- FDA SaMD classification is the compliance risk nobody in the vibe coding conversation mentions. If your health app makes clinical recommendations, it may qualify as a Software as a Medical Device — and that triggers IEC 62304 lifecycle requirements that are fundamentally incompatible with unstructured vibe coding.

Consider a founder who spends a weekend building a prototype in Cursor. By Sunday night, they have a working patient intake form, a scheduling module, and a basic telehealth video flow. The demo looks promising. The investor deck is updated, and "built a prototype in 48 hours" becomes a highlight of the pitch.

Six weeks later, someone asks about HIPAA compliance. The codebase has hardcoded test data with real patient names from a spreadsheet the founder copied over during testing. The AI-generated authentication module stores session tokens in local storage. There’s no audit logging. The video call recordings sit in an unencrypted S3 bucket with public read access because the AI suggested a permissive bucket policy, and nobody caught it.

This isn’t hypothetical. It’s a pattern we see regularly at Tech Exactly, where we build healthcare applications for startups. Founders come to us after their vibe-coded prototype runs into compliance problems, and by then remediation costs more than building the app correctly the first time around.

The tools aren’t the problem. Cursor, Claude, and GitHub Copilot genuinely accelerate development. The problem is that AI coding tools don’t understand regulatory context. They optimize for working code, not necessarily for compliant code. And in healthcare, working code that isn’t compliant is a liability — not an asset.

This guide covers how to use AI coding tools for HIPAA-compliant app development without creating a compliance debt that costs 3x to fix later.

What Vibe Coding Actually Means (And What It Doesn't)

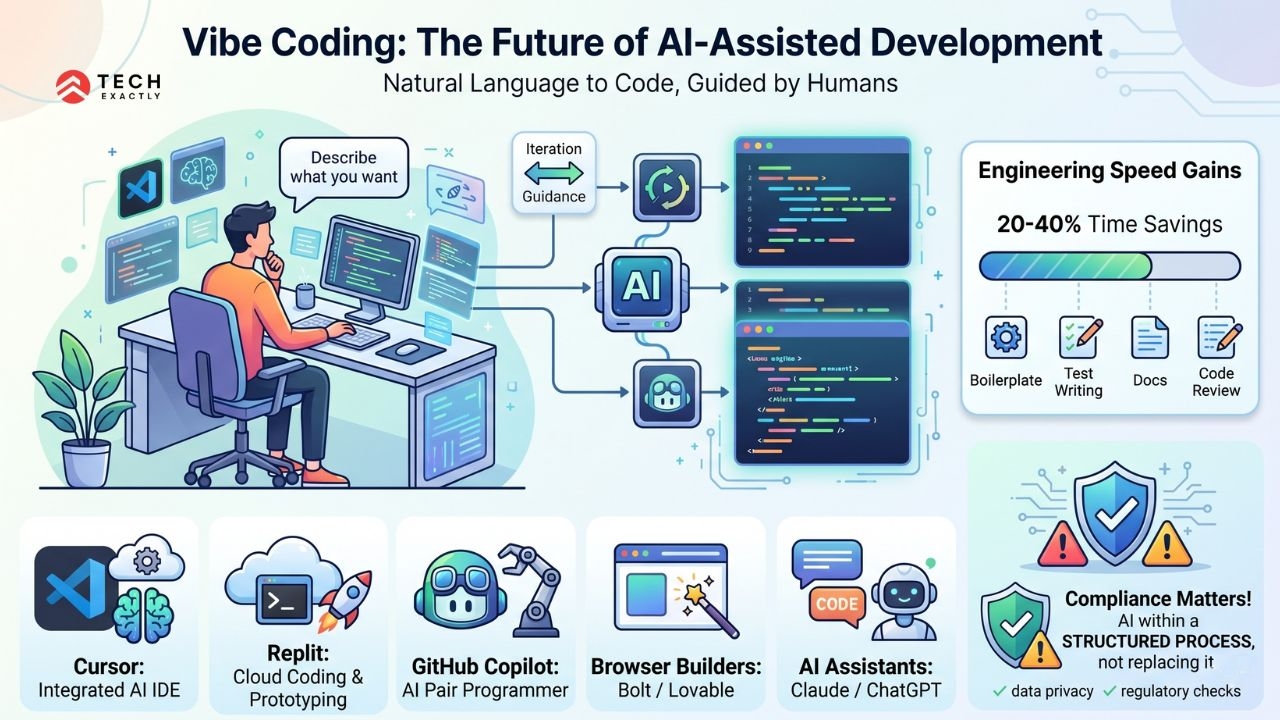

The term was coined by Andrej Karpathy in early 2025 and was later named Collins Dictionary’s Word of the Year. The idea is simple: you describe what you want in natural language, an AI generates the code, and you guide it through iteration rather than writing every line yourself.

The tools that enable this are:

- Cursor: VS Code fork with deep AI integration. The most popular tool for serious vibe coding. It has a Privacy Mode that prevents code from being used for training, but this is not the same as HIPAA compliance.

- Replit: Cloud-based IDE with AI code generation. It’s good for fast prototyping. However, it explicitly states that standard hosting is not HIPAA-compliant.

- GitHub Copilot: AI pair programmer integrated into VS Code, JetBrains, and others. The enterprise tier may offer data residency controls, but HIPAA coverage requires direct negotiation with Microsoft.

- Bolt / Lovable: Browser-based app builders with AI generation. They have zero HIPAA awareness.

- Claude Code / ChatGPT: General-purpose AI assistants used for code generation via conversation.

The speed gains are real. Tech Exactly’s engineering teams use AI tools in their workflow for boilerplate generation, test writing, documentation, and code review. We see 20-40% time savings on specific tasks. But we use them within a structured development process with compliance checkpoints, not as a replacement for that process.

The distinction matters. Using AI tools within a compliant development workflow speeds you up. Using AI tools instead of a compliant development workflow is how you end up explaining things to the HHS Office for Civil Rights.

The BAA Problem: Why Your AI Coding Tool Can't Be HIPAA Compliant

HIPAA requires a Business Associate Agreement with any vendor that handles Protected Health Information (PHI). Your cloud provider, email service, and analytics platform all need one. None of the AI coding tools offer one.

| Tool | BAA Available? | PHI-Safe? | Notes |
|---|---|---|---|
| Cursor | No | Conditional | Privacy Mode prevents training on your code, but code still processed by cloud LLMs. No BAA. Safe only if zero PHI touches the codebase during development. |
| Replit | No | No | Cloud-hosted IDE. Code lives on Replit’s servers. Explicitly not HIPAA-compliant for hosting. |
| GitHub Copilot | Not standard | Conditional | Enterprise tier has data residency options. BAA requires direct negotiation with Microsoft. |
| Bolt | No | No | No HIPAA awareness. |
| Lovable | No | No | No HIPAA awareness. |
| Claude API (Anthropic) | Yes, for qualifying customers | Yes (API only) | BAA covers API usage, not the consumer Claude.ai product. |
| OpenAI API | Yes, healthcare addendum available | Yes (API only) | Covers API usage, not ChatGPT consumer product. |
| Azure OpenAI | Yes, under Microsoft BAA | Yes | Must use HIPAA-eligible services with proper configuration. |
| Google Vertex AI | Yes, under Google Cloud BAA | Yes | Must be within HIPAA-covered services. |
| AWS (hosting) | Yes | Yes | HIPAA-eligible services only, properly configured. |
| Supabase | Yes, BAA + HIPAA add-on | Yes | Requires both BAA execution and HIPAA add-on activation. |

AI coding tools don’t sign BAAs. However, the LLM APIs and cloud infrastructure behind them can. This means you can build a HIPAA-compliant app using AI-assisted development. But you need to architect the workflow so that PHI never touches the non-compliant tools.

💡 Expert Tip: The safest approach for AI-assisted healthcare development is to use Cursor with Privacy Mode enabled for code generation, and to make sure your development environment uses only synthetic test data (tools like Synthea generate realistic but fake patient records). PHI stays in production environments behind your cloud provider’s BAA, and the AI tools never see it.

Let's Start Your Project Today

Ready to build your Healthcare App with us? Reach out now – our experts are just one click away.

What AI-Generated Code Gets Wrong in Healthcare Apps

AI coding tools generate functional code, but functional isn’t the same as compliant. Here are the specific patterns we catch when reviewing vibe-coded healthcare prototypes.

Authentication and Session Management

What AI generates: basic JWT authentication with tokens stored in localStorage, session timeouts of 24 hours or none at all, and no automatic logoff.

What HIPAA requires: automatic session termination after a period of inactivity (the automatic logoff specification under the Security Rule’s Access Control standard, §164.312(a)(2)(iii)), tokens stored in httpOnly cookies rather than localStorage, multi-factor authentication for users accessing PHI, and session logging with timestamps.

Remediation cost: around $3,000-$8,000 to retrofit proper session management into an existing codebase.
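The two session fixes above (idle timeout and httpOnly cookies) can be sketched with the Python standard library alone. This is a minimal illustration, not a full session system: the 15-minute window is an assumed policy value (HIPAA does not name a number), and `session_is_active` and `build_session_cookie` are hypothetical helper names.

```python
from datetime import datetime, timedelta, timezone
from http.cookies import SimpleCookie

# Assumed policy value; choose yours from your own risk assessment.
IDLE_TIMEOUT = timedelta(minutes=15)


def session_is_active(last_activity, now, idle_timeout=IDLE_TIMEOUT):
    """Return True while the session has been used within the idle window."""
    return now - last_activity < idle_timeout


def build_session_cookie(session_id):
    """Build a Set-Cookie header value that browser JavaScript cannot read."""
    cookie = SimpleCookie()
    cookie["session_id"] = session_id
    morsel = cookie["session_id"]
    morsel["httponly"] = True      # not reachable via document.cookie
    morsel["secure"] = True        # only sent over HTTPS
    morsel["samesite"] = "Strict"  # not sent on cross-site requests
    morsel["path"] = "/"
    morsel["max-age"] = int(IDLE_TIMEOUT.total_seconds())
    return morsel.OutputString()


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    print(session_is_active(now - timedelta(minutes=20), now))  # False
    print(build_session_cookie("opaque-session-id"))
```

The server should check `session_is_active` on every request and refresh the timestamp only after the check passes. The cookie's `Max-Age` is a backstop, not the enforcement point.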

Audit Logging

What AI generates: application-level logging for debugging, such as console.log, error tracking via Sentry, and maybe some basic request logging.

What HIPAA requires: a complete audit trail tracking who accessed what PHI, when, from where, and what they did with it. Audit logs must be tamper-evident, retained for 6 years, and reviewed regularly. This isn’t application logging but a compliance-grade audit system.

Remediation cost: around $5,000-$15,000 to add HIPAA-grade audit logging after the fact.
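One common way to make a log tamper-evident is a hash chain, where each entry stores a hash of its own content plus the previous entry's hash. The sketch below is an illustration under that assumption, not a complete audit system. The field names and the in-memory list are hypothetical, and production systems typically add write-once storage and retention controls on top.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _entry_hash(body, prev_hash):
    """Hash the entry body together with the previous entry's hash."""
    payload = json.dumps(body, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_audit_event(log, user_id, action, resource, source_ip, timestamp=None):
    """Append a who/what/when/where event linked to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {
        "user_id": user_id,
        "action": action,
        "resource": resource,
        "source_ip": source_ip,
        "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
    }
    entry = dict(body, prev_hash=prev_hash, hash=_entry_hash(body, prev_hash))
    log.append(entry)
    return entry


def verify_chain(log):
    """Return False if any entry was altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k not in ("prev_hash", "hash")}
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _entry_hash(body, prev_hash):
            return False
        prev_hash = entry["hash"]
    return True
```

Editing any stored field changes that entry's recomputed hash, so `verify_chain` fails from that point onward. That is the property a regular log review can check automatically.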

Data Encryption

What AI generates: HTTPS enabled (TLS in transit is good), but database encryption at rest is often missing or left on default provider encryption without explicit configuration. Encryption keys are hardcoded or kept in environment variables without rotation.

What HIPAA requires: AES-256 encryption at rest. TLS 1.2+ in transit (TLS 1.3 preferred). Encryption key management through a dedicated KMS (AWS KMS, Google Cloud KMS, Azure Key Vault) with key rotation policies. Field-level encryption for highly sensitive PHI.

Remediation cost: around $5,000-$12,000, depending on database architecture and how far unencrypted data has propagated.
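The in-transit part of this is the easiest to pin down in code. As a small sketch covering only outbound connections made from Python, the standard `ssl` module lets you refuse anything older than TLS 1.2. `make_tls_context` is a hypothetical helper name, and at-rest encryption and KMS setup are provider-specific and not shown here.

```python
import ssl


def make_tls_context():
    """Client-side TLS context that rejects protocol versions below TLS 1.2."""
    context = ssl.create_default_context()  # certificate and hostname checks on
    context.minimum_version = ssl.TLSVersion.TLSv1_2
    return context
```

Pass the returned context to whatever HTTP or database client you use for services that carry PHI, rather than relying on each library's default.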

Error Handling and Logging

What AI generates: detailed error messages returned to the client, including stack traces, database query details, and sometimes field values that contain PHI. Error logging services receive unfiltered request/response data.

What HIPAA requires: sanitized error responses that never expose PHI, and error logging that redacts or tokenizes sensitive fields before sending anything to a third-party service. The NIST SP 800-66 implementation guide specifically addresses this under Integrity Controls.

Remediation cost: around $2,000-$5,000 for error sanitization across an existing codebase.
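A redaction step in front of the error logger might look like the sketch below. The field list and the SSN pattern are assumptions for illustration only, and a real deployment needs a list derived from its own data model. `redact` and `client_error_response` are hypothetical names.

```python
import re

# Assumed field names; derive the real list from your own schema.
SENSITIVE_FIELDS = {"name", "ssn", "dob", "email", "phone", "address", "mrn", "diagnosis"}
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
REDACTED = "[REDACTED]"


def redact(value):
    """Recursively mask sensitive keys and SSN-shaped strings before logging."""
    if isinstance(value, dict):
        return {
            key: REDACTED if str(key).lower() in SENSITIVE_FIELDS else redact(item)
            for key, item in value.items()
        }
    if isinstance(value, list):
        return [redact(item) for item in value]
    if isinstance(value, str):
        return SSN_PATTERN.sub(REDACTED, value)
    return value


def client_error_response(error_id):
    """What the client sees: a reference ID and nothing from the request."""
    return {"error": "Something went wrong.", "error_id": error_id}
```

The full, redacted context goes to the logging service under the same `error_id`, so support staff can correlate a user report with the server-side record without PHI leaving the system.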

Access Controls

What AI generates: basic role-based access with an admin and a user, maybe a provider role. Permissions are broad, with most API endpoints accessible to any authenticated user.

What HIPAA requires: minimum necessary access, where each user role can reach only the PHI needed for that job function. Fine-grained permissions at the data level, not just the route level. Access reviews and deprovisioning workflows. Emergency access procedures with break-the-glass audit trails.

Remediation cost: around $8,000-$20,000 for a proper RBAC system with minimum necessary enforcement.
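Data-level enforcement means filtering the record itself, not just guarding the route. The sketch below assumes a simple role-to-fields map. The roles and field names are hypothetical, and a real system would also check whether the user has a relationship to the specific patient.

```python
# Hypothetical roles and fields, for illustration only.
ROLE_VISIBLE_FIELDS = {
    "physician": {"name", "dob", "diagnosis", "medications", "insurance_id"},
    "billing": {"name", "insurance_id"},
    "scheduler": {"name"},
}


def filter_record_for_role(record, role):
    """Return only the fields this role needs; unknown roles get nothing."""
    allowed = ROLE_VISIBLE_FIELDS.get(role, set())
    return {key: value for key, value in record.items() if key in allowed}


if __name__ == "__main__":
    record = {"name": "Alex Sample", "diagnosis": "J45.909", "insurance_id": "INS-001"}
    print(filter_record_for_role(record, "billing"))
    # {'name': 'Alex Sample', 'insurance_id': 'INS-001'}
```

Defaulting unknown roles to an empty set keeps the failure mode closed: a role nobody configured sees no PHI rather than all of it.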

Total Remediation vs. Build-Right Cost

| Approach | Cost | Timeline | Risk |
|---|---|---|---|
| Vibe code first, remediate later | $60,000-$100,000 (prototype) + $30,000-$80,000 (remediation) = $90,000-$180,000 | 2-3 months prototype + 2-4 months remediation = 4-7 months | High since it may require architectural rebuilds, not just patches |
| Build compliant from day one | $75,000-$125,000 | 4-6 months | Low since compliance is baked into the architecture |
| Vibe code prototype, rebuild production | $15,000-$30,000 (prototype) + $75,000-$125,000 (production) = $90,000-$155,000 | 1-2 months prototype + 4-6 months production = 5-8 months | Medium since the prototype validates UX before production investment |

The third approach is what we recommend. Use vibe coding for what it’s good at: rapid prototyping, UX validation, and investor demos. Then build the production system properly, informed by what you learned from the prototype. You get the speed benefit of AI tools without the compliance debt.
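The totals in the comparison are simply the low and high ends of each phase added together. A short sketch makes the arithmetic explicit, using the dollar figures from the table (`add_ranges` is a hypothetical helper):

```python
def add_ranges(first, second):
    """Add two (low, high) cost ranges end to end."""
    return (first[0] + second[0], first[1] + second[1])


# Vibe code first, remediate later: prototype + remediation
print(add_ranges((60_000, 100_000), (30_000, 80_000)))   # (90000, 180000)

# Vibe code prototype, rebuild production: prototype + production
print(add_ranges((15_000, 30_000), (75_000, 125_000)))   # (90000, 155000)
```

Both paths start at the same low end. The difference is at the high end and in the risk column, because remediation costs are harder to bound than a planned rebuild.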

A Practical Framework: AI Tools in a Compliant Development Workflow

The goal is not to avoid AI tools in coding but to use them in a way that maintains HIPAA compliance throughout the development lifecycle. Here’s how.

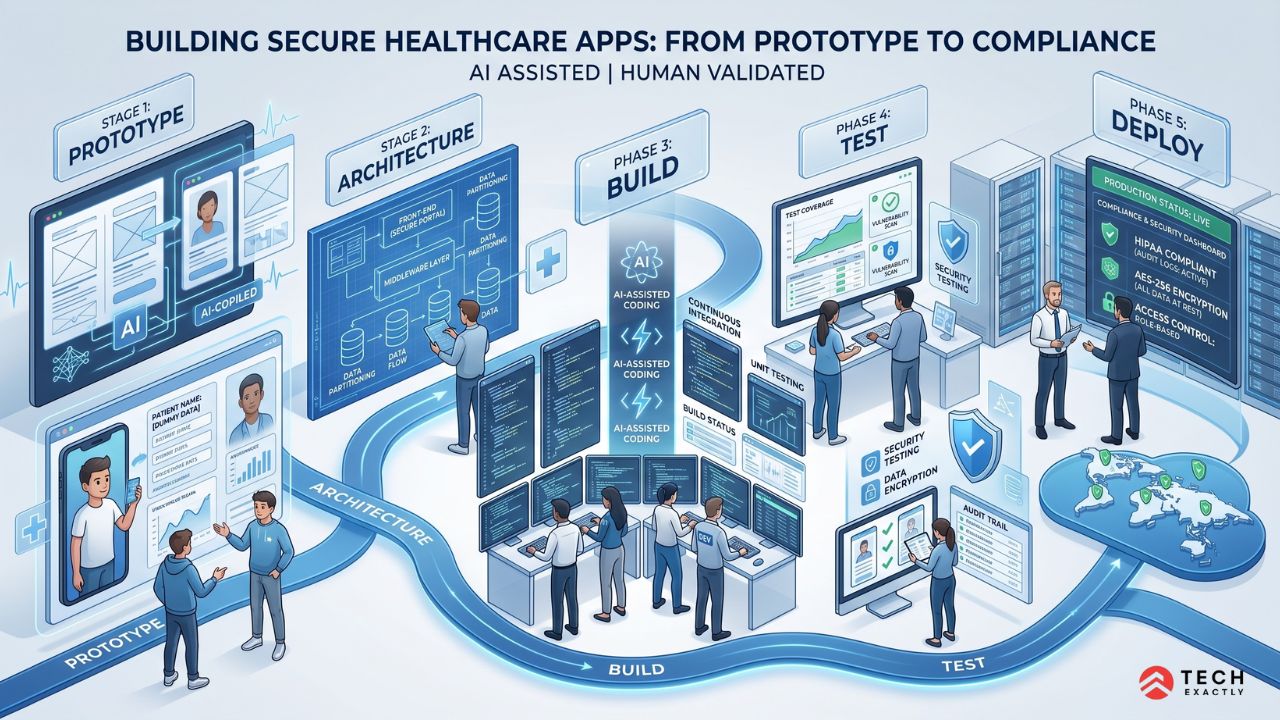

Phase 1: Prototype (Vibe Code Freely)

Tools: Cursor, Replit, Bolt, whatever gets you to a working demo fastest.

Rules:

- Zero real patient data. Use Synthea for synthetic FHIR patient records, Faker for realistic but fake PII.

- The prototype is disposable. It validates UX and workflow assumptions, not production architecture.

- Don’t build authentication, encryption, or audit logging in the prototype. You’ll build these properly in production.
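For quick demos, even a few lines of seeded random data keep real records out of the prototype. The sketch below is a standard-library stand-in, not the Synthea or Faker API. The name lists, the `SYN-` identifier prefix, and the function names are all made up for illustration.

```python
import random
from datetime import date, timedelta

# Obviously fake values so nobody mistakes them for real patients.
FIRST_NAMES = ["Alex", "Jordan", "Sam", "Taylor", "Morgan"]
LAST_NAMES = ["Testpatient", "Sample", "Demo", "Placeholder"]


def make_synthetic_patient(rng):
    """Build one fake patient record from the given random generator."""
    birth = date(1940, 1, 1) + timedelta(days=rng.randrange(0, 365 * 70))
    return {
        "mrn": f"SYN-{rng.randrange(0, 1_000_000):06d}",
        "first_name": rng.choice(FIRST_NAMES),
        "last_name": rng.choice(LAST_NAMES),
        "date_of_birth": birth.isoformat(),
        "synthetic": True,  # explicit marker that later checks can look for
    }


def make_synthetic_patients(count, seed=0):
    """Generate a reproducible list of fake patients."""
    rng = random.Random(seed)
    return [make_synthetic_patient(rng) for _ in range(count)]
```

The `synthetic` flag and the `SYN-` prefix give later pipeline checks something concrete to look for when confirming that a test dataset contains no real records.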

Output: A working demo that proves the concept. User feedback. Investor interest. Feature validation.

Phase 2: Architecture (Design Compliant)

Tools: Whiteboarding, architecture diagrams, threat modeling. AI tools can help draft architecture docs, but a human with HIPAA expertise reviews them.

What gets designed:

- PHI data flow mapping: every place patient data is created, stored, transmitted, or accessed

- Encryption strategy (at rest, in transit, field-level for sensitive data)

- Authentication and authorization architecture (MFA, RBAC, session management)

- Audit logging architecture (what gets logged, where logs are stored, retention policies)

- Security architecture validation before going live

- Infrastructure selection (HIPAA-eligible cloud services, BAA-covered vendors)

- Third-party vendor assessment (which services touch PHI, which need BAAs)
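The vendor assessment in this phase can live as a small inventory that is checked automatically. The sketch below assumes you track two facts per vendor: whether it touches PHI and whether a BAA is signed. The vendor names are placeholders, not recommendations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vendor:
    name: str
    touches_phi: bool
    baa_signed: bool


def vendors_missing_baa(vendors):
    """List vendors that handle PHI without a signed BAA."""
    return [v.name for v in vendors if v.touches_phi and not v.baa_signed]


if __name__ == "__main__":
    inventory = [
        Vendor("cloud-hosting", touches_phi=True, baa_signed=True),
        Vendor("error-tracking", touches_phi=True, baa_signed=False),
        Vendor("marketing-site-cdn", touches_phi=False, baa_signed=False),
    ]
    print(vendors_missing_baa(inventory))  # ['error-tracking']
```

Keeping this list in the repository means adding a new third-party service forces someone to answer the PHI question at review time instead of at audit time.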

Phase 3: Build (AI-Assisted, Compliance-Gated)

Tools: Cursor with Privacy Mode, GitHub Copilot (Enterprise), AI-assisted code generation through API (Claude API or OpenAI API with BAA).

Rules:

- AI generates boilerplate, CRUD operations, test cases, API scaffolding — the parts of development that are repetitive and well-defined.

- Security-critical code (auth, encryption, audit logging, access controls) is human-reviewed before merge. AI can draft it, but a developer with HIPAA expertise validates it.

- CI/CD pipeline includes automated compliance checks: dependency vulnerability scanning, secrets detection, PHI pattern matching in code and test data.

- Code review checklists include HIPAA-specific items: no PHI in error messages, no sensitive data in client-side storage, encryption verified, audit events present for all PHI operations.
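PHI pattern matching in CI can start as a plain regex scan over source and fixture files. The sketch below is a simplified illustration with three assumed patterns. Real scanners use broader rule sets and will still produce false positives that a reviewer has to triage.

```python
import re

# Illustrative patterns only; extend for your own identifiers.
PHI_PATTERNS = {
    "ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "us_phone": re.compile(r"\b\d{3}[-.]\d{3}[-.]\d{4}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def scan_text(text):
    """Return (line_number, pattern_label) for every suspicious line."""
    findings = []
    for line_number, line in enumerate(text.splitlines(), start=1):
        for label, pattern in PHI_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, label))
    return findings


if __name__ == "__main__":
    sample = 'name = "Test"\nssn = "123-45-6789"\ncontact = "a@b.com"'
    print(scan_text(sample))  # [(2, 'ssn'), (3, 'email')]
```

In a pipeline, a non-empty result would fail the build and point the author at the offending lines before the change is merged.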

Phase 4: Test (Verify Compliance)

Tools: Automated testing suites, penetration testing, HIPAA security risk assessment.

What gets tested:

- Automated HIPAA compliance scanning (tools like Drata, Vanta, or Secureframe can automate portions)

- Penetration testing focused on OWASP Top 10 and healthcare-specific vulnerabilities (PHI exposure in APIs, insecure direct object references, authorization bypass)

- A Security Risk Assessment, which is required under the HIPAA Security Rule (the ONC SRA Tool is one way to run it)

- Synthetic data verification to confirm no real PHI leaked into test environments

Phase 5: Deploy (Compliant Infrastructure)

Tools: AWS/GCP/Azure HIPAA-eligible services, all with signed BAAs.

Requirements:

- BAAs executed with every vendor that touches PHI

- Encryption verified in production (at rest and in transit)

- Audit logging is active and monitored

- Backup and disaster recovery are configured and tested

- Incident response plan documented (breach notification within 60 days per HIPAA)

- Ongoing maintenance plan for security patches, dependency updates, and compliance monitoring
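The 60-day breach notification window in the list above is worth encoding in the incident response tooling so nobody computes it by hand under pressure. A minimal sketch, assuming the clock starts on the discovery date and counts calendar days (function names are hypothetical):

```python
from datetime import date, timedelta

BREACH_NOTIFICATION_WINDOW = timedelta(days=60)  # outer limit, calendar days


def notification_deadline(discovered_on):
    """Latest date by which affected individuals must be notified."""
    return discovered_on + BREACH_NOTIFICATION_WINDOW


def days_remaining(discovered_on, today):
    """Calendar days left before the deadline (negative if overdue)."""
    return (notification_deadline(discovered_on) - today).days


if __name__ == "__main__":
    print(notification_deadline(date(2025, 1, 1)))  # 2025-03-02
```

Sixty days is the ceiling, not the target. The rule also expects notification without unreasonable delay, so the plan should aim well inside this window.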


When Vibe Coding Crosses the FDA Line

This is the compliance risk that every vibe coding article ignores. HIPAA governs patient data privacy. But if your app makes clinical decisions such as recommending treatments, interpreting lab results, or flagging diagnostic patterns, the FDA’s Software as a Medical Device (SaMD) framework applies.

The distinction is:

Not a medical device (build freely):

- Appointment scheduling

- Patient intake forms

- Secure messaging between patients and providers

- Billing and practice management

- General health education content

- Data display dashboards (showing information without interpreting it)

Potentially a medical device (requires regulatory strategy):

- Symptom checker that suggests diagnoses

- Algorithm that recommends medication dosing

- AI that reads medical images or lab results

- Mental health assessment tools that score patients

- Any software a clinician relies on for treatment decisions

If your app falls into the second category, you need IEC 62304 software lifecycle compliance. This includes documentation requirements, design controls, risk management per ISO 14971, and potentially a 510(k) submission to the FDA.

IEC 62304 requires traceability from requirements through design, implementation, testing, and release. You need to document why each piece of code exists and how it was validated. This is fundamentally incompatible with a “vibe and see what happens” development approach.
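Traceability of this kind is often kept as a matrix linking each requirement to its design, code, and test artifacts. The sketch below shows one way to check such a matrix for gaps. The record layout and IDs are hypothetical and are not prescribed by IEC 62304 itself.

```python
from dataclasses import dataclass, field


@dataclass
class Requirement:
    req_id: str
    description: str
    design_refs: list = field(default_factory=list)
    code_refs: list = field(default_factory=list)
    test_refs: list = field(default_factory=list)


def traceability_gaps(requirements):
    """Map each requirement ID to the artifact types it is missing."""
    gaps = {}
    for req in requirements:
        links = (("design", req.design_refs), ("code", req.code_refs), ("test", req.test_refs))
        missing = [name for name, refs in links if not refs]
        if missing:
            gaps[req.req_id] = missing
    return gaps


if __name__ == "__main__":
    reqs = [
        Requirement("REQ-001", "Flag out-of-range dose", ["DES-4"], ["dose.py"], ["TEST-12"]),
        Requirement("REQ-002", "Log every recommendation", ["DES-5"], ["audit.py"], []),
    ]
    print(traceability_gaps(reqs))  # {'REQ-002': ['test']}
```

AI-generated code fits into this model as long as every generated file is linked back to a requirement and forward to a test. Code with no requirement behind it is the gap an auditor will find.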

That does not mean you cannot use AI tools for SaMD development. It means you need a structured software lifecycle process that incorporates AI tools as productivity aids, not as the development methodology itself. The documentation burden is real, but it’s manageable within a professional development workflow. We have written about the broader challenges of healthcare software development, including regulatory compliance, interoperability, and scalability.

Beyond HIPAA: The Other Regulations That Apply

Every vibe coding and healthcare article treats HIPAA as the only regulation. It’s not.

FTC Health Breach Notification Rule

If your app collects health data but you are not a HIPAA-covered entity (most health and wellness apps aren’t), the FTC Health Breach Notification Rule applies instead. Breaches must be reported to the FTC and affected individuals. Penalties run up to $50,120 per violation per day.

The FTC has been aggressively enforcing this. GoodRx paid $1.5 million for sharing user health data with advertising platforms. BetterHelp settled for $7.8 million. If your vibe-coded health app shares any user data with third-party analytics or advertising tools, you are exposed.

State Health Data Privacy Laws

- Washington My Health My Data Act: Applies to any entity collecting health data from Washington residents. Requires consent before collecting, sharing, or selling health data. No HIPAA exemption.

- California CCPA/CPRA + CMIA: California’s Confidentiality of Medical Information Act adds protections beyond HIPAA for health data.

- Connecticut, Nevada, and other states: Emerging health data privacy laws with varying requirements.

21st Century Cures Act: Information Blocking

If your app connects to EHRs, the ONC’s Information Blocking rule applies. You cannot build workflows that prevent patients from accessing their own health data or prevent interoperability with other systems. This has architectural implications, particularly around API design and data portability.

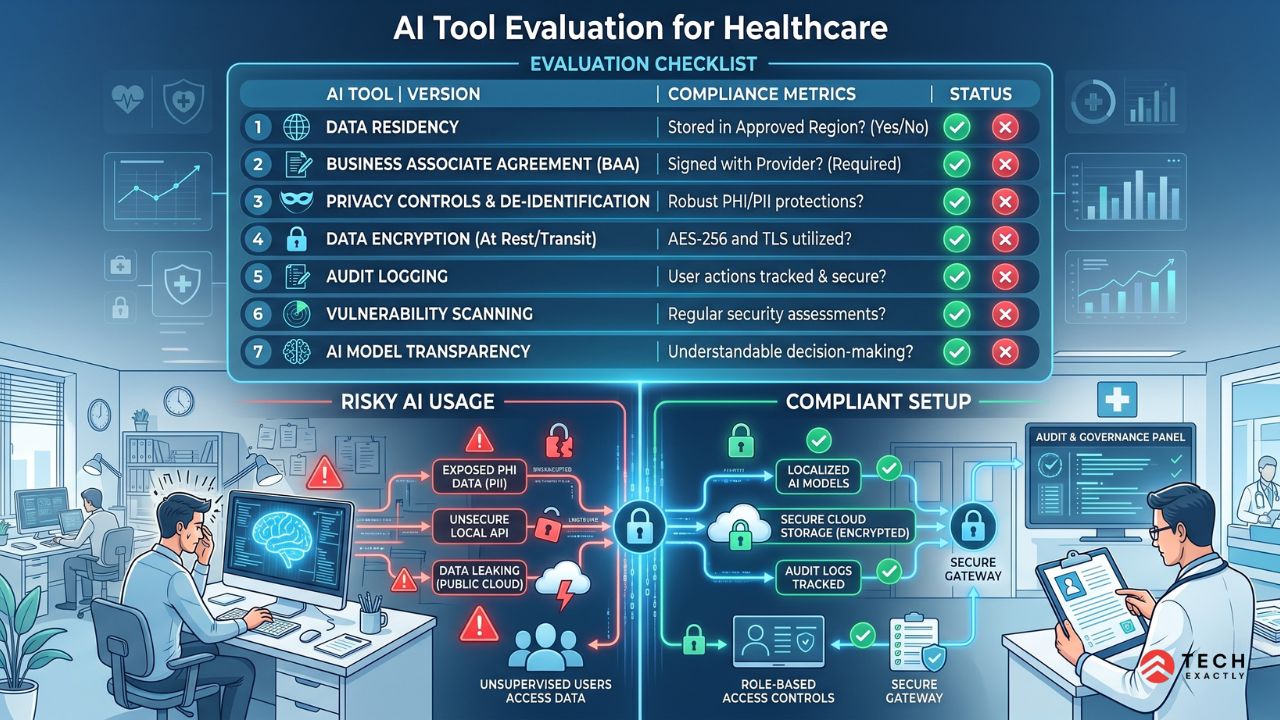

The Right Way to Evaluate AI Coding Tools for Healthcare

If you’re choosing tools for a healthcare development project, here’s what to evaluate:

| Criteria | What to Ask | Why It Matters |
|---|---|---|
| Data residency | Where does my code go when the AI processes it? | PHI in code (even test data leaks) creates HIPAA exposure |
| Training policy | Is my code used to train the model? | If yes, your healthcare logic is in the training set |
| Privacy controls | Can I disable telemetry and code sharing? | Cursor Privacy Mode, Copilot telemetry settings |
| BAA availability | Will they sign a BAA? | Required if the tool could ever process PHI |
| Self-hosting option | Can I run the model locally? | Self-hosted models (for example, Code Llama via Ollama) eliminate data transmission risk |
| Audit trail | Does the tool log who generated what code? | Traceability matters for IEC 62304 and code review compliance |
| Enterprise controls | SSO, team permissions, usage policies? | Governance at the organizational level |

For teams building HIPAA-compliant mobile apps, the safest stack is privacy-controlled AI tools for code generation (Cursor with Privacy Mode, or self-hosted models), cloud infrastructure with a BAA (AWS/GCP/Azure) for deployment, and HIPAA-specific testing automation in CI/CD.

Cost Comparison: AI-Assisted vs. Traditional Healthcare App Development

AI tools change the economics, but not as dramatically as the vibe coding hype suggests.

| Development Approach | Simple App (intake + scheduling) | Standard App (telehealth + EHR integration) | Complex Platform (multi-provider + billing + compliance) |
|---|---|---|---|
| Traditional development | $60,000-$90,000 / 4-5 months | $120,000-$200,000 / 6-10 months | $200,000-$350,000 / 10-14 months |
| AI-assisted (compliant workflow) | $45,000-$70,000 / 3-4 months | $90,000-$160,000 / 5-8 months | $160,000-$300,000 / 8-12 months |
| Vibe-coded then remediated | $70,000-$110,000 / 5-7 months | $130,000-$220,000 / 7-11 months | Not recommended, remediation at this scale is a rebuild |

AI-assisted development in a compliant workflow saves 15-25% on cost and 20-30% on timeline. That’s significant. On a $150,000 project, you are saving roughly $22,500-$37,500 and 1 to 2 months.

The savings come from:

- Faster boilerplate and CRUD code generation (40-50% time reduction on these tasks)

- AI-assisted test writing (30-40% faster)

- Automated documentation generation

- Faster code review with AI-flagged issues

The savings do not come from:

- Skipping architecture design

- Eliminating compliance reviews

- Replacing experienced healthcare developers with AI

- Using AI for security-critical code without human review

Ready to Build a Healthcare App That's Fast and Compliant?

AI coding tools aren’t going away, and they shouldn’t. They make healthcare development faster and more accessible. But faster development without compliance is just faster liability creation.

At Tech Exactly, we use AI tools in our development workflow every day. We also build HIPAA-compliant healthcare applications that pass audits, meet FDA regulatory requirements when applicable, and don’t create compliance debt for our clients.

If you have vibe-coded a healthcare prototype and need to take it to production, or if you want to build right from the start with AI-assisted development, please reach out. We will assess where you are and what it will take to get to compliant, production-ready software.


FAQs on Healthcare App

You can use Cursor to write code for a HIPAA-compliant app. However, this won't make your app compliant. Cursor doesn't sign a BAA and processes your code through cloud-based LLMs. Never put real PHI in your development environment, use Cursor's Privacy Mode to prevent training on your code, and ensure your production infrastructure (hosting, database, APIs) is covered by signed BAAs with HIPAA-eligible services. Replit is riskier since your code stays on their servers, and they explicitly state their standard hosting is not HIPAA-compliant.

Building HIPAA compliance into a new app from day one adds $15,000-$25,000 to a typical $60,000-$100,000 project. This covers encryption architecture, audit logging, access controls, BAA management, and security testing. Retrofitting compliance into an existing non-compliant app costs $30,000-$80,000 and takes 2 to 4 months, because it often requires architectural changes rather than just adding features.

Vibe-coded code can be taken to production in a healthcare app, but not without significant hardening. Vibe-coded prototypes typically lack proper authentication, encryption, audit logging, access controls, and error handling, all of which are required for HIPAA compliance. The recommended approach is to use vibe coding for prototyping and UX validation, then build the production system using a compliant development process informed by what you learned from the prototype.

The tools themselves don't create FDA risk, although what you build with them might. If your app makes clinical recommendations, interprets diagnostic data, or supports clinical decision making, it may qualify as a Software as a Medical Device (SaMD) under the FDA's framework. SaMD requires IEC 62304 software lifecycle compliance, which requires documentation and traceability that unstructured vibe coding doesn't provide. You can still use AI tools for SaMD development, but within a structured lifecycle process.

Cursor with Privacy Mode is currently the strongest option for AI-assisted healthcare development. The editor runs locally, offers privacy controls to prevent training on your code, and integrates with multiple LLMs, although code context is still sent to cloud-hosted models for processing. For teams needing stronger guarantees, self-hosted models via Ollama (running Code Llama or similar locally) eliminate cloud data transmission, at the cost of reduced code quality compared to commercial models.

The FTC Health Breach Notification Rule applies to health apps that aren't HIPAA-covered entities (most consumer health apps). State laws add additional requirements, such as Washington's My Health My Data Act, California's CMIA, and others. If your app connects to EHRs, the ONC's Information Blocking rule under the 21st Century Cures Act applies. And if your app makes clinical recommendations, FDA SaMD classification may apply, triggering IEC 62304 and potentially 510(k) requirements.

Manas Das, Mobile App Architect at Tech Exactly, has over 9 years of experience leading teams in iOS, Android, and cross-platform development. He specialises in scalable app architecture and GenAI-driven mobile innovation.