Security Architecture for Healthcare Apps: What CTOs Must Validate Before Going Live

Key Takeaways:

- Healthcare has had the costliest data breaches of any industry for 13 consecutive years. The average healthcare breach now costs $10.93 million per incident, more than double the cross-industry average. (Source: IBM)

- 75% of healthcare organisations reported a cyberattack in 2023, and the majority of HIPAA violations trace back to access control gaps and missing risk analysis, not sophisticated external attacks.

- HIPAA-compliant software development, GDPR for healthcare apps, and SOC 2 Type I readiness are structural constraints, not project phases. They determine database design, API architecture, and vendor selection from day one.

Most of the information you find online about healthcare app security reads like it was written by someone who has never sat through a HIPAA audit. It lists the usual frameworks (HIPAA, GDPR, SOC 2) and then hands you a checklist that assumes you’re starting from scratch, with a compliance team on standby and a six-month runway before launch.

That’s not the reality at all. CTOs building healthcare apps are dealing with sprint deadlines, investor timelines, EHR integration complexity, and a development team that is excellent at shipping features but has never had to defend an architecture to an OCR investigator.

At Tech Exactly, a healthcare app development company in the USA, we have built HIPAA-compliant platforms, GDPR-regulated healthcare tools, and SOC 2-ready systems for clients in the USA and UK. What we’ve consistently seen is this: the security failures that sink healthcare apps are almost never due to exotic attacks. They are architectural decisions that nobody questioned in sprint one.

Why Healthcare Apps Keep Failing Compliance Audits in the USA and the UK

The root cause is the same on both sides of the Atlantic, and it has nothing to do with sophisticated attacks.

The HHS Office for Civil Rights publishes its HIPAA enforcement findings every year. The single most cited violation, year after year, is failure to conduct an accurate and thorough risk analysis. Not a data breach. Not an insider threat. A failure to ask the right questions before building.

In our experience, that is an architecture problem.

HIPAA-compliant software development is not a layer you apply on top of a finished product. It shapes where PHI can live, who can access it, how every access is logged, which vendors are contractually permitted to touch it, and how you prove all of that when an auditor or a breach notification attorney arrives.

The ICO tells a parallel story in the UK. According to its 2022–23 annual report, the majority of data protection failures it investigated in the health sector traced back to inadequate technical controls and poor data governance.

GDPR for healthcare apps in the UK (UK GDPR) shapes architecture in the same way, except that enforcement sits with the ICO, health data is special category data that needs an Article 9 condition on top of an Article 6 lawful basis, and post-Brexit international data transfer rules add a layer that most US-origin teams miss entirely.

Every decision that defers these constraints moves the cost of compliance from design time to remediation time. In healthcare, remediation is measured in millions; in the UK, fines can reach £17.5 million or 4% of annual global turnover, whichever is higher.

The teams that get this right, whether they’re building for a US hospital network or a UK NHS-adjacent platform, don’t have bigger compliance budgets. They make the architectural decision earlier.

To learn more, read our blog: US & UK Healthcare App Compliance: HIPAA, GDPR, FDA & UKCA

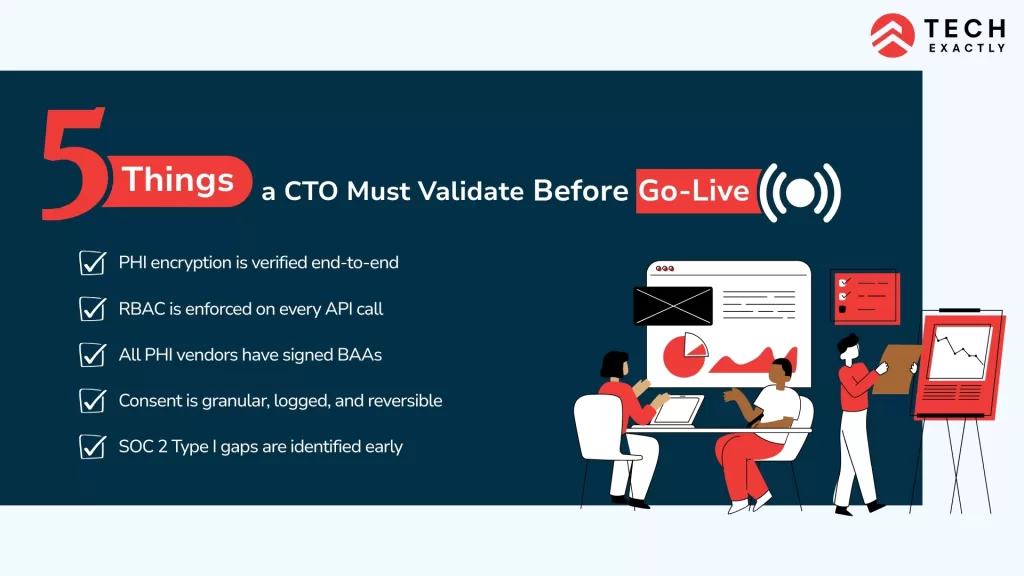

5 Things a CTO Must Validate Before Go-Live

Most of these get missed not because teams don’t know about them, but because they get deprioritised in ways that feel reasonable mid-sprint. By go-live, that window has closed.

1. PHI Encryption Is Verified Across the Full Data Path

AES-256 at rest and TLS 1.3 in transit: most teams know this. What breaks HIPAA-compliant software development in practice is what happens around the encrypted storage: PHI leaking into application logs, error payloads, crash reporting tools, analytics pipelines, or third-party SDKs that capture request bodies containing health data. Encryption at the database level means nothing if your session recording tool is capturing keystrokes in a symptom intake form. Validate the full data path, meaning every system that touches PHI, not just the database.
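One way to close the logging leak described above is a redaction filter attached to every log handler, so PHI is scrubbed before a record reaches a file, a log aggregator, or a crash reporter that ingests logs. The sketch below uses Python's standard `logging` module; the field names in `PHI_KEYS` are hypothetical placeholders for your own data classification. Pattern-based redaction is a last line of defence, not a substitute for never logging PHI in the first place, and this version only scrubs the message text, not exception tracebacks.

```python
import logging
import re

# Hypothetical PHI field names; replace with your own data classification.
PHI_KEYS = ("patient_name", "dob", "mrn", "symptoms", "email")

# Matches `key=value` or `"key": "value"` for any key in PHI_KEYS.
_PHI_PATTERN = re.compile(
    r'(?P<key>"?\b(?:' + "|".join(PHI_KEYS) + r')\b"?\s*[:=]\s*)'
    r'(?P<val>"[^"]*"|[^,\s}]+)'
)


def redact(text: str) -> str:
    """Replace the value of every PHI key with a fixed placeholder."""
    return _PHI_PATTERN.sub(lambda m: m.group("key") + '"[REDACTED]"', text)


class PHIRedactionFilter(logging.Filter):
    """Scrubs the fully formatted message before a handler emits it.

    Only the message is redacted; exception tracebacks are not touched.
    """

    def filter(self, record: logging.LogRecord) -> bool:
        record.msg = redact(record.getMessage())
        record.args = None  # arguments are already merged into msg
        return True


# Attach to the handler (not the logger) so records propagated from
# child loggers pass through the filter too.
handler = logging.StreamHandler()
handler.addFilter(PHIRedactionFilter())
```

Attaching the filter at the handler level is the design choice that matters: logger-level filters only see records logged directly on that logger, so PHI logged by a child logger would bypass them.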

2. Server-Side Role-Based Access Control Is Enforced on Every API Endpoint, Every Time

Frontend role checks are a UI convenience, not access control. Every API endpoint must validate the caller’s identity and permissions at the server layer on every request. Administrators, clinical staff, developers, and support personnel each need explicitly scoped minimum access, and every access event must be written to a tamper-evident, append-only audit log that the application itself cannot modify. Under the HIPAA Technical Safeguards (access control and audit controls), this is non-negotiable. In a SOC 2 Type I review, an auditor will ask for evidence that these controls exist and are suitably designed. “We had RBAC in the UI” is not evidence.
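A minimal, framework-agnostic sketch of server-side, deny-by-default RBAC: every call to a protected handler checks the caller’s role against a permission map and writes the decision to an audit sink before anything else happens. `ROLE_PERMISSIONS`, `Caller`, `require`, and the in-memory `audit_events` list are hypothetical names for illustration; in a real service the caller’s identity would come from a verified token, and the audit sink would be an external append-only store rather than a list.

```python
from dataclasses import dataclass
from functools import wraps
from typing import Callable

# Hypothetical role-to-permission map; real roles come from your IAM design.
ROLE_PERMISSIONS: dict[str, set[str]] = {
    "clinician": {"record:read", "record:write"},
    "support": {"ticket:read"},
    "admin": {"user:manage"},
}


class Forbidden(Exception):
    """Raised when the caller lacks the required permission."""


@dataclass(frozen=True)
class Caller:
    user_id: str  # derived server-side from a verified token, never the request body
    role: str


def require(permission: str, audit: Callable[[dict], None]):
    """Check permission on every call, deny by default, audit both outcomes."""
    def decorator(handler):
        @wraps(handler)
        def guarded(caller: Caller, *args, **kwargs):
            # Unknown roles map to an empty set, so they are denied.
            allowed = permission in ROLE_PERMISSIONS.get(caller.role, set())
            audit({"user": caller.user_id, "permission": permission, "allowed": allowed})
            if not allowed:
                raise Forbidden(permission)
            return handler(caller, *args, **kwargs)
        return guarded
    return decorator


# Stand-in for an external, append-only audit sink.
audit_events: list[dict] = []


@require("record:read", audit_events.append)
def get_record(caller: Caller, record_id: str) -> dict:
    return {"id": record_id}
```

Role checks like this answer “may this role read records at all”; whether a given caller may read a given patient’s record is a separate, per-object check that still has to run inside the handler.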

3. Every Vendor That Touches PHI Has a Signed BAA, Before They Receive Any Data

In HIPAA-compliant software development, a Business Associate Agreement is a legal prerequisite for every vendor that creates, receives, maintains, or transmits protected health information on your behalf: cloud providers, analytics platforms, push notification services, error monitoring tools, EHR integration partners, all of them. AWS, GCP, and Azure all offer HIPAA-eligible services and BAAs, but a BAA only covers the services each provider lists as eligible, and the way it is executed varies by provider (AWS, for example, has you accept it through AWS Artifact). Do not assume the default account agreement covers you; confirm the BAA is executed and that every service you use is on the eligible list.

▶️ Note: Sharing PHI with even one vendor that lacks a BAA is a HIPAA violation, whether or not a breach actually occurs.
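The “before they receive any data” rule can be checked mechanically if you keep a vendor manifest alongside your data flow map. The sketch below assumes a hypothetical manifest format (`Vendor` with a BAA signing date and the date PHI first flowed to that vendor) and flags two failure modes: a PHI-receiving vendor with no BAA at all, and one whose BAA was signed after data had already been sent.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Vendor:
    name: str
    receives_phi: bool
    baa_signed_on: Optional[date]       # None if no BAA has been executed
    first_phi_transfer: Optional[date]  # None if no PHI has been sent yet


def baa_violations(vendors: list[Vendor]) -> list[str]:
    """List PHI-receiving vendors with no BAA, or whose BAA post-dates the first transfer."""
    problems = []
    for v in vendors:
        if not v.receives_phi:
            continue
        if v.baa_signed_on is None:
            problems.append(f"{v.name}: no BAA on file")
        elif v.first_phi_transfer is not None and v.first_phi_transfer < v.baa_signed_on:
            problems.append(f"{v.name}: PHI sent before BAA was signed")
    return problems
```

Run as a CI step, a non-empty result would block a release that adds a new SDK or integration before its BAA is in place. The check is only as good as the manifest, so keeping `receives_phi` accurate is the real work.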

4. GDPR for Healthcare Apps Means Granular, Logged, Reversible Consent

For healthcare mobile app development services targeting UK or EU users, a single consent checkbox does not satisfy GDPR for healthcare apps. Where consent is the basis for processing health data, it must be explicit and specific to each processing activity. The architecture must support consent withdrawal that immediately stops all processing based on that consent, cascades to every downstream processor and data store, and is logged with a timestamp. It must also answer Data Subject Access Requests within one month and act on erasure requests, which means every database, backup, analytics pipeline, and third-party system holding user data must be queryable and deletable through an automated workflow. If that is not architecturally possible on day one, it will not be possible at all.
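A minimal sketch of per-purpose consent with a withdrawal cascade, assuming each downstream processor registers a hypothetical “stop” hook for the purposes it serves. Consent is stored per (user, purpose) pair rather than as one flag, every grant and withdrawal is logged with a UTC timestamp, and withdrawal revokes locally before notifying each registered processor. Purpose names and hook wiring are illustrative, not a prescribed schema.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable

# A processor hook receives (user_id, purpose) and must stop that processing.
StopHook = Callable[[str, str], None]


@dataclass
class ConsentLedger:
    # Hypothetical: purpose -> hooks for every downstream processor or data store.
    processors: dict[str, list[StopHook]]
    events: list[dict] = field(default_factory=list)  # timestamped consent log
    active: set[tuple[str, str]] = field(default_factory=set)

    def grant(self, user_id: str, purpose: str) -> None:
        self.active.add((user_id, purpose))
        self._log(user_id, purpose, "granted")

    def withdraw(self, user_id: str, purpose: str) -> None:
        # Revoke first so any later allowed() check fails immediately,
        # then log and cascade to every processor registered for the purpose.
        self.active.discard((user_id, purpose))
        self._log(user_id, purpose, "withdrawn")
        for stop in self.processors.get(purpose, []):
            stop(user_id, purpose)

    def allowed(self, user_id: str, purpose: str) -> bool:
        return (user_id, purpose) in self.active

    def _log(self, user_id: str, purpose: str, action: str) -> None:
        self.events.append({
            "user": user_id,
            "purpose": purpose,
            "action": action,
            "at": datetime.now(timezone.utc).isoformat(),
        })
```

Withdrawing one purpose leaves the user’s other consents intact, which is the point of granular consent. In production the hooks would typically enqueue durable jobs rather than run inline, so a failing processor cannot silently swallow a withdrawal.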

5. SOC 2 Type I Gap Analysis Is Done Before the First Enterprise Sales Conversation

SOC 2 Type I attests that your security controls are suitably designed at a single point in time. It does not require months of operating history; that is what Type II covers. It does require documented controls, automated evidence collection pipelines, and a vendor review process. For healthcare apps selling to hospital systems, insurance networks, or enterprise clinical platforms in the USA or UK, a Type I report is a procurement prerequisite. Teams that discover this mid-sales-cycle, rather than before the first enterprise call, lose deals. Build toward it from sprint one and it costs little extra; discover it in month eight and it costs weeks.

HIPAA-Compliant Software Development: The Architecture Map Most CTOs Skip

HIPAA’s Security Rule organises its requirements into three safeguard categories. Most engineering teams engage deeply with the Technical tier and treat Administrative and Physical as someone else’s responsibility. They are not. The administrative gaps are where healthcare apps most consistently fail audits.

| Safeguard Category | What HIPAA Requires | What the Architecture Must Deliver |
|---|---|---|
| Administrative | Risk analysis, workforce training, and access management policies | IAM role definitions, documented access review cadence, data flow maps across all PHI touchpoints |
| Physical | Workstation controls, device and media management | Endpoint MDM, remote-wipe capability, encrypted local storage on any device that accesses PHI |
| Technical | Access controls, audit logging, data integrity, transmission security | Server-side RBAC, append-only external audit logs, end-to-end PHI encryption, TLS 1.3 enforcement |
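The “append-only external audit logs” row can be made tamper-evident with hash chaining: each entry stores the hash of the previous entry, so editing any earlier event breaks verification from that point on. The sketch below is an illustration of the technique, not a specific product’s format. Hash chaining detects edits, but anyone able to rewrite the whole chain can recompute every hash, so it complements, rather than replaces, writing to a store outside the application’s write scope.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, event: dict) -> str:
    # Canonical JSON (sorted keys, no extra whitespace) so the hash is stable.
    payload = json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + payload).encode("utf-8")).hexdigest()


def append(chain: list[dict], event: dict) -> None:
    """Append an event linked to the hash of the previous entry."""
    prev = chain[-1]["hash"] if chain else GENESIS
    chain.append({"event": event, "prev": prev, "hash": _digest(prev, event)})


def verify(chain: list[dict]) -> bool:
    """Recompute every link; any edited, reordered, or removed entry fails."""
    prev = GENESIS
    for entry in chain:
        if entry["prev"] != prev or entry["hash"] != _digest(prev, entry["event"]):
            return False
        prev = entry["hash"]
    return True
```

Periodically publishing the latest hash to a separate system (for example, the external log store itself) is what makes a full-chain rewrite detectable as well.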

Three specific Technical controls that are consistently under-implemented across healthcare apps we review before launch:

- Automatic session logoff: an addressable HIPAA Technical Safeguard implementation specification that routinely gets deprioritised as a UX discussion. Addressable does not mean optional. Define the timeout value in the policy and enforce it at the token lifecycle level, not in the frontend.

- Audit log tamper-evidence: writing access events to an application database that the app can modify is not a valid audit control. Logs must be written to an external, append-only system outside the application’s write scope: CloudTrail, a dedicated SIEM, or equivalent.

- Incident response testing: documented procedures that have never been tested against a simulated scenario are treated identically to no procedures under audit conditions. The documentation is not the control. The tested working process is.
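Enforcing automatic logoff “at the token lifecycle level” means the server, not the browser or app, decides when a session is dead. A minimal sketch, assuming a server-side session record checked on every request; the 15-minute idle and 8-hour absolute limits are assumed example values, since HIPAA does not prescribe a number and the real values belong in your written policy.

```python
import time
from dataclasses import dataclass
from typing import Optional

# Assumed policy values for illustration; take the real ones from your written policy.
IDLE_TIMEOUT_S = 15 * 60
ABSOLUTE_TIMEOUT_S = 8 * 60 * 60


@dataclass
class Session:
    issued_at: float   # epoch seconds when the token was issued
    last_seen: float   # epoch seconds of the last authenticated request
    revoked: bool = False


def check_session(s: Session, now: Optional[float] = None) -> bool:
    """Server-side check on every request: enforce idle and absolute timeouts."""
    now = time.time() if now is None else now
    if s.revoked:
        return False
    if now - s.issued_at > ABSOLUTE_TIMEOUT_S or now - s.last_seen > IDLE_TIMEOUT_S:
        s.revoked = True  # once expired, later activity cannot revive the session
        return False
    s.last_seen = now
    return True
```

The absolute limit matters as much as the idle one: without it, a client that keeps polling in the background can hold a session open indefinitely.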

💡 This is how we built a HIPAA-compliant online therapy platform for a New York-based client

Why SOC 2 Type I Comes Up in Every Enterprise Healthcare Sales Cycle

SOC 2 Type I is consistently underestimated as a business requirement. CTOs treat it as a compliance milestone. Enterprise procurement teams treat it as a vendor qualification gate. That mismatch is why deals stall.

Hospital systems, insurance networks, and large clinical platforms in both the USA and UK do not begin vendor onboarding without evidence of security control design. A SOC 2 Type I report answers that requirement. Teams that don’t have it ready are not evaluated and then asked to produce it; they are passed over for vendors who have it.

What SOC 2 Type I readiness requires before the audit:

- A documented control inventory that maps each Trust Services Criterion in scope to a specific technical or administrative control in the architecture. Security is mandatory; Availability, Processing Integrity, Confidentiality, and Privacy are added where relevant

- Automated evidence collection: access logs, encryption configuration exports, change management records, and vendor review documentation that can be produced without manual effort

- SOC 2 reports from your own critical third-party vendors (cloud provider, EHR integration partner, identity provider), because your controls don’t exist in isolation
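The first two readiness items above lend themselves to a simple automated gap check. This sketch assumes a hypothetical control inventory where each control names the criterion it supports and the path of its automated evidence export; the IDs, scope, and paths are illustrative only, and a real SOC 2 mapping goes down to individual criteria rather than the category level used here.

```python
# Hypothetical control inventory; IDs, scope, and evidence paths are illustrative.
IN_SCOPE = ["Security", "Confidentiality", "Privacy"]

CONTROLS = [
    {"id": "AC-01", "criterion": "Security", "evidence": "exports/iam_policies.json"},
    {"id": "EN-02", "criterion": "Confidentiality", "evidence": "exports/kms_config.json"},
    {"id": "VR-01", "criterion": "Security", "evidence": None},
]


def readiness_gaps(in_scope: list[str], controls: list[dict]) -> list[str]:
    """Flag in-scope criteria with no mapped control and controls with no evidence source."""
    gaps = []
    if "Security" not in in_scope:
        gaps.append("Security: mandatory criterion missing from scope")
    covered = {c["criterion"] for c in controls}
    for criterion in in_scope:
        if criterion not in covered:
            gaps.append(f"{criterion}: no mapped control")
    for c in controls:
        if not c["evidence"]:
            gaps.append(f"{c['id']}: no automated evidence source")
    return gaps
```

With the sample inventory, the check reports that Privacy has no mapped control and that `VR-01` has no automated evidence, which are exactly the gaps an auditor would otherwise find for you.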

💡 Here’s one example of how we built an IEC 62304-compliant medical device app, where the architecture had to simultaneously satisfy medical device software lifecycle requirements under IEC 62304 and HIPAA access control standards.

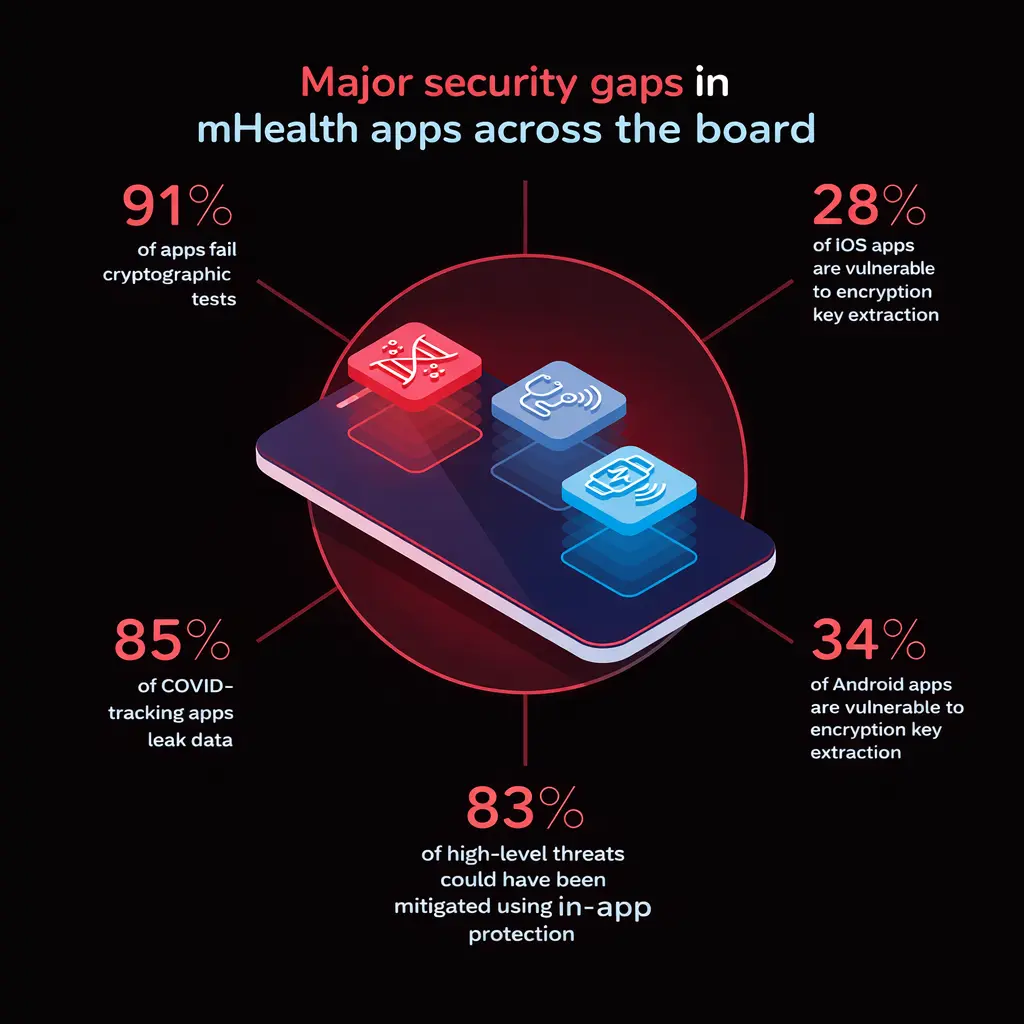

Pre-Launch Penetration Testing for Healthcare Apps: What Standard Pentests Miss

A standard web application pentest is not scoped correctly for healthcare apps. The scope must explicitly address PHI-specific attack vectors that do not appear in general application assessments.

The Verizon Data Breach Investigations Report regularly ranks web application attacks among the leading attack patterns in healthcare breaches, and Broken Object Level Authorisation (BOLA/IDOR) sits at the top of the OWASP API Security Top 10.

Minimum pre-launch coverage for any HIPAA-governed or GDPR-regulated healthcare app:

- BOLA/IDOR testing: can an authenticated user retrieve another patient’s records by modifying a resource identifier in an API request? This is one of the most common API vulnerabilities in production healthcare systems and one of the most frequently missed in generic pentests

- PHI in unintended locations: error responses, HTTP headers, log aggregation systems, analytics dashboards, and webhook payloads are recurring unintended PHI destinations in production environments

- Third-party SDK data flows: network traffic analysis during test sessions regularly identifies data being transmitted to vendor endpoints that was not documented in any integration spec

- Mobile-specific controls: certificate pinning validation, jailbreak and root detection bypass testing, and local storage encryption confirmation for iOS and Android builds
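The BOLA/IDOR item is worth pinning down in code, because it is an object-level check that role-based access control alone does not provide. A minimal sketch, assuming a hypothetical in-memory record store and care-team assignment map: a record is returned only to its own patient or to a clinician assigned to that patient, and “not found” and “not yours” raise the same error so identifiers cannot be probed. The test function mirrors the pentest probe: same authenticated user, modified resource identifier.

```python
class Forbidden(Exception):
    """Single error for 'not found' and 'not yours', so IDs cannot be probed."""


# Hypothetical data: record_id -> owning patient, and clinician care teams.
RECORDS = {
    "rec-100": {"patient_id": "p1", "note": "example"},
    "rec-200": {"patient_id": "p2", "note": "example"},
}
CARE_TEAM = {"dr-a": {"p1"}}


def get_record(caller_id: str, caller_type: str, record_id: str) -> dict:
    """Object-level check: the caller must own, or be assigned to, this record."""
    record = RECORDS.get(record_id)
    if record is None:
        raise Forbidden(record_id)
    owner = record["patient_id"]
    if caller_type == "patient" and caller_id == owner:
        return record
    if caller_type == "clinician" and owner in CARE_TEAM.get(caller_id, set()):
        return record
    raise Forbidden(record_id)


def test_patient_cannot_swap_record_id() -> None:
    """The BOLA probe: same authenticated user, modified resource identifier."""
    assert get_record("p1", "patient", "rec-100")["patient_id"] == "p1"
    for other_id in ("rec-200", "rec-999"):
        try:
            get_record("p1", "patient", other_id)
        except Forbidden:
            continue
        raise AssertionError(f"p1 was able to read {other_id}")
```

A healthcare-scoped pentest runs this probe against every endpoint that takes a resource identifier, including nested ones such as attachments and appointment notes, not just the obvious record endpoint.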

HIPAA requires periodic technical evaluations without specifying a fixed interval. In practice: a full assessment before launch, annually thereafter, and a targeted reassessment after any significant infrastructure or integration change.

Related reading → Top 10 Technologies Transforming Healthcare Mobile App Development in 2026

What to Look for in a Healthcare App Development Company in the USA or UK

A healthcare app development company in the USA or UK that genuinely understands regulated development will bring compliance architecture questions to the first technical conversation: before requirements are finalised, before wireframes are drawn, before a tech stack is selected.

Here is what to actually verify before signing a software development partner:

- They manage their own vendor BAA process and can document it. If a healthcare app development company cannot demonstrate how they handle BAAs within their own supplier relationships, they will not manage it within yours. Ask for it directly.

- Their development standards include server-side access control validation as a default, not as a security sprint added at the end of the project.

- Their pre-launch process covers authentication, data flow auditing, and third-party SDK analysis. Ask what their security review scope actually includes.

- They have shipped healthcare apps through real compliance reviews (HIPAA audits, ICO data processing assessments, or SOC 2 engagements) and can reference the outcome.

Healthcare mobile app development services built to these standards do not cost more to develop. They cost significantly less to remediate, defend under audit, and scale into enterprise sales cycles where compliance evidence is a procurement requirement from day one.

Final Thoughts

Building a secure healthcare app in 2026 is less of a compliance project that happens to involve technology, and more of an architecture decision that compliance follows from.

The CTOs who get this right are not the ones with the largest compliance budgets or the most experienced legal teams. They are the ones who decided in the first week of the project that HIPAA-compliant software development, GDPR for healthcare apps, and SOC 2 Type I readiness were engineering requirements, and structured every sprint accordingly.

The teams that treat compliance as a phase discover its cost at the worst possible time: a week before launch, mid-way through an enterprise sales cycle, or in an HHS investigation letter. At that point, the architecture decisions that need to be revisited are rarely small ones.

At Tech Exactly, we work with healthcare product teams from initial architecture through to production deployment and compliance readiness across HIPAA, UK GDPR, and SOC 2 Type I. We have built regulated healthcare systems for clients in the USA and the UK, navigated real audits, and learned precisely where the architecture decisions that look fine in development create serious problems in production.

If you are building healthcare apps for the US or UK market and want a partner who treats security as a starting constraint rather than a final review, feel free to contact us.

Frequently Asked Questions

Does HIPAA apply if our development team is based outside the US?

If your app handles data from US patients or works with US-based healthcare providers, yes. HIPAA follows the data, not the geography of the development team.

What is the difference between SOC 2 Type I and Type II?

Type I confirms your security controls are suitably designed at a single point in time. Type II confirms they actually operated effectively over an observation period, typically 3 to 12 months. Enterprise healthcare clients typically ask for Type I first, so get that before the sales cycle opens, not after.

Can GDPR compliance be added to a healthcare app after launch?

Not properly. GDPR for healthcare apps requires consent architecture, data flow controls, and deletion workflows that are built into the data model. Retrofitting them into a schema that was not designed for it is expensive, incomplete, and often creates more risk than it removes.

How often should a healthcare app be penetration tested?

Before launch, annually after that, and after any significant change to the architecture or third-party integrations. HIPAA requires periodic technical evaluations without fixing an interval; in practice, annual is the minimum that holds up under scrutiny.

What most often stalls enterprise healthcare deals?

Not having a SOC 2 Type I report or a BAA process ready when procurement asks. Most enterprise hospital systems and insurance networks will not begin vendor evaluation without both. There is no "we'll send it next week"; the shortlist moves on.

Manas Das, Mobile App Architect at Tech Exactly, has over 9 years of experience leading teams in iOS, Android, and cross-platform development. He specialises in scalable app architecture and GenAI-driven mobile innovation.